The scope line is sometimes a bit chonky because it's time synced, and recording causes it to lag a bit. It generally looks a bit better than in the video normally.

You can also make it worse by taking successive screenshots :3

aeva (@[email protected])

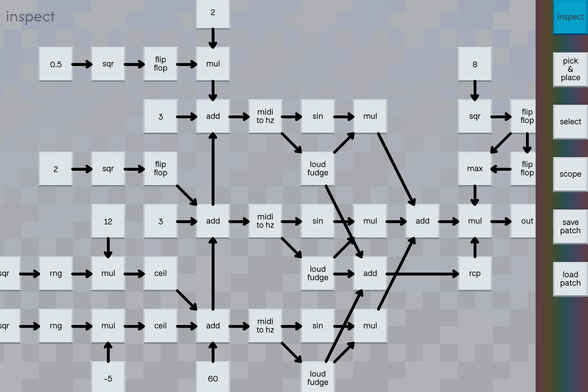

I put it to the test by translating my test patch from my Python frontend to a hypothetical equivalent node-based representation without regard for wire placement, and then stepped through my wire placement rules by hand. I'm very pleased with the results; it's a lot clearer to me at a glance what it does than the Python version is.

I figure if I move the touch screen keyboard into the synth and cut out the trip through the midi subsystem that might make the situation a little better (again, depending on where the real problem is)

Anyways, it got me thinking, I think processing input events synchronously with rendering might be a mistake, and it would be better if I could process input events on the thread where I'm generating the audio.
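One way that idea might look, as a rough sketch and not what the synth actually does: the UI thread timestamps events and drops them into a thread-safe queue, and the audio thread drains the queue at the start of each block. `InputEvent`, `post_event`, and `drain_events` are made-up names for illustration.

```python
import queue
import time
from dataclasses import dataclass


@dataclass
class InputEvent:
    kind: str         # e.g. "touch_down" or "touch_up" (hypothetical labels)
    key: int          # which on-screen key was hit
    timestamp: float  # seconds, from time.monotonic()


# SimpleQueue is thread-safe, so the UI thread can put while the audio thread gets.
events = queue.SimpleQueue()


def post_event(kind, key):
    """Called from the UI/input thread."""
    events.put(InputEvent(kind, key, time.monotonic()))


def drain_events():
    """Called from the audio thread at the start of each block; never blocks."""
    pending = []
    while True:
        try:
            pending.append(events.get_nowait())
        except queue.Empty:
            return pending
```

Draining without blocking matters here because the audio thread can't afford to wait on the UI; the timestamps would let it place each event inside the block instead of snapping everything to the block boundary.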

tragic. pygame.display.flip seems to always imply a vsync stall if you aren't using it to create an opengl context, and so solving the input events merging problem is going to be a pain in the ass. it is, however, an ass pain for another night: it is now time to "donkey kong"

EDIT: pygame.display.flip does not imply vsync! I just messed up my throttling logic :D huzzah!

someone brought up a really good point, which is that depending on how the touch screen works, it may be fundamentally ambiguous whether or not rapidly alternating adjacent taps can be interpreted as one touch event wiggling vs two separate touch events

easy to verify if that is the case, but damn, that might put the kibosh on one of my diabolical plans

ok great news, that seems to not be the case here. I made little touch indicators draw colors for what they correspond to, and rapid adjacent taps don't share a color.

bad news; when a touch or sometimes a long string of rapid touches is seemingly dropped without explanation, nothing for them shows up in evtest either D: does that mean my touch screen is just not registering them?

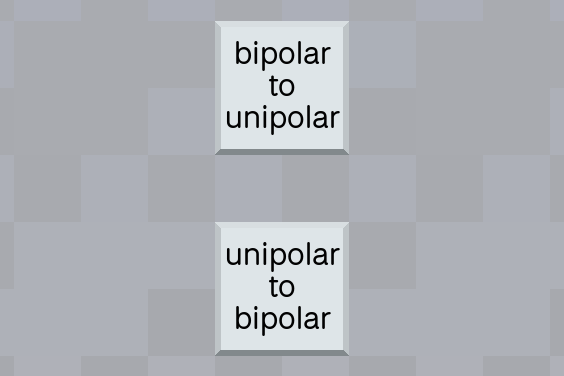

I want to add a pair of instructions for switching between (0, 1) range and (-1, 1) range. so

u = s * .5 + .5

and

s = u * 2 - 1

what are good short names for these operations?

EDIT: can be up to three words, less than 7 letters each, preferably closer to 4 each
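For reference, the two conversions are exact inverses of each other. A tiny Python sketch, with `to_unipolar` and `to_bipolar` as placeholder names only (not a suggested answer to the naming question):

```python
def to_unipolar(s):
    """Map a signal in (-1, 1) to (0, 1): u = s * .5 + .5"""
    return s * 0.5 + 0.5


def to_bipolar(u):
    """Map a signal in (0, 1) to (-1, 1): s = u * 2 - 1"""
    return u * 2.0 - 1.0
```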

why the hell am i outputting 48 khz

i'm pretty sure i can't even hear 10 khz

i love how a bunch of people were like it's because of nyquist duh and then the two smart people were like these two specific numbers are because of video tape and film

EDIT: honorable mention to the 3rd smart person whose text wall came in after I posted this

I'm going to end up with something vaguely resembling a type system in this language because I'm looking to add reading and writing to buffers so I can do stuff like tape loops and sampling, and having different connection types keeps the operator from accidentally using a sine wave as a file handle.

I've got a sneaking suspicion this is also going to end up tracking metadata about how often code should execute, which is kinda a cool idea.
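A minimal sketch of what typed connections could look like, assuming just two wire types. `WireType`, `Port`, and `connect` are hypothetical names, not anything from the actual language:

```python
from enum import Enum, auto


class WireType(Enum):
    SIGNAL = auto()  # audio-rate values, e.g. a sine wave
    TAPE = auto()    # a handle to a memory buffer


class Port:
    def __init__(self, name, wire_type):
        self.name = name
        self.wire_type = wire_type


def connect(output, input_):
    """Refuse to wire an output into an input of a different type."""
    if output.wire_type is not input_.wire_type:
        raise TypeError(
            f"cannot connect {output.name} ({output.wire_type.name}) "
            f"to {input_.name} ({input_.wire_type.name})"
        )
    return (output.name, input_.name)
```

With this, wiring a sine output into a tape-handle input fails at connection time instead of at playback.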

oh, so I was thinking about the sampling rate stuff because I want to make tape loops and such, and I figured ok three new tiles: blank tape, tape read, tape write. the blank tape tile provides a handle for the memory buffer to be used with the other two tiles along with the buffer itself.
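A rough sketch of how those three tiles might fit together, assuming the tape wraps around like a loop. `BlankTape`, `tape_write`, and `tape_read` are illustrative names, and the wrap-around behavior is an assumption, not something stated above:

```python
from array import array


class BlankTape:
    """Hypothetical 'blank tape' tile: owns a fixed-length buffer of doubles."""

    def __init__(self, seconds, sample_rate=48000):
        length = round(seconds * sample_rate)
        self.samples = array("d", [0.0]) * length


def tape_write(tape, position, value):
    """Hypothetical 'tape write' tile: store one sample, wrapping at the end."""
    tape.samples[position % len(tape.samples)] = value


def tape_read(tape, position):
    """Hypothetical 'tape read' tile: fetch one sample, wrapping at the end."""
    return tape.samples[position % len(tape.samples)]
```

Here the `BlankTape` object plays the role of the handle: the read and write tiles only ever touch the buffer through it.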

I thought it would be cute to just provide tiles for different kinds of blank media instead of making you specify the exact buffer size, but I've since abandoned this idea.

bytes per second is 48000 * sizeof(double)

wikipedia says a cassette typically has either 30 minutes or an hour worth of tape, so

48000 * sizeof(double) * 60 * 30

is about 0.64 GiB, which is way too much to allocate by default for a tile you can casually throw down without thinking about.

I thought ok, well, maybe something smaller. Floppy? 1.44 MiB gets you a few seconds of audio lol.

I have since abandoned this idea, you're just going to have to specify how long the blank tape is.
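The arithmetic above, spelled out in Python. This assumes `sizeof(double)` is 8 bytes and takes the floppy as 1.44 MiB, as in the posts; the function names are just for illustration:

```python
SAMPLE_RATE = 48000   # samples per second
BYTES_PER_SAMPLE = 8  # sizeof(double) on typical platforms (assumption)


def tape_bytes(seconds):
    """Bytes needed to hold `seconds` of mono audio."""
    return SAMPLE_RATE * BYTES_PER_SAMPLE * seconds


def seconds_that_fit(num_bytes):
    """Seconds of mono audio that fit in `num_bytes`."""
    return num_bytes / (SAMPLE_RATE * BYTES_PER_SAMPLE)


cassette = tape_bytes(30 * 60)                # 30 minutes of tape
floppy_seconds = seconds_that_fit(1.44 * 2**20)

print(cassette, "bytes =", cassette / 2**30, "GiB")  # 691200000 bytes, about 0.64 GiB
print(floppy_seconds, "seconds on a floppy")         # a bit under 4 seconds
```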

@aeva time to pretend windows doesn't exist and just overcommit memory, it's Literally Free(*)

*: may cause mysterious oom death at some point

@aeva doubles! *spit take* :)

FWIW your usual compact cassette has the equivalent of less than 6 bits/sample, professional reel-to-reel tape under ideal conditions maybe 13

aeva (@[email protected])

i'm using doubles for samples which feels excessive except that std::sinf sounds moldy so that has a reason at least, but i do not know why i picked 48 khz for the sampling rate i probably just copied the number from somewhere but seriously why is 48 khz even a thing what is this audiophile nonsense

@lritter @aeva in analog you get it for free (because it's all stochastic processes anyhow), in digital when decimating down to 6 bits you want dither (tape hiss would be Gaussian dither), and then you don't get a sudden drop-out, you get a nice uniform noise floor.

For cassette tape you get maybe 58, 60 dB SNR. 6 bits dithered gives you ~64dB SNR.

@rygorous @lritter @aeva How does the calculation work for that? I always thought the rule of thumb was 6 dB per bit (calculated as 20*log10(2)).

I know you can exceed the digital noise floor with noise shaping, where the analog lowpass post-DAC removes the noise, but Gaussian noise is in the audible range.
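For reference, the rule of thumb from the question works out like this in Python. It only reproduces the 20*log10(2) figure and doesn't say anything about how dither changes the picture; `rule_of_thumb_db` is an illustrative name:

```python
import math

# Each extra bit doubles the number of amplitude steps,
# and a factor of 2 in amplitude is 20*log10(2) dB.
DB_PER_BIT = 20 * math.log10(2)


def rule_of_thumb_db(bits):
    """Dynamic range in dB by the '6 dB per bit' rule of thumb."""
    return bits * DB_PER_BIT


print(DB_PER_BIT)            # about 6.02
print(rule_of_thumb_db(6))   # about 36.1
print(rule_of_thumb_db(16))  # about 96.3
```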

@aeva i would go with 100 MB Zip disks - that would be suitable capacity for three-minute pop songs

And you could add a click of death simulation

@aeva Sounds like a fun rabbit hole for someone interested in reversible computing to go down, temporal type systems.

I shouldn't be surprised this is already a thing, but I am.

Temporal Type Theory: A topos-theoretic approach to systems and behavior

This book introduces a temporal type theory, the first of its kind as far as we know. It is based on a standard core, and as such it can be formalized in a proof assistant such as Coq or Lean by adding a number of axioms. Well-known temporal logics---such as Linear and Metric Temporal Logic (LTL and MTL)---embed within the logic of temporal type theory. The types in this theory represent "behavior types". The language is rich enough to allow one to define arbitrary hybrid dynamical systems, which are mixtures of continuous dynamics---e.g. as described by a differential equation---and discrete jumps. In particular, the derivative of a continuous real-valued function is internally defined. We construct a semantics for the temporal type theory in the topos of sheaves on a translation-invariant quotient of the standard interval domain. In fact, domain theory plays a recurring role in both the semantics and the type theory.

@aeva That's what I'd like to know.

The summary somewhat made sense up until the last sentence, when it veered off into the weeds of category theory and algebraic geometry.

But what I gather from my skimming is that behavior types are types that describe the set of all possible trajectories of a dynamic system.

And I think the last sentence just means that the rules of the theory are derivable from a partially ordered set of intervals.

@aeva meanwhile, my favourite language: https://opensource.ieee.org/vasg/Packages/-/blob/release/std/standard.vhdl#L296

(also cool: "boolean" is an enumerated type, and so is "character")

@aeva

You need one of these....

https://chaos.social/@axwax/114918779814482679

and a pair of these

https://a.co/d/4hzbWZu

AxWax (@axwax@chaos.social)

Attached: 1 image Needs moar bass! #TinaTheCat #Caturday #SynthCat #StudioCat