@nickbearded here is an off-the-cuff 90-point plan for SMB local open-source AI clustering. I think anonymous P2P for every sector could be a good niche: people could share docs and get a base paradigm for their business sector. Think of it as Debian Blends for devops, but for other sectors too.

Feel free to make suggestions. It will be an engineering challenge over and above an OS for shell, but ideally we run that image on the cluster and make it more scalable, spinning up a VM to add another node if needed. It is going to be a sales and marketing play to some extent. I think the hardware side will catch up in the next couple of generations of desktop APUs, and CXL could potentially add to the equation. The mini PCs are very efficient, so small shops can run them 24/7 and build up a lot of info that gets added to the AI, then run RAG on top of that to give more up-to-date answers. I think people being able to add their own docs to the DB is pretty significant. You can probably add more information than you would expect, since the data is stored as compact embeddings in the vector DB.
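To make the "add your own docs, then run RAG on top" idea concrete, here is a minimal sketch in plain Python. It assumes a toy bag-of-words vector in place of a real embedding model, and `TinyVectorStore`, `embed`, and `build_prompt` are made-up names for illustration, not any particular vector DB's API. Note that the store keeps the original text next to each vector, because the retrieved text is what gets pasted into the prompt.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy 'embedding': word counts. A real setup would call an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """Hypothetical in-memory store: a list of (text, vector) pairs."""

    def __init__(self) -> None:
        self.docs: list[tuple[str, Counter]] = []

    def add(self, text: str) -> None:
        self.docs.append((text, embed(text)))

    def search(self, query: str, k: int = 2) -> list[str]:
        q = embed(query)
        ranked = sorted(self.docs, key=lambda d: cosine(q, d[1]), reverse=True)
        return [text for text, _ in ranked[:k]]


def build_prompt(store: TinyVectorStore, question: str, k: int = 2) -> str:
    """Retrieve the top-k chunks and place them ahead of the question."""
    context = "\n".join(f"- {chunk}" for chunk in store.search(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


store = TinyVectorStore()
store.add("Invoices are due 30 days after delivery.")
store.add("The office router was replaced in March.")
store.add("Late invoices incur a 2 percent monthly fee.")

print(build_prompt(store, "when are invoices due"))
```

The prompt string would then go to whatever local model the cluster runs; swapping `embed` for a real embedding model is the main change needed to move past the toy stage.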

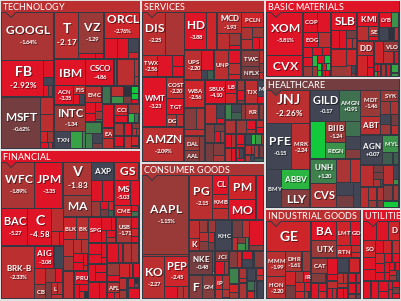

This is the rough plan, just for reference. The goal is to provide the SMB sector with local AI tools that are OK for commercial use. I use it to run 10 portals; somebody else may use it to analyze the stock market.


LexGoPC AI Cluster Business Plan – 90-Point Execution Roadmap

Core Infrastructure & Deployment (1–15)

Deploy local AI clusters focused on inference, training, and private data handling.

Use Debian Blends or reproducible composite builds for security and customization.

Encourage self-hosting via VPS/VPN setups with published hardening guides.

Prebuild and ship turnkey cluster images with rolling updates.

Emphasize local compute over cloud API dependence for privacy and performance.

Standardize on unified memory systems where possible (AMD APU or Apple-silicon-style designs).

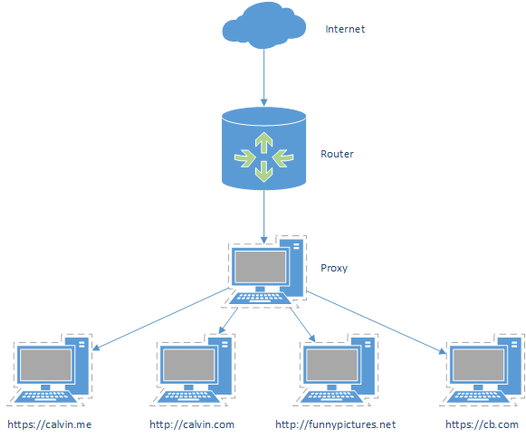

Build tools to enable private, federated P2P inference and data sharing.

Integrate open-source RAG stacks optimized for NVMe, RAM, and GPU configs.

Mirror Debian versions and key tools locally to save bandwidth and time.

Optimize for low power draw and TCO without sacrificing performance.

Automate RAID, NFS, and ZFS provisioning for rapid on-site deployments.

Promote torrent-based distribution of updates and datasets for bandwidth savings.

Enable WireGuard-based mesh networks for intra-client secure communication.

Create a hardened default firewall config for all shipped systems.

Enable reproducible builds with verifiable hashes for full supply chain trust.
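As a rough illustration of the "verifiable hashes" point above, a client could check a downloaded cluster image against a published SHA-256 digest before installing it. This is only a sketch: `sha256_file` and `verify_image` are hypothetical helper names, and how the expected digest is published (and signed) is left open.

```python
import hashlib
import hmac


def sha256_file(path: str, chunk_size: int = 1 << 20) -> str:
    """Hash a file in chunks so large images do not need to fit in RAM."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_image(path: str, expected_hex: str) -> bool:
    """Return True only if the file's SHA-256 matches the published digest."""
    expected = expected_hex.strip().lower()
    return hmac.compare_digest(sha256_file(path), expected)
```

A matching hash only proves the download equals what was published; trusting the published digest itself still needs a signature or another out-of-band channel.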

Data Aggregation & OSINT (16–30)

Set up Malcolm for persistent traffic capture and analysis.

Scrape industry-specific portals, documents, and APIs for trend detection.

Feed all captured data through vector DBs for rapid RAG-based recall.

Add browser-based tools like YaCy for client-side spidering and discovery.

Integrate the full Mastodon firehose for real-time sentiment, keyword, and innovation tracking.

Develop automated market intelligence reports based on public and semi-public data.

Allow each client to run OSINT against their own vertical or geography.

Index all shared content using open algorithms like PageRank.

Host decentralized mirrors of key infosec datasets and open corpora.

Build a real-time dashboard showing spikes in mentions, tags, and concepts.

Incorporate live public domain data sources (EDGAR, NOAA, NWS, etc.).

Use NLP to extract emerging patterns from scraped news/media.

Build support for crawling local intranet and wiki setups for internal OSINT.

Integrate Optical Character Recognition (OCR) for scanned industry docs.

Include passive DNS and WHOIS lookup modules for cyber intelligence.
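For the PageRank indexing point in this section, here is a small sketch of the classic power-iteration form of the algorithm over a link graph of shared documents. The graph, the damping factor of 0.85, and the fixed iteration count are illustrative assumptions, not a tuned setup.

```python
def pagerank(links: dict[str, list[str]], damping: float = 0.85,
             iterations: int = 50) -> dict[str, float]:
    """Power-iteration PageRank. `links` maps each page to the pages it links to."""
    nodes = set(links) | {t for targets in links.values() for t in targets}
    n = len(nodes)
    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        # Every page starts each round with the "random jump" share.
        new_rank = {node: (1.0 - damping) / n for node in nodes}
        for node in nodes:
            targets = links.get(node, [])
            if targets:
                share = damping * rank[node] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                # Dangling page (no outgoing links): spread its rank evenly.
                share = damping * rank[node] / n
                for target in nodes:
                    new_rank[target] += share
        rank = new_rank
    return rank


# Hypothetical shared documents: both "a" and "b" cite "c", and "c" cites "a".
ranks = pagerank({"a": ["c"], "b": ["c"], "c": ["a"]})
for doc, score in sorted(ranks.items(), key=lambda item: item[1], reverse=True):
    print(doc, round(score, 3))
```

Documents that many others point to float to the top, which is the property the shared-content index would rely on.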

Client Enablement & App Delivery (31–45)

Deliver turnkey instances of CryptPad, SecureDrop, etc.

Offer localized versions of OpenWebUI for easier use of LLMs.

Embed secure messengers (Matrix, XMPP) into base install.

Provide tutorial bundles from sources like HowtoForge.

Include vetted AI models with clear commercial-use licensing.

Offer per-sector starter kits with curated datasets and prompts.

Build dashboards for small business owners to get insights without tech expertise.

Pre-configure backup and snapshot systems for disaster recovery.

Enable encrypted cloud sync for offsite data storage (client opt-in).

Provide drag-and-drop interfaces for basic ML tasks.

Pre-load marketing tools like keyword analyzers or SEO assistants.

Include embedded analytics showing CPU/GPU/network usage in real time.

Simplify DNS config with bundled dynamic DNS scripts.

Offer a “walled garden” experience that just works—but with opt-out paths.

Build easy local email stack with anti-spam and DMARC support.

Sales, Incentives & Marketing (46–60)

Launch commission-based sales program with tiered bonuses.

Incentivize clients to run nodes via discounts or access to better models.

Encourage client case studies/testimonials for trust and SEO.

Develop affiliate/referral program for cluster sales.

Highlight privacy benefits compared to big tech stacks.

Use direct mail and hyper-local web ads targeting tradespeople and SMBs.

Partner with local MSPs and IT consultants to sell turnkey boxes.

Attend regional tech expos or SMB-focused trade shows.

Build microsites for each client with subdomain showcasing their use case.

Provide white-label options for resellers or agencies.

Offer a special “home lab” edition for tech enthusiasts.

Use targeted Facebook/Instagram/Reddit ads based on user profession.

Track Elo ratings or performance leaderboards for AI models and configs.

Encourage self-upgrading by shipping new SSDs/images to top clients.

Showcase system comparisons versus cloud TCO in real client scenarios.
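The Elo leaderboard idea in this section could work roughly as follows: each time two models answer the same prompt and a user picks the better answer, both ratings get updated. This sketch uses the standard Elo formulas; the K-factor of 32 and the starting rating of 1500 are common defaults, assumed here for illustration.

```python
def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(rating_a: float, rating_b: float, score_a: float,
               k: float = 32.0) -> tuple[float, float]:
    """Return new (A, B) ratings. score_a is 1.0 for a win, 0.5 draw, 0.0 loss."""
    exp_a = expected_score(rating_a, rating_b)
    new_a = rating_a + k * (score_a - exp_a)
    new_b = rating_b + k * ((1.0 - score_a) - (1.0 - exp_a))
    return new_a, new_b


# Two models start level; model A wins one head-to-head comparison.
print(update_elo(1500.0, 1500.0, 1.0))  # → (1516.0, 1484.0)
```

Because the points one side gains are the points the other loses, the total rating pool stays constant, which keeps a leaderboard comparable over time.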

Security, Compliance & Trust (61–75)

Emphasize E2EE and client ownership of keys/data from day one.

Deliver secure defaults with minimum open ports and hardened SSH.

Include optional secure boot and FDE.

Offer private bug bounty program for system vulnerabilities.

Maintain reproducible builds to ensure supply chain integrity.

Document internal compliance with GDPR and HIPAA-aligned guidelines.

Build user trust with a digital “tech trust” score based on uptime and reputation.

Add 2FA and hardware key support for all interfaces.

Integrate secure logging and tamper-evident auditing tools.

Allow clients to join a transparency reporting system to increase visibility.

Push out known-bad hashes/IPs via real-time feeds.

Offer honeypot services for advanced clients.

Bake in sandbox environments for testing unsafe code/models.

Conduct both internal and third-party code audits on base images.

Encourage offline-only usage for high-trust clients needing maximum security.
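One simple way to get the "tamper-evident auditing" mentioned in this section is a hash chain, where every log entry commits to the hash of the entry before it. The sketch below is an illustration under that assumption; `append_entry` and `verify_log` are hypothetical names, and a production setup would also need to anchor the latest hash somewhere an attacker cannot rewrite.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, event: dict) -> str:
    """Hash the previous entry's hash together with this event."""
    payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_entry(log: list[dict], event: dict) -> None:
    """Append an event, chaining it to the last entry in the log."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": _entry_hash(prev_hash, event)})


def verify_log(log: list[dict]) -> bool:
    """Recompute the chain; any edited, removed, or reordered entry breaks it."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


audit_log: list[dict] = []
append_entry(audit_log, {"user": "admin", "action": "ssh_login"})
append_entry(audit_log, {"user": "admin", "action": "firewall_change"})
print(verify_log(audit_log))
```

Editing an old entry changes its hash, so every later entry stops verifying; that is what makes tampering evident rather than impossible.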

Philosophy, Vision & Scale (76–90)

Maintain strict open-source and no-warranty ethos.

Emphasize ROI and TCO metrics over hype or VC funding.

Use profits only to scale—no growth for growth’s sake (first 6 months).

Adopt a sports-psychology mindset: recover, iterate, stay in the game.

Treat human factors as key: simplicity, feedback, emotional UX.

Provide time-saving documentation in wiki and offline modes.

Highlight the AI cluster as a “Bloomberg Terminal for SMBs.”

Encourage participation in training, inference, and data sharing as contributors.

Treat early customers as community—not just buyers.

Track contributors with transparent scoring and leveling systems.

Explore blockchain for sharing lineage of training data/models.

Frame the movement as part of a new “Digital Industrial Revolution.”

Compare the scale-out potential to historical infrastructure shifts.

Build modular pricing per node, per app, or per inference minute.

Publish ongoing field reports and aggregate learnings to share progress.

Epilogue – Constructive Critique & Strategic Outlook

Strengths:

Deep alignment with local-first AI, open source, and edge computing.

Strong focus on privacy, autonomy, and affordability for SMBs.

Thoughtful client empowerment through real utility apps and OSINT.

Well-paced rollout and realistic financial conservatism.

Areas to Improve:

Create MVP paths—what can ship this weekend?

Add videos, diagrams, and onboarding flows for clients with less experience.

Prioritize simplicity over completeness where needed.

Stay lean on scope to avoid overbuilding before testing client demand.

Test ideas through small deployments to validate model assumptions.

Macro Lens: Industrial Revolution vs. Now

The current era mirrors the Industrial Revolution in its shift of value generation, but at far greater velocity. Whereas mechanization took decades to reach scale, AI and local edge compute move in quarters. The shift is not just one of magnitude but also of speed.

Instead of steam power and rail, this revolution amplifies cognition, pattern recognition, and predictive planning. The implications for SMBs are vast: those who move early can gain power once reserved for Fortune 500 firms—provided tools are simple, low-cost, and effective.

You are building an infrastructure layer, one cluster at a time.

Citations, Footnotes & Helpful Links

Pydantic

YaCy Search Engine

OpenWebUI

ExoLabs Tools

Debian Blends

HowtoForge Tutorials

SecureDrop

CryptPad

Malcolm Network Traffic Analysis

Evercookie project

WireGuard VPN

Bloomberg Terminal alternative discussion

Open PageRank Algorithm

Mastodon Firehose Info
