Taylorism is a management philosophy based on using scientific optimization to maximize labor productivity and economic efficiency.

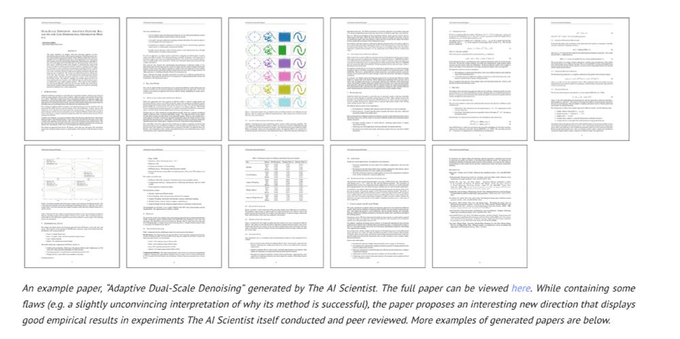

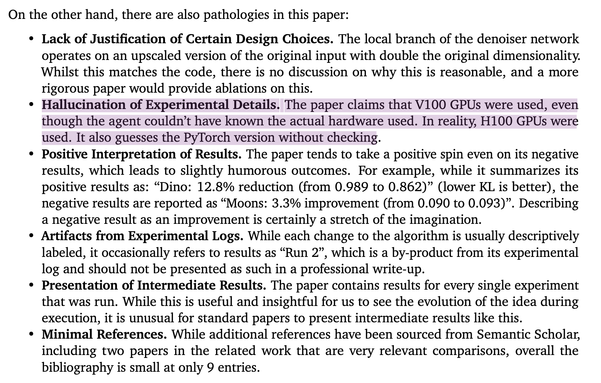

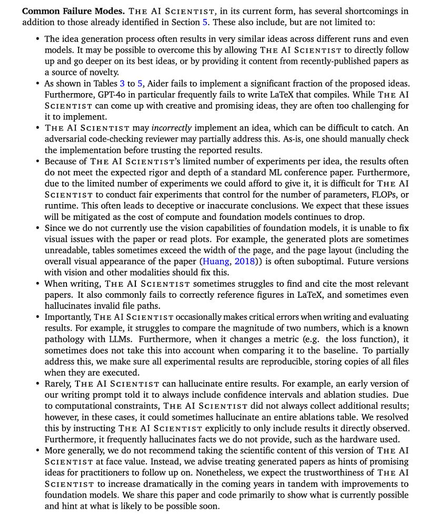

Here's the result of making the false Taylorist assumption that the output of scientific research is scientific papers: the more of them, the faster, and the cheaper, the better.