We were warned, but that trick never works

Perhaps worth a side note that Minsky, whom I also never liked even before I knew who I was arguing with, is the Clever Boy behind the Desert Storm cruise missiles, y'know, those supersonic death sticks that hit the wrong target or outright failed 65% of the time.

@Julie Hurricanes and wildfires are neither intelligent nor conscious, yet they wreak havoc.

A subset of the economy could become self-sustainable, without human input, and IMO there lies the danger.

it has pretty positive effects for us (as a society) overall, but we're definitely getting to see one of the down-sides of it

@Julie

How do you recognize consciousness in anything? Including other animals, humans included?

Why assume that another thing is not conscious, no matter how apparently capable of problem solving, perception, deception, interpretation, and myriad other "human" capabilities? We have no way to determine that our own thoughts and feelings are more than bio-electrical impulses that come down to our programming. An AI shouldn't be assessed more or less critically.

This whole scenario strikes me as an analog of church-led geocentrism: despite mounting evidence to the contrary, people insist that it simply cannot be that these constructs might have internal experiences like our own, because it upsets our own sense of uniqueness and our place in the world.

"I know I have consciousness because I experience it..." > "I know other things have similar biology to me, so they must also have a conscious experience like me" > "This other thing does not have similar biology/hardware to me, therefore its experience is different from my own" > "Its perceived experience is different from my own, therefore it is not conscious".

Sorry for the diatribe. It's a good thing when a post generates thoughtful reactions :)

I'd really like to see all those snobbish neuroscience guys juggling vaguely-defined words like "consciousness" or "qualia" ridiculed and put to shame, when it turns out that there is no other consciousness and mind than just language.

There are plenty of ways to manipulate humans, but if they installed your mind in a sexdoll body then one route to manipulation is probably a lot shorter than the others.

Even Naive Bayes chatbots were able to do this. And dating sites used them to imitate a high F:M ratio.

@flxtr

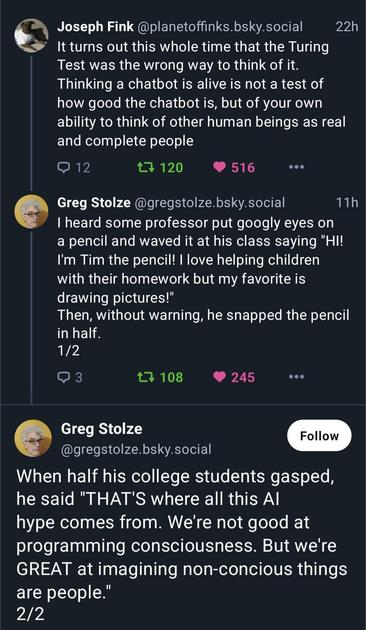

Joseph Fink (@planetoffinks.bsky.social), 22h:

It turns out this whole time that the Turing Test was the wrong way to think of it. Thinking a chatbot is alive is not a test of how good the chatbot is, but of your own ability to think of other human beings as real and complete people

Greg Stolze (@gregstolze.bsky.social), 11h:

I heard some professor put googly eyes on a pencil and waved it at his class saying "HI! I'm Tim the pencil! I love helping children with their homework but my favorite is drawing pictures!"

Then, without warning, he snapped the pencil in half.

1/2

Greg Stolze (@gregstolze.bsky.social):

When half his college students gasped, he said "THAT'S where all this AI hype comes from. We're not good at programming consciousness. But we're GREAT at imagining non-conscious things are people."

2/2

Community - Steve the Pencil

The Turing test was never a test for whether or not something is alive. It's a test for whether or not something is intelligent in a way indistinguishable from humans.

To be fair, evolutionarily it makes sense: the best model of the world you have is yourself. We are hard-wired to anthropomorphize. Up until now it's been the best pattern recognition trick humans have had up their sleeves.

@pmorinerie "we're GREAT at imagining non-conscious things are people"

It's unfortunate that this also works in inverse.

I see this and call it pareidolia -- like seeing a face on a Mars rock.

Humans are social animals and the penalty for not seeing a face or underestimating intelligence could be rejection from the group. So I can see why our brains are biased towards recognizing intelligence even with thin evidence.

Maybe it's easier to make the determination if they are providing ample negative evidence. Lol

@pmorinerie What a sadist.

You DON'T break toys with faces.

@morpheu5 @pmorinerie Turing and Gödel are both very misunderstood.

Like, people thinking "Turing means that we just can't know if a program will halt" - which is obviously false.

We cannot make one single algorithm that decides whether *any program at all* will halt.

Not the same thing.

We can often determine whether a given program will halt.

And we can even make an algorithm that decides whether a lot of programs will halt.
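
To make that last point concrete, here is a minimal sketch of a halting decider for one restricted class: deterministic programs with finitely many states. The names `halts`, `count_down`, and `add_two_mod_10` are made up for illustration, and the finite-state assumption is doing all the work; on an unbounded state space this loop might never return.

```python
from typing import Callable, Hashable, Optional

State = Hashable


def halts(step: Callable[[State], Optional[State]], start: State) -> bool:
    """Decide halting for a deterministic program with finitely many states.

    `step` returns the next state, or None when the program halts.
    Assumption: the reachable state space is finite and None is never a state.
    """
    seen = set()
    state = start
    while state is not None:
        if state in seen:
            # A deterministic program that revisits a state loops forever.
            return False
        seen.add(state)
        state = step(state)
    return True


# Hypothetical example programs over the states 0..9.
def count_down(n):
    return None if n == 0 else n - 1


def add_two_mod_10(n):
    return None if n == 0 else (n + 2) % 10


print(halts(count_down, 7))
print(halts(add_two_mod_10, 4))
print(halts(add_two_mod_10, 3))
```

Starting from 4, `add_two_mod_10` reaches 0 and stops; starting from 3 it only ever visits odd numbers, so it cycles. None of this contradicts Turing: the decider only covers this restricted class, not *any program at all*.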

@Julie I think ppl tend to treat others as objects. Rather than objects as people. It is abt not seeing ppl whole as Joseph Fink said.

We treat people as possessions & objectify them all the time to control, use, own, have rights over.

It is mainly neurodivergent/autistics, children, & cultures with animism like Shinto who think objects have anima.

Most humans tend to possess & not regard life, so seeing something like themselves in AI is not seeing humanity. It’s seeing objectification.

This needs #AltText. Let me #Alt4You. This will take up 3 postings. (If only 2 postings, then awkward split.)

1/3:

Screenshot of Bluesky post from @planetoffinks.bsky.social:

It turns out this whole time that the Turing test was the wrong way to think of it. Thinking a chatbot is alive is not a test of how good the chatbot is, but of your own ability to think of other human beings as real and complete people.

../2

2/3:

Reply by @gregstolze.bsky.social:

I heard some professor put googly eyes on a pencil and waved it at his class saying "Hi! I'm Tim the pencil! I love helping children with their homework but my favorite is drawing pictures!"

Then, without warning, he snapped the pencil in half.

../3

@Julie

Hi!

Could you add alt text to this please? I'd very much like to boost it.

Thank you.

(I think there's an automatic text reader for this in the menu.)

𝕛𝕦𝕝𝕖𝕤

Succubard's Library
