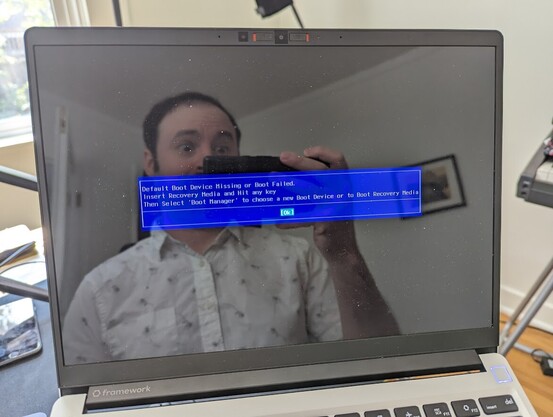

Yesterday morning, I pulled open my laptop to send a quick email. It had a frozen black screen, so I rebooted it, and… oh crap.

My 2-year-old SSD had unceremoniously died.

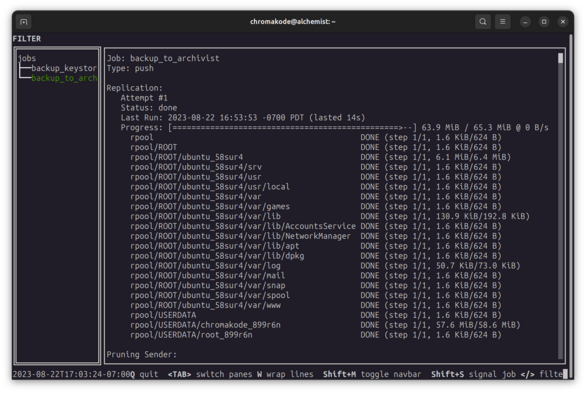

This was a gut punch, but I had an ace in the hole. I'm typing this from my restored system on a brand new drive.

In total, I lost about 10 minutes of data. Here's how I kept the damage that small. (Spoilers: #zfs #zrepl)