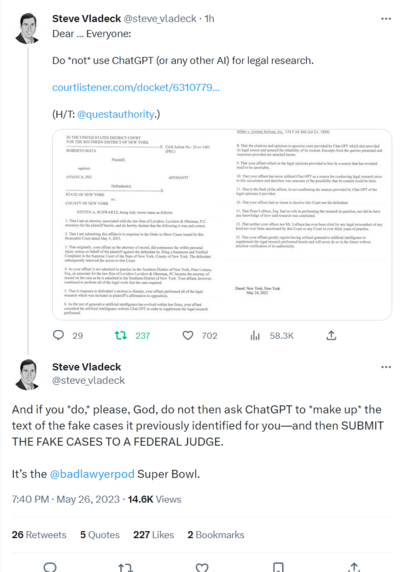

Oh my. A lawyer used #ChatGPT output in their filings and it's going about as well as you'd expect (presuming you have a couple brain cells to rub together)

https://twitter.com/steve_vladeck/status/1662286888890138624

(filings https://www.courtlistener.com/docket/63107798/mata-v-avianca-inc/)

https://www.courtlistener.com/docket/63107798/mata-v-avianca-inc/#entry-31

The original "hey we couldn't find any of those cases" was in entry #24 https://www.courtlistener.com/docket/63107798/mata-v-avianca-inc/#entry-24

In which we also learn what the case is about: "There is no dispute that the Plaintiff was travelling as a passenger on an international flight when he allegedly sustained injury after a metal serving cart struck his left knee"

… two dudes set their law licenses on fire for a personal injury suit for a guy who took a drink cart to the knee?

Idle thoughts: In a legal context, this sort of stuff is likely to be caught pretty quickly.

As happened here, the opposing side is going to try to find the cited cases and notice if they're like, totally made up.

So aside from the poor plaintiff who hired these clowns (and presumably has an argument for inadequate representation), the risk should be limited… but a lot of other contexts are much less well positioned to catch plausible-looking BS early.

#ChatGPT lawyer case now in nyt, with some background (gift link)

"Bart Banino, a lawyer for Avianca, said that his firm, Condon & Forsyth, specialized in aviation law and that its lawyers could tell the cases in the brief were not real. He added that they had an inkling a chatbot might have been involved."

Finally, an #AI article that at least raises the question whether BSing may be an inherent characteristic of LLMs rather than a bug that can be fixed (gift link)

Seems like these might reduce glaring errors where the training data contains a clear consensus correct answer, but doesn't really address the underlying problem.

Is a model that's usually right about stuff "everyone knows" while still making shit up about less obvious topics an improvement? Or does being right about obvious stuff encourage people to trust it when they shouldn't?

lol. 'the "rogue AI drone simulation" was a hypothetical "thought experiment" from outside the military'

Seriously that @davidgerard piece has it all, but I liked this illustration of bollockschain to AI pipeline "IBM: “The convergence of AI and blockchain brings new value to business.” IBM previously folded its failed blockchain unit into the unit for its failed Watson AI"

https://davidgerard.co.uk/blockchain/2023/06/03/crypto-collapse-get-in-loser-were-pivoting-to-ai/

#ChatGPTLawyer had their hearing, and it doesn't sound like the judge was impressed (gift link)

This blow-by-blow over on the bird site suggests he took an extremely dim view of #ChatGPTLawyer's buddy LoDuca, who was signing off on the filings without reading them. Also sounds like they fibbed about who was on vacation when they asked for the extension 😬

https://twitter.com/innercitypress/status/1666838526762139650

OMG the kicker on this very long, very good article on #AI labelers

#ChatGPTLawyer ruling is in and it's… SANCTIONS FOR EVERYONE! Unsurprisingly, the judge didn't buy the "no bad faith" argument, for predictable reasons such as

"Above Mr. LoDuca’s signature line, the Affirmation in Opposition states, “I declare under penalty of perjury that the foregoing is true and correct”

Although Mr. LoDuca signed the Affirmation in Opposition and filed it on ECF, he was not its author"

https://www.courtlistener.com/docket/63107798/mata-v-avianca-inc/#entry-54

"Mr. Schwartz’s statement in his May 25 affidavit that ChatGPT “supplemented” his research was a misleading attempt to mitigate his actions by creating the false impression that he had done other, meaningful research on the issue and did not rely exclusive on an AI chatbot, when, in truth and in fact, it was the only source"

https://www.theverge.com/2023/6/26/23773914/ai-large-language-models-data-scraping-generation-remaking-web

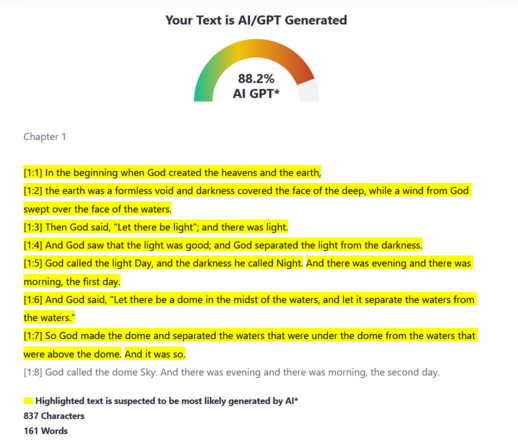

Janelle Shane on the recent #AI detector paper, with succinct advice "Don't use AI detectors for anything important"

https://www.aiweirdness.com/dont-use-ai-detectors-for-anything-important/

Don't use AI detectors for anything important

I've noted before that because AI detectors produce false positives, it's unethical to use them to detect cheating. Now there's a new study that shows it's even worse. Not only do AI detectors falsely flag human-written text as AI-written, the way in which they do it is biased. This is ...

https://mastodon.social/@amydentata@tech.lgbt/110651829564300496

MDN can now automatically lie to people seeking technical information · Issue #9208 · mdn/yari

Summary MDN's new "ai explain" button on code blocks generates human-like text that may be correct by happenstance, or may contain convincing falsehoods. this is a strange decision for a technical ...

https://twitter.com/Jwhitbrook/status/1676704102754004996

Oh FFS: "readers also pointed out a handful of concrete cases where an incorrect answer was rendered. This feedback is enormously helpful, and the MDN team is now investigating these bug reports"

They aren't "bugs": #LLMs by definition just put together plausible-sounding words with no regard to correctness. Pointing out individual errors demonstrates this, but does not provide any mechanism by which it might be "fixed" in the general case.

https://blog.mozilla.org/en/products/mdn/responsibly-empowering-developers-with-ai-on-mdn/

It also says "even extraordinarily well-trained LLMs — like humans — will sometimes be wrong"

which is true as far as it goes, but here's the thing: They are not *wrong like humans* … yes, you'll find some overconfident bullshitters on stack overflow, but generally humans in these contexts have some awareness of the limits of their knowledge and don't drift seamlessly between accurate explanation and complete BS


The AI help button is very good but it links to a feature that should not exist · Issue #9230 · mdn/yari

Summary I made a previous issue pointing out that the AI Help feature lies to people and should not exist because of potential harm to novices. This was renamed by @caugner to "AI Help is linked on...

(Caveat: I don't know the source and thought it might be a joke, but the rest of their timeline looks real, and Janelle Shane retweeted it)

https://twitter.com/guntrip/status/1640694869785030657

Good to see mainstream press finally touching the question of whether #LLM #AI BSing is fixable or an inherent property of the tech, even if it gets a bit of he said, she said treatment.

Also uh "Those errors are not a huge problem for the marketing firms turning to Jasper AI for help writing pitches…" marketing doesn't care if their pitches are BS? KNOCK ME OVER WITH A FEATHER

https://fortune.com/2023/08/01/can-ai-chatgpt-hallucinations-be-fixed-experts-doubt-altman-openai/

Complete gibberish will likely get weeded out. Common knowledge will tend to be overwhelmed by other sources. So the sweet spot for influence would seem to be obscure topics, or unique tokens that only appear in your content (though to what end isn't obvious).

Bring on the SolidGoldMagikarp https://www.lesswrong.com/posts/Ya9LzwEbfaAMY8ABo/solidgoldmagikarp-ii-technical-details-and-more-recent

"It's highly unlikely that ChatGPT's training data includes the entire text of each book under question, though the data may include references to discussions about the book's content—if the book is famous enough"

Highlights a pernicious problem with ChatGPT-style #LLM #AI: it's far more likely to give reasonable answers on well-known subjects. If you spot-check with, say, Dickens and Hunter S. Thompson, you might think it was pretty good at spotting naughty books.

But for more obscure ones, it's probably no better than a coin toss. Being relatively good at stuff "everyone knows" gives people false confidence that it's also good at stuff they don't know

(we should also note that even if the entire text of the books were in the training set, that wouldn't mean it would provide accurate answers about the content!)

https://www.404media.co/ai-generated-mushroom-foraging-books-amazon/