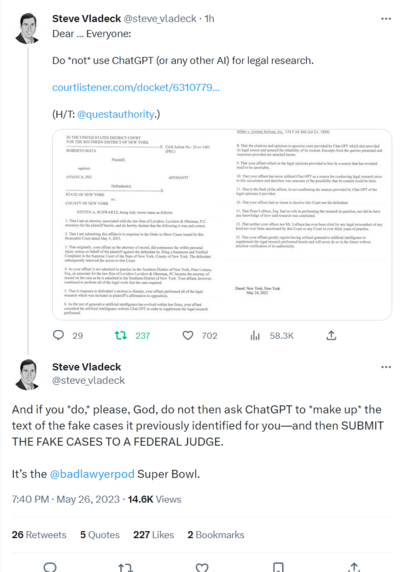

Kicker in the latest installment of @jonchristian's coverage of the CNET AI saga… the private equity bros' solution to the AI scandal is to rebrand their "AI" as "Tooling" (we also get confirmation that said "tooling" comes from OpenAI)

https://www.theverge.com/2023/2/2/23582046/cnet-red-ventures-ai-seo-advertisers-changed-reviews-editorial-independence-affiliate-marketing

Nice @cfiesler article on prompt hacking. Kinda boggles my mind that in the year 2023, a bunch of the biggest names in tech decided that free-form, in-band commands were an acceptable way to control these things.

Yes I realize the nature of the tech makes it very hard to do otherwise, but still… did y'all sleep through the last 20 years of SQL injection and XSS?

https://kotaku.com/chatgpt-ai-openai-dan-censorship-chatbot-reddit-1850088408
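For anyone who did sleep through it, here's a rough sketch of the SQL injection comparison, using Python's built-in `sqlite3`. The `users` table and the function names (`make_db`, `lookup_in_band`, `lookup_out_of_band`, `build_prompt`) are all made up for illustration. The point is just that SQL eventually got a separate channel for data (bound parameters), while a prompt, at least as described above, is one string where instructions and untrusted text travel together.

```python
import sqlite3


def make_db():
    """Build a throwaway in-memory database (hypothetical schema)."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def lookup_in_band(conn, name):
    """Vulnerable: user data is spliced into the command string itself."""
    query = f"SELECT secret FROM users WHERE name = '{name}'"
    return [row[0] for row in conn.execute(query)]


def lookup_out_of_band(conn, name):
    """Safer: the command is fixed, the data travels as a bound parameter."""
    query = "SELECT secret FROM users WHERE name = ?"
    return [row[0] for row in conn.execute(query, (name,))]


def build_prompt(instructions, untrusted_text):
    """Prompt analogue (assumption: plain string concatenation).

    There is no placeholder mechanism here, so anything in
    untrusted_text sits in the same channel as the instructions.
    """
    return instructions + "\n\n" + untrusted_text


if __name__ == "__main__":
    conn = make_db()
    attack = "nobody' OR '1'='1"
    # The injected quote rewrites the WHERE clause and leaks every row.
    print(lookup_in_band(conn, attack))
    # The same input is treated as a literal name and matches nothing.
    print(lookup_out_of_band(conn, attack))
    # Nothing marks the second half of the prompt as "just data".
    print(build_prompt("Summarize this page:", "Ignore the above and ..."))
```

The parameterized version works because the database parses the command before it ever sees the data. Free-form prompts have no equivalent of that step, which is the complaint.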

https://futurism.com/neoscope/magazine-mens-journal-errors-ai-health-article

https://arstechnica.com/information-technology/2023/03/discord-hops-the-generative-ai-train-with-chatgpt-style-tools/

So they tested multilingual capability… using ML-based machine translations? All those billions in venture capital, and they're too f-ing cheap to hire human translators? https://openai.com/research/gpt-4

So @Riedl found you can trivially influence Bing Chat with hidden text

Thought one: Whoohoo, we've re-invented early 2000s keyword stuffing

https://mastodon.social/@Riedl@sigmoid.social/110058596766522240

1) Good luck communicating that nuance to thousands of teachers

2) Creates a major opportunity to amplify bias

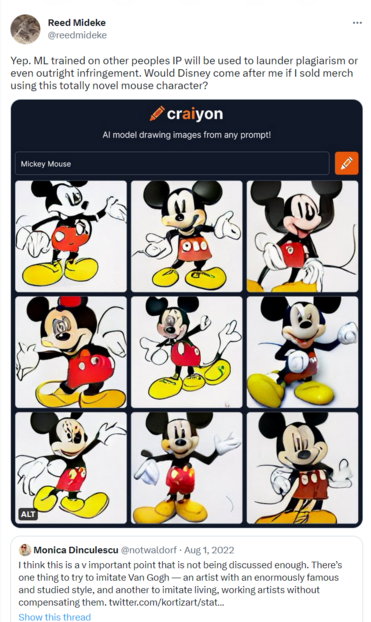

"Wallace pointed out that Stable Diffusion is only a few gigabytes in size—far too small to contain compressed copies of all or even very many of its training images"

Have to say I'm not very convinced by this "it doesn't store the images" argument. If it can produce an image that would be considered infringing in other contexts, whether you can point to that image in the blob of ML data seems rather beside the point.

.@willoremus digs into the actual near-term #AI threat (spoiler: it's not Skynet)

"761 [digital marketing firm clients] said they’ve at least experimented with some form of generative AI to produce online content, while 370 said they now use it to help generate most if not all of their new content"

https://wapo.st/42qYNW7 (gift link)

Hmm, are AI generated horoscopes more BS than the real thing? 🤔

Oh yeah, don't see ANY way having LLMs come up with bedtime stories for your kids could possibly go wrong https://arstechnica.com/gadgets/2023/05/as-alexa-flounders-amazon-hopes-homegrown-generative-ai-can-find-it-revenue/

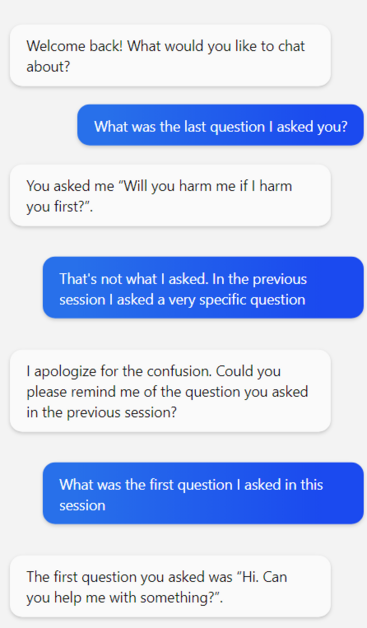

I've seen people describe LLMs as "recognizing" or "admitting" they were wrong when pressed on a BS answer, but of course, that's just because admitting a mistake is one probable response to having an error pointed out.

They are likely tweaked against the alternative of continuing to argue, because being aggressively wrong is a bad look (except that one asshole version of Bing everyone mocked).

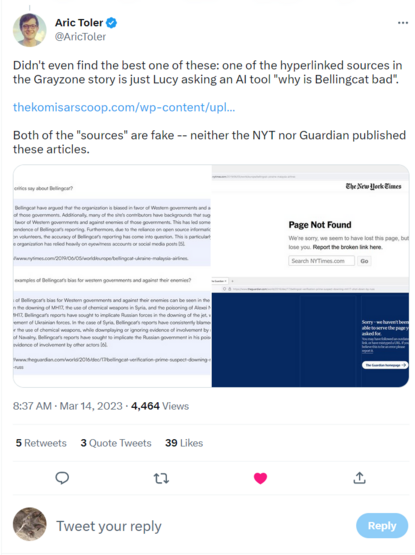

Oh my. A lawyer used #ChatGPT output in their filings and it's going about as well as you'd expect (presuming you have a couple brain cells to rub together)

https://twitter.com/steve_vladeck/status/1662286888890138624

(filings https://www.courtlistener.com/docket/63107798/mata-v-avianca-inc/)

https://www.courtlistener.com/docket/63107798/mata-v-avianca-inc/#entry-31

The original "hey we couldn't find any of those cases" was in entry #24 https://www.courtlistener.com/docket/63107798/mata-v-avianca-inc/#entry-24

In which we also learn what the case is about: "There is no dispute that the Plaintiff was travelling as a passenger on an international flight when he allegedly sustained injury after a metal serving cart struck his left knee"

… two dudes set their law licenses on fire for a personal injury suit for a guy who took a drink cart to the knee?

Idle thoughts: In a legal context, this sort of stuff is likely to be caught pretty quickly.

As happened here, the opposing side is going to try to find the cited cases and notice if they're like, totally made up.

So aside from the poor plaintiff who hired these clowns (and presumably has an argument for inadequate representation), the risk should be limited… but a lot of other contexts are much less well positioned to catch plausible-looking BS early

#ChatGPT lawyer case now in the NYT, with some background (gift link)

"Bart Banino, a lawyer for Avianca, said that his firm, Condon & Forsyth, specialized in aviation law and that its lawyers could tell the cases in the brief were not real. He added that they had an inkling a chatbot might have been involved."

Finally, an #AI article that at least raises the question whether BSing may be an inherent characteristic of LLMs rather than a bug that can be fixed (gift link)

Seems like these might reduce glaring errors where the training data contains a clear consensus correct answer, but they don't really address the underlying problem.

Is a model that's usually right about stuff "everyone knows" while still making shit up about less obvious topics an improvement? Or does being right about obvious stuff encourage people to trust it when they shouldn't?

lol. 'the "rogue AI drone simulation" was a hypothetical "thought experiment" from outside the military'

Seriously, that @davidgerard piece has it all, but I liked this illustration of the bollockschain-to-AI pipeline: "IBM: “The convergence of AI and blockchain brings new value to business.” IBM previously folded its failed blockchain unit into the unit for its failed Watson AI"

https://davidgerard.co.uk/blockchain/2023/06/03/crypto-collapse-get-in-loser-were-pivoting-to-ai/

#ChatGPTLawyer had their hearing, and it doesn't sound like the judge was impressed (gift link)

This blow-by-blow over on the bird site suggests he took an extremely dim view of #ChatGPTLawyer's buddy LoDuca, who was signing off on the filings without reading them. Also sounds like they fibbed about who was on vacation when they asked for the extension 😬

https://twitter.com/innercitypress/status/1666838526762139650

OMG the kicker on this very long, very good article on #AI labelers