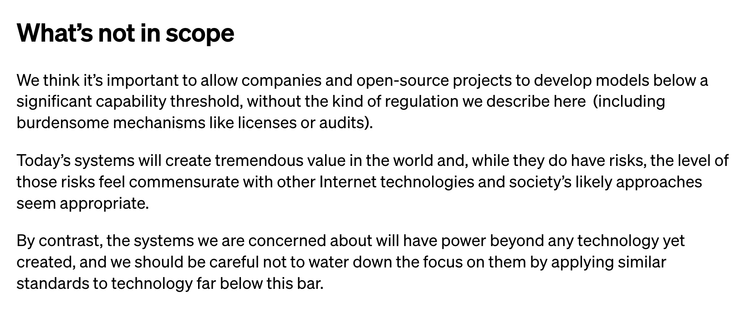

I'm actually kind of surprised that OpenAI wrote this down. Like, straight up stating that though it's so so important that we regulate hypothetical technology that might harm people in the future, we should definitely not regulate the technology that exists today and is harming people in ways we can actually see... is quite a bold strategy. https://openai.com/blog/governance-of-superintelligence

The reason that OpenAI emphasizing regulation for *future* technology bugs me is that I've been using science fiction to talk about tech regulation for years (e.g. https://www.howwegettonext.com/black-mirror-light-mirror-teaching-technology-ethics-through-speculation/), but I try to be really careful to point out that near-future preparation is important, and thinking through the far future in an educational setting is a cool thought exercise, but it's not a good use of our time to actually be writing laws for the robot wars.

@cfiesler Exactly. The best way to be prepared for the robot wars is to train at regulating technology now.

@cfiesler “we should totally regulate the future Death-robot tech, but let’s not get in the way of the innovation that will lead us there!”

@cfiesler yes but you're doing it to think through potential tech regulation, and they're doing it to shape tech regulation to their liking.

@cfiesler ooooo I missed this, thank you! I’m genuinely flabbergasted that the FTC has been like “heyyyy you should make sure you’re being ethical” and OpenAI is like “ummm how about no?”

@cfiesler I don’t think that’s what they’re saying.

@ftranschel @cfiesler I read it to say that they want licensing and audits for future high-capability models, but not *that type of regulation* for current models.

@jtlg @ftranschel I think that's how I read it too... my summary didn't necessarily mean all regulation, but also like, licenses and audits aren't exactly over-the-top regulation?

Considering licenses and audits are precisely some of the really basic mitigations and controls that have been proposed to partially address the current harms, it reads to me like exactly that.

@jtlg @ftranschel @cfiesler do they define what a “high-capability model” looks like? I suspect these goalposts are going to travel at the speed of Moore’s law.

@cfiesler Brazen

@cfiesler obvious play @charles_ex

@cfiesler some people want regulation, other people want to fire bomb their offices, me I'm a centrist so I suggest we regulate fire bombing their offices (only permitted during office hours)

@cfiesler this definitely gives me pause… many people have said this, but it seems worrisome that folks are focused on the long-term consequences… which seems both important in theory and also seems like a distraction from the more certain near-term concerns

@jbigham 💯

@cfiesler 'Commensurate with other Internet Technologies' is quite the standard for 'let's not regulate.' 😆 😭 🙄

@cfiesler it's as if the nuclear industry told congress "Regulate Godzilla, not radioactive waste."