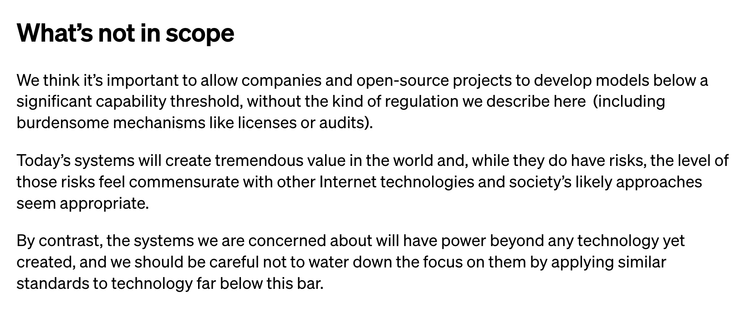

I'm actually kind of surprised that OpenAI wrote this down. Like, straight up stating that even though it's so, so important that we regulate hypothetical technology that might harm people in the future, we should definitely not regulate the technology that exists today and is harming people in ways we can actually see... is quite a bold strategy. https://openai.com/blog/governance-of-superintelligence

The reason OpenAI's emphasis on regulating *future* technology bugs me is that I've been using science fiction to talk about tech regulation for years (e.g. https://www.howwegettonext.com/black-mirror-light-mirror-teaching-technology-ethics-through-speculation/). But I try to be really careful to point out that near-future preparation is important, and that thinking through the far future in an educational setting is a cool thought exercise, but it's not a good use of our time to actually be writing laws for the robot wars.

@cfiesler Exactly. The best way to be prepared for the robot wars is to train at regulating technology now.