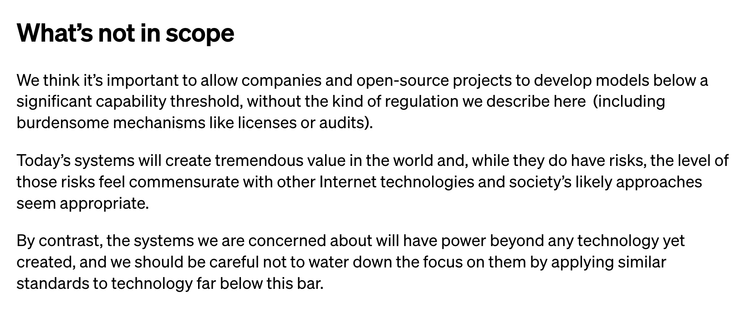

I'm actually kind of surprised that OpenAI wrote this down. Like, straight up stating that though it's so, so important that we regulate hypothetical technology that might harm people in the future, we should definitely not regulate the technology that exists today and is harming people in ways we can actually see... is quite a bold strategy. https://openai.com/blog/governance-of-superintelligence

@cfiesler I don’t think that’s what they’re saying.

@ftranschel @cfiesler I read it to say that they want licensing and audits for future high-capability models, but not *that type of regulation* for current models.

@jtlg @ftranschel I think that's how I read it too... my summary didn't necessarily mean all regulation, but also like, licenses and audits aren't exactly over-the-top regulation?

Considering licenses and audits are precisely some of the really basic mitigations and controls that have been proposed to partially address the current harms, it reads to me like exactly that.

@jtlg @ftranschel @cfiesler do they define what a “high-capability model” looks like? I suspect these goalposts are going to travel at the speed of Moore’s law.