I'm quite puzzled as to whether #ChatGPT has gotten better with citations or not.

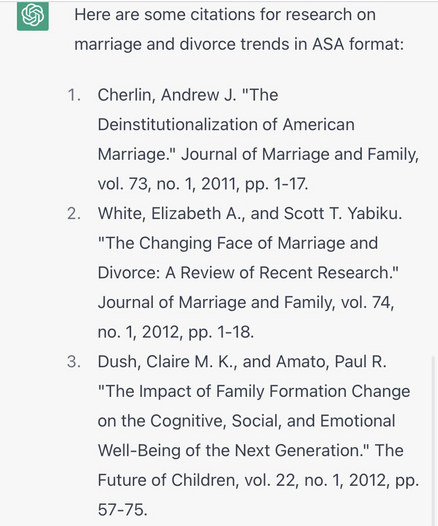

On one hand, it wrote a paper for me about bullshit that contained only references to actual papers. I don't think it used to be able to do that.

After an evening of playing around, I'm wondering whether the change isn't a patch to the code itself at all, but rather that I drifted toward giving it easier prompts, ones for which it has a fuller training set and less need to fabricate references.

The more offbeat the topic, the more fabricated references I get. Some topics that aren't completely crazy (the physics of asparagus, for example) yield only fabricated references.

That said, I'm heartbroken that this is not a real paper.

Salinas-Melgoza, A., Taylor, A. H., and Seed, A. (2020). Wild crows discriminate objects based on their physical properties and cause a small fire to obtain food. Scientific Reports, 10(1), 1-7.

At least it didn't claim crows had learned to use AI to write code to earn their food.

Tbh, finding a good title based on a summary of findings is a good application of ChatGPT in science.

@ct_bergstrom Citing actual papers is a big improvement. The best I ever got from it was a list of fake papers with some author names appropriate to the subject.

I played with it soon after it was released, but I didn't save the outputs.

@bb @ct_bergstrom Doing it in a separate layer (at least filtering it through a separate layer) seems to be the only sane approach.

It’d also be the approach that humans (hopefully) use.