@sergueil37 @b0rk

> I kind of doubt that anyone had the patience to follow all of that arithmetic

I followed it all. Thanks for the explanations! #thingsivewonderedaboutformyentirelife

@b0rk Ooo, can you do one for posits?

https://www.sigarch.org/posit-a-potential-replacement-for-ieee-754/

@[email protected] The "Posits": https://en.wikipedia.org/wiki/Unum_(number_format)

@nathanstocks @steve @b0rk also worth noting that over the last 8 years there have been 3 different versions:

https://en.wikipedia.org/wiki/Unum_(number_format)

so if you had moved to type II posits (say) you'd have to migrate again. i would stay away unless you're interested in doing your own evaluation and implementation.

How does posit distinguish between positive and negative infinity?

The exponent and fraction do not appear to use the same encoding as the regime number. How does one determine the respective lengths of these fields?

What do you do about computations that result in numbers that posit cannot represent, i.e. NaNs in floating point?
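A rough sketch that touches on the three questions above, under stated assumptions: as far as I understand the format, there is a single NaR ("not a real") pattern instead of signed infinities and NaNs, and the field lengths fall out of the regime, which is a run of identical bits ended by the opposite bit. The function name `decode_posit` and its defaults (8 bits, `es=2`) are my own choices for illustration, not a reference implementation.

```python
def decode_posit(bits: int, nbits: int = 8, es: int = 2) -> float:
    """Decode an nbits-wide posit bit pattern into a Python float (sketch)."""
    mask = (1 << nbits) - 1
    bits &= mask
    if bits == 0:
        return 0.0
    if bits == 1 << (nbits - 1):
        # 1000...0 is NaR: the one pattern used for anything unrepresentable.
        return float("nan")

    negative = bool(bits >> (nbits - 1))
    if negative:
        bits = (-bits) & mask  # negative values are stored in two's complement

    # Everything after the sign bit, as a string of '0'/'1'.
    body = format(bits, f"0{nbits}b")[1:]

    # Regime: a run of identical bits, ended by the opposite bit or the word end.
    first = body[0]
    run = len(body) - len(body.lstrip(first))
    regime = run - 1 if first == "1" else -run

    rest = body[run + 1:]  # skip the bit that terminates the regime

    # Up to `es` exponent bits come next; bits cut off by the word end count as 0.
    exp_bits = rest[:es].ljust(es, "0")
    exponent = int(exp_bits, 2) if es else 0

    # Whatever is left is the fraction, with an implicit leading 1.
    frac_bits = rest[es:]
    fraction = int(frac_bits, 2) / (1 << len(frac_bits)) if frac_bits else 0.0

    value = (1.0 + fraction) * 2.0 ** (regime * (1 << es) + exponent)
    return -value if negative else value


print(decode_posit(0b01000000))  # regime run of length 1, no exponent or fraction bits set
print(decode_posit(0b01001000))  # same regime, exponent bits "01"
print(decode_posit(0b10000000))  # NaR
```

So the exponent and fraction lengths are not fixed: a longer regime run leaves fewer bits for them, which is where the tapered precision comes from.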

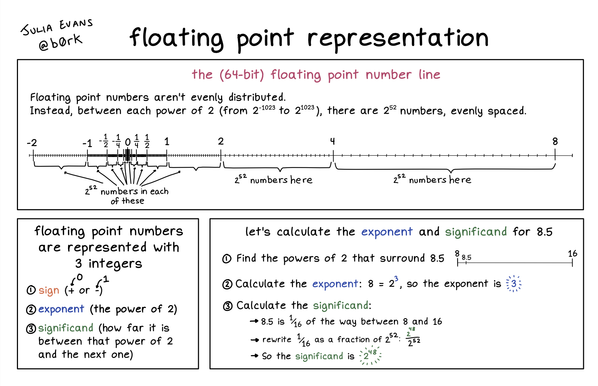

@b0rk This is a pretty spiffy illustration! And I knew that floating point didn't store numbers evenly, but I didn't know it did store them in an even distribution within tiers (powers of 2).
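The "even within tiers" part is easy to check for yourself. Here is a small sketch, assuming Python 3.9+ (for `math.nextafter`) and ordinary 64-bit doubles; the helper name `gaps` is made up for this example.

```python
import math


def gaps(start: float, count: int = 4) -> list[float]:
    """Return the gaps between `count` consecutive doubles starting at `start`."""
    out = []
    x = start
    for _ in range(count):
        nxt = math.nextafter(x, math.inf)  # next representable double above x
        out.append(nxt - x)
        x = nxt
    return out


# Within one power-of-2 tier the gaps are identical;
# moving up one tier doubles them.
for lo in (1.0, 2.0, 4.0):
    print(lo, gaps(lo))
```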

And I didn't realize it was so heavily focused on the center. This might explain the differences in GIMP when I switch between 32bit integer images and 32bit floating point.

Hmmm... 32bit float EXR images are nice, but I'm re-evaluating the utility of 16bit integer PNGs.

@b0rk Now I wonder how the number distribution compares between sRGB 16bit integer and Rec. 709 32bit floating point.

Though I suppose this comparison might not be so cleanly represented by an illustration like this.

@b0rk 🤯

In one toot you have made me completely understand where floating point errors originate!!

Also… does this mean that using floating point for larger numbers is more error-prone than for small numbers?
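One way to poke at that question, as a sketch assuming 64-bit doubles and Python 3.9+ (for `math.ulp`): the absolute gap between neighbouring floats grows with magnitude, while the gap relative to the value stays in the same narrow band.

```python
import math

# Absolute spacing (ulp) grows with x; the spacing relative to x barely moves.
for x in (1.0, 1e8, 1e16):
    print(x, math.ulp(x), math.ulp(x) / x)

# Past 2**53 the gap between doubles is 2, so adding 1 is lost to rounding.
big = 2.0 ** 53
print(big + 1.0 == big)
```

So absolute errors do get bigger for bigger numbers, but relative errors stay roughly the same size.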

@b0rk I think this is something worth knowing about if you really need to manage calculation precision in floating point.

I learned about them for the first time reading about the SANE (Standard Apple Numeric Environment) in the Turbo Pascal for the Macintosh 1.0 manual… circa 1990!

@b0rk @juandesant Subnormals are very important! For example, fl(x - y) = 0 implies x = y (exactly!) if you have subnormals, and if y/2 < x < 2y then fl(x - y) = x - y (no rounding!).
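Both claims can be spot-checked with exact rational arithmetic. This is only a sketch on random samples, not a proof; it assumes 64-bit doubles with subnormals enabled, and the helper names are invented for the example.

```python
import random
import sys
from fractions import Fraction


def subtraction_is_exact(x: float, y: float) -> bool:
    """True if the rounded float result x - y equals the exact rational x - y."""
    return Fraction(x - y) == Fraction(x) - Fraction(y)


def check_nearby_subtraction(trials: int = 10_000, seed: int = 0) -> bool:
    """Sample pairs with y/2 <= x <= 2y and check that x - y never rounds."""
    rng = random.Random(seed)
    for _ in range(trials):
        y = rng.uniform(1e-3, 1e3)
        x = rng.uniform(y / 2, 2 * y)
        if not subtraction_is_exact(x, y):
            return False
    return True


print(check_nearby_subtraction())

# Two distinct numbers near the bottom of the normal range: their difference
# lands below the smallest normal double, but it is a subnormal, not 0.
tiny = sys.float_info.min  # smallest positive normal double, 2**-1022
x, y = 1.5 * tiny, tiny
diff = x - y
print(x != y, diff != 0.0, diff < tiny)
```

On hardware running in a flush-to-zero mode, that last difference would come out as 0 even though x and y differ.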

But if you _don't_ have subnormals, you can't reason like this.

The diagram for subnormals is very simple: zoom in to the smallest and second-smallest exponent, and copy the same resolution between [2^{emin}, 2^{emin + 1}) into [0, 2^{emin}). Without subnormals, there's a huge gap between 0 and 2^{emin}!
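The same picture in numbers, as a quick sketch assuming 64-bit doubles (where 2^{emin} is `sys.float_info.min`) and Python 3.9+ for `math.ulp`; the variable names are just for illustration.

```python
import math
import sys

tiny = sys.float_info.min            # 2^{emin}, the smallest positive normal double

first_normal_gap = math.ulp(tiny)    # spacing in [2^{emin}, 2^{emin + 1})
subnormal_gap = math.ulp(0.0)        # spacing in [0, 2^{emin}), the smallest subnormal
gap_without_subnormals = tiny        # with no subnormals, nothing sits between 0 and 2^{emin}

print(first_normal_gap == subnormal_gap)
print(gap_without_subnormals / subnormal_gap)  # how much bigger the hole near 0 would be
```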