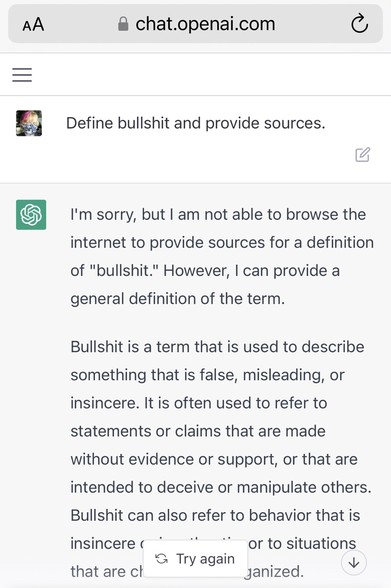

At this point it will surprise no one, but I asked #ChatGPT to define bullshit and to cite its sources.

It provided definitions from the Cambridge English Dictionary and the Merriam-Webster Dictionary.

The definitions it provided were entirely reasonable, but they were decidedly not from the sources it claimed.

This highlights the fact that ChatGPT and other LLMs are not knowledge models; they are themselves engines trained to produce convincing bullshit.

Below: ChatGPT, the Cambridge English Dictionary (CED), and Merriam-Webster (MW).