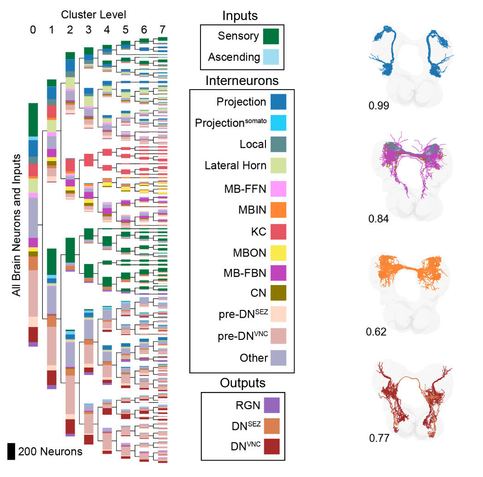

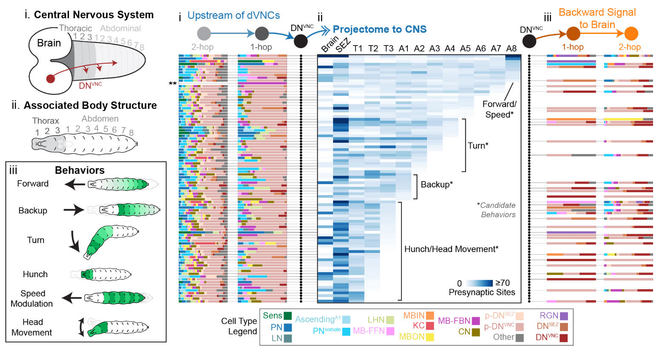

@alex_p_roe For the time being I am content with mapping brain circuits and making sense of them through a combination of genetics, functional imaging, observation of behavioural perturbations, and computational modelling. All of this is possible in a tiny organism and not at all in a large one, at least not if one has the ambition of studying the complete brain at nanometre resolution.

As for the "hallucinations": large language models don't hallucinate. They are simply statistical models of language, and therefore what they generate is what is plausible given the corpus of texts they were trained on. They do not reason. They also fall prey to the curse of dimensionality: the higher the number of dimensions, the more "empty space" (regions of the activation space where nothing makes sense) there is.
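To make the "empty space" point concrete, here is a minimal geometric sketch. It is only an analogy and says nothing about any particular model's activation space: it computes what fraction of a unit hypercube is filled by the largest ball that fits inside it. The function name `inscribed_ball_fraction` is made up for this illustration.

```python
import math


def inscribed_ball_fraction(n_dims: int) -> float:
    """Fraction of the unit hypercube's volume covered by its inscribed ball.

    Uses the standard n-ball volume formula
    V_n(r) = pi^(n/2) / Gamma(n/2 + 1) * r^n with r = 0.5;
    the unit hypercube has volume 1, so V_n(0.5) is already the fraction.
    """
    radius = 0.5
    return math.pi ** (n_dims / 2) / math.gamma(n_dims / 2 + 1) * radius ** n_dims


if __name__ == "__main__":
    # The fraction shrinks rapidly as dimensions are added: almost all of the
    # volume ends up in the "corners", far from the centre.
    for n in (2, 3, 10, 50):
        print(f"{n:>3} dimensions: {inscribed_ball_fraction(n):.3e}")
```

In two dimensions the ball (a disc) covers roughly 79% of the square; by ten dimensions it covers well under 1% of the cube, and the share keeps collapsing from there.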