This is a big problem for Mastodon.

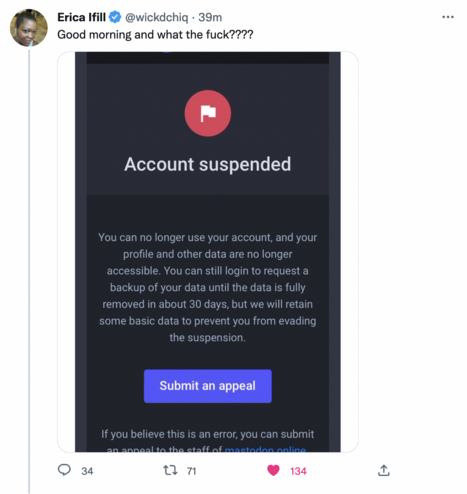

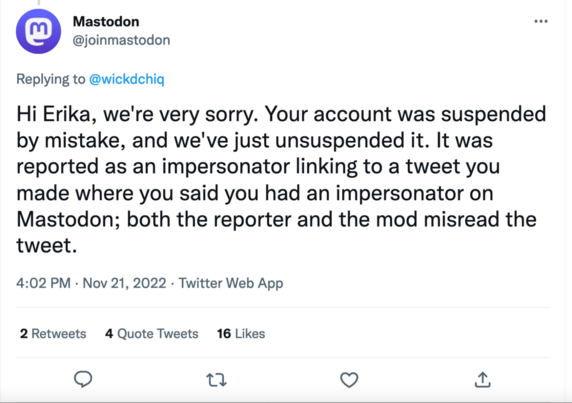

Canadian journalist Erica Ifill just had her mastodon.online account suspended without explanation. She's been sharing posts critical of Mastodon on intersectional issues.

I get the decentralized structure and the idea that each server has its own rules. But this Reddit-style moderation, where moderators with god complexes make mysterious and arbitrary decisions, is going to cause people migrating to Mastodon to flee in droves.