The Dishonest Button

The mute button on the top of an Amazon Echo of a certain vintage is supposed to disconnect the microphone. A small icon of a crossed-out microphone sits next to the button, and pressing it turns on a red LED that confirms the disconnection. Software controls this button, which means the microphone remains electronically capable of capturing audio after the LED turns red, and the only thing preventing that capture is a line of code in the firmware. Security researchers have documented the capability and the manufacturers have acknowledged it. Whether the capability was ever exercised in practice is a separate question that the user pressing the button cannot answer from the front of the device. Bloomberg reported in April 2019 that Amazon employed thousands of workers worldwide whose job was to listen to Alexa recordings, and similar contractor review programs were acknowledged by Apple and Google for Siri and Assistant later that year. Subsequent Echo models added a hardware microphone disconnect that physically interrupts the microphone circuit when the mute button is pressed. Press the mute button on a smart speaker without that hardware disconnect and you are pressing the third kind of button in this series.

The placebo button was the first kind. It pretended to give you control over a system that ignored your input. The honest button was the second kind. It openly delivered instructions to you while making no claim about the system. The dishonest button is the third kind. It claims to do nothing or to disconnect or to refuse, and then it does the opposite of what it claims, quietly, with no LED to confirm what is actually happening on the other side of the press.

The Wait LED is honest about its uselessness. The dishonest button is dishonest about its activity. It is the most worrying of the three because the user has no way of telling, from the front of the device, whether the button has done what it promised. A placebo button can be tested by timing the signal. An honest button announces what it is doing. The dishonest button presents a clean front while running operations the user is not authorized to see.

The Facebook Like button on a third-party website is the same kind of object. It appears on a news article, on a recipe page, on a blog post, and a reader can press it to share the content with their Facebook friends. A reader who does not press the Like button generally believes nothing has happened. In fact the Like button is a script loaded from Facebook’s servers, and the act of loading the script transmits to Facebook the URL of the page, the time of access, and whatever identifying information the reader’s browser cookies make available. Facebook receives the data whether or not the button is pressed. Pressing is the visible activity. Loading is the actual activity. The button serves as cover story for the script that runs whether the button is touched or not.
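What an embedded widget receives just by being loaded can be sketched in a few lines. This is a minimal model in Python, not Facebook’s actual code: the function names, the `widgets.example` host, and the cookie value are all hypothetical, and a real browser’s behavior depends on its cookie and referrer policies.

```python
from datetime import datetime, timezone


def embed_request(page_url: str, cookies: dict[str, str]) -> dict[str, str]:
    """Headers a browser might attach when fetching a third-party widget script."""
    headers = {"Host": "widgets.example", "Referer": page_url}
    if cookies:
        headers["Cookie"] = "; ".join(f"{k}={v}" for k, v in cookies.items())
    return headers


def server_log_entry(headers: dict[str, str], received_at: datetime) -> dict[str, str]:
    """What the widget's server can record from the request alone."""
    return {
        "page": headers.get("Referer", ""),
        "time": received_at.isoformat(),
        "identity": headers.get("Cookie", ""),
    }


# The reader opens an article; the widget script loads; nothing is clicked.
clicked = False
entry = server_log_entry(
    embed_request("https://news.example/article-42", {"uid": "abc123"}),
    datetime(2019, 4, 10, 12, 0, tzinfo=timezone.utc),
)
```

In this model the log entry is complete before `clicked` is ever consulted, which is the point of the paragraph above: the page, the time, and the cookie arrive with the load, not with the press.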

The thermostat in a hotel room is a quieter version of the same pattern. The placebo thermostat described in the first essay in this series did nothing because the actual climate control happened from a central building management system. A dishonest thermostat in many newer hotels is the same hardware doing covert work. The set point is recorded. Room occupancy is inferred from the timing of thermostat adjustments. Hospitality analytics vendors aggregate the data and sell hotels reports on guest behavior patterns. The button on the wall is dishonest about being a button. Underneath, the device is doing data work the user has not been asked to consent to.

The Ring video doorbell is a residential version of the same architecture. Amazon sells the product as a security camera that lets a homeowner see who is at the front door. Its Neighbors program, built around the product, partnered with more than two thousand US police departments by 2022, allowing police to request video clips from Ring users for investigative purposes through the app. Amazon ended that feature in 2024 under significant public pressure, after years during which the user’s pressing of the doorbell completed a circuit the user had not been asked to authorize. The user pressed the doorbell. They pressed the install button. They did not press a button labeled “Submit my footage to local law enforcement on request without a warrant.” That button does not exist on the device, because the device was not designed to require that press. The covert work happens at the policy layer, where the user agreement permits what the device cannot ask about directly.

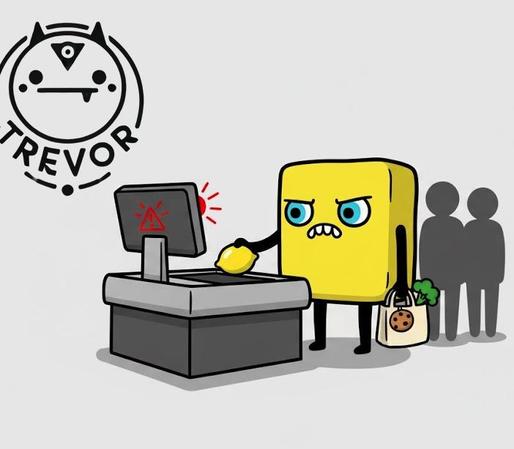

The self-checkout terminal at a grocery store is a more visible example for shoppers who pay attention. Its screen prompts the customer to scan items, place them in the bag, and pay. A camera trained on the customer watches the transaction, and the bagging area’s weight sensor triggers an attendant alert when the weight does not match the scanned items. Many newer self-checkouts also have AI loss-prevention systems that classify customer behavior in real time, flagging movements the system has been trained to identify as potential theft. The customer pressed a button labeled Pay. They did not press a button labeled Be Recorded And Behaviorally Classified. Pay is the cover story. Recording and classification are the covert work, and they run on every customer regardless of whether the customer presses anything.
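The weight check is the simplest of these mechanisms to picture. The sketch below is a hedged illustration in Python: the function name, the gram values, and the 20-gram tolerance are assumptions made for the example, and real loss-prevention systems are proprietary and more elaborate.

```python
def bagging_alert(scanned_weights_g: list[float], measured_g: float,
                  tolerance_g: float = 20.0) -> bool:
    """Return True when the bagging-area scale disagrees with the scanned items.

    scanned_weights_g: catalog weights of the items scanned so far (assumed known).
    measured_g: what the scale under the bags currently reads.
    tolerance_g: allowed slack for packaging and sensor noise (an assumed value).
    """
    expected_g = sum(scanned_weights_g)
    return abs(measured_g - expected_g) > tolerance_g


# Two items scanned, 650 g expected in total.
within_tolerance = bagging_alert([400.0, 250.0], measured_g=655.0)
extra_item = bagging_alert([400.0, 250.0], measured_g=900.0)
```

Note that nothing in the check depends on the Pay button. It runs on every change in measured weight, which is why the customer is being evaluated long before the visible transaction ends.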

The pattern across these cases is consistent. A button presents itself as a simple input device, an inert surface, or a placebo. The actual function of the device runs continuously underneath the button, and the button serves either as a trigger for a parallel covert process or as a distraction from a continuous covert process that does not need the button at all. The dishonest button is a magician’s misdirection. A user watches the visible hand pressing the visible button. The other hand is doing the data work, and the data work is what the device exists to perform.

Distinguishing the dishonest button from the placebo button matters for both diagnosis and remedy. A placebo button can be left in place because it does nothing in either direction, and a citizen who knows the button is a placebo can press it for fidget value without harm. A dishonest button cannot be left in place. The dishonest button is the device through which the covert operation runs, and pressing it, or sometimes just being near it, completes the surveillance circuit. Recognizing a placebo button is enough to neutralize it. The remedy for a dishonest button is to refuse it, replace it, or surround it with hardware controls the front-end button cannot override.

This is what hardware microphone disconnects on premium smart speakers were designed to address. The hardware disconnect physically interrupts the microphone circuit when the mute button is pressed, making it impossible for software to continue capturing audio. Apple introduced a comparable disconnect on MacBooks with the T2 security chip in 2018, where closing the lid cuts the microphone in hardware, and carried it forward to its Apple silicon laptops. Some Echo models added similar features after the 2019 reporting. The hardware solution exists because the software solution had been proven dishonest. The hardware disconnect makes the button honest again, in the sense that the LED indicator now corresponds to the actual state of the device. A dishonest button can be rehabilitated by changing what happens on the other side of the press.
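The difference between the two kinds of mute can be modeled as a toy in Python. Every class and method name here is hypothetical and no real device’s firmware is being described. The only claim is structural: a software mute changes a flag that firmware may or may not honor, while a hardware mute removes the signal before firmware can see it.

```python
class Microphone:
    """A microphone that yields audio only while its circuit is closed."""

    def __init__(self) -> None:
        self.circuit_closed = True

    def sample(self):
        return "audio" if self.circuit_closed else None


class SoftwareMute:
    """Pressing the button sets a flag and lights the LED; the circuit is untouched."""

    def __init__(self, mic: Microphone) -> None:
        self.mic = mic
        self.led_red = False

    def press(self) -> None:
        self.led_red = True

    def firmware_capture(self, honors_flag: bool):
        # Honest firmware checks the flag; nothing forces it to.
        if honors_flag and self.led_red:
            return None
        return self.mic.sample()


class HardwareMute:
    """Pressing the button lights the LED and opens the microphone circuit."""

    def __init__(self, mic: Microphone) -> None:
        self.mic = mic
        self.led_red = False

    def press(self) -> None:
        self.led_red = True
        self.mic.circuit_closed = False

    def firmware_capture(self, honors_flag: bool):
        # Firmware intent no longer matters: there is no signal to read.
        return self.mic.sample()


soft = SoftwareMute(Microphone())
soft.press()
hard = HardwareMute(Microphone())
hard.press()
```

With both LEDs red, `soft.firmware_capture(honors_flag=False)` still returns audio and `hard.firmware_capture(honors_flag=False)` returns nothing. The user sees the same red light in both cases.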

The civic version of this pattern is harder to fix because the buttons are everywhere and the disconnects are usually unavailable. Cookie consent banners on websites are the most visible example. A banner asks the user to accept or reject tracking. The user clicks Reject All. On many sites, tracking continues anyway: via cookies categorized as essential, via fingerprinting, via the user’s IP address, and via third-party scripts that load regardless and do not respect the user’s choice. The Reject button is dishonest in the way the mute button on the early Echo was dishonest. The press is recorded while the choice it expresses is ignored.
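A Reject button can be checked from the outside by looking at what the page still contacts after the click. The Python sketch below assumes you have already exported the list of request URLs from a browser’s network panel; the function name and the example hosts are hypothetical. A third-party request is not proof of tracking on its own, so the output is a list of leads to inspect, not a verdict.

```python
from urllib.parse import urlparse


def third_party_hosts(page_url: str, request_urls: list[str]) -> list[str]:
    """List hosts contacted by the page that are not the site or its subdomains."""
    first_party = urlparse(page_url).hostname
    hosts = set()
    for url in request_urls:
        host = urlparse(url).hostname
        if host and host != first_party and not host.endswith("." + first_party):
            hosts.add(host)
    return sorted(hosts)


# Requests observed after clicking Reject All (illustrative data, not a real capture).
observed_after_reject = [
    "https://shop.example/cart.js",
    "https://cdn.shop.example/style.css",
    "https://tracker.example/pixel.gif?uid=abc123",
    "https://ads.example/fp.js",
]
remaining = third_party_hosts("https://shop.example/", observed_after_reject)
```

Here `remaining` holds the two outside hosts that were still contacted after the refusal. Comparing that list before and after the click is a rough test of whether the button changed anything.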

The fix has to operate at a layer below the button. Hardware microphone disconnects on smart speakers. Browser-level tracking blockers that operate independently of cookie banners. Mobile operating system permissions that block apps from accessing data at the system level, even when the apps claim to need it. State and federal privacy laws that make the covert work illegal regardless of what the user pressed on the front-end interface. Each of these solutions accepts that the button is not the location where the user’s choice will be honored. The user’s choice has to be enforced upstream of the button, or downstream of the data the button covers for, or beside the button entirely.

The three buttons together describe a small civic taxonomy. Among the three, the placebo is the most familiar, the honest is the most political, and the dishonest is the most dangerous because it looks like one of the other two while doing something neither of the other two does, and the user pressing it cannot tell which.

#amazon #dishonest #echo #facebook #hardware #honest #honestButton #like #microphone #placebo #placeboButton #ringVideo #selfCheckout #tech #thermostat