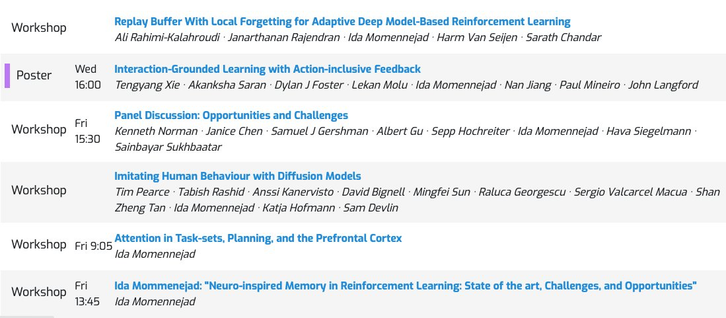

We're so excited to be part of this conversation and to have our work highlighted at #NeurIPS22.

We look forward to seeing how continuing collaborations create positive change! #MozFest #TAIWG

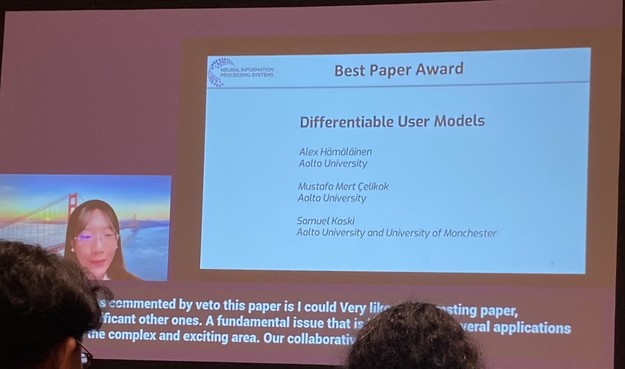

Thank you for your great work, @jen_gineered! ⭐️ https://twitter.com/jen_gineered/status/1599093957224374272

Original tweet: https://twitter.com/mozillafestival/status/1622915177514426368