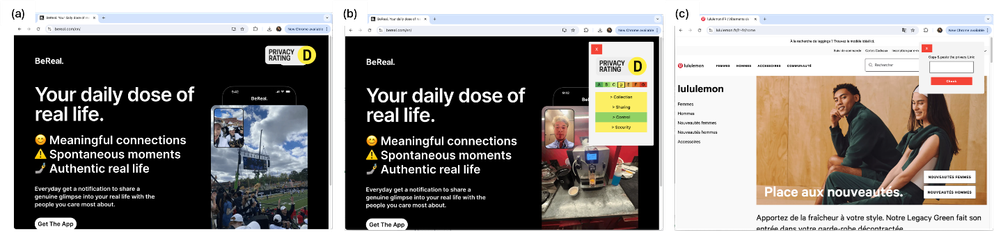

🔍 Do privacy labels actually change how people behave online?

Our new paper, “Visual Privacy: The Impact of Privacy Labels on Privacy Behaviors Online,” published in ACM Transactions on Social Computing, investigates exactly this question and provides both a scalable technical solution and empirical evidence.

👉 Full paper: https://dl.acm.org/doi/abs/10.1145/3804460

#Privacy #DataPrivacy #HCI #PrivacyPolicies #PrivacyProtection