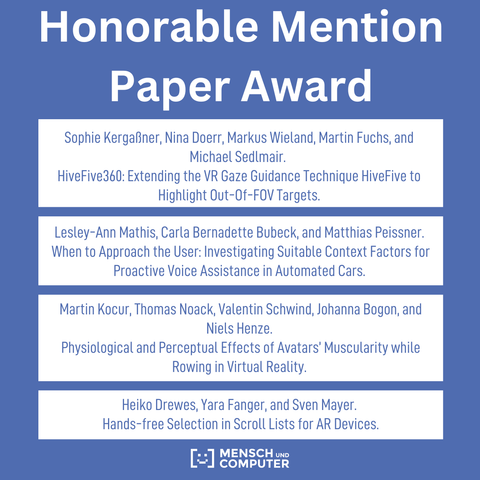

🇬🇧 Congratulations to Sophie Kergaßner, Nina Doerr, Markus Wieland, Martin Fuchs, Michael Sedlmair, Lesley-Ann Mathis, Carla Bernadette Bubeck, Matthias Peissner, Martin Kocur, Thomas Noack, Valentin Schwind, Johanna Bogon, Niels Henze, Heiko Drewes, Yara Fanger, and Sven Mayer! 🎉 Your paper received an Honorable Mention Paper Award for outstanding work!

https://muc2024.mensch-und-computer.de/en/awards/

#muc2024 #awards #fullpaper