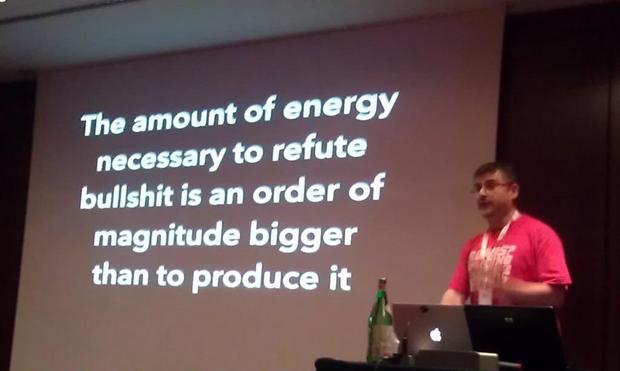

“The amount of energy needed to refute bullshit is an order of magnitude bigger than to produce it.” – Alberto Brandolini, 2013

The #infosec debt we're running up right now is a bit worrying.

@arti --

You're the prime example of this meme. Thank you for outing yourself.

Google, "Debunk Vaccines rewrite your DNA"

It is amazing that you posted this without checking it first.

#llm #ai #enshittification #cost #brandolinislaw

The easy LLM-ification of written communication is becoming so pervasive and is so harmful that eventually everyone who deals with written communication will need to build protection to guard against LLM-generated content.

The cost of filtering this toxic sludge is at least an order of magnitude higher than the cost of implementing the LLM solutions causing it. (1/2)

When alt-right says that “the left can’t meme”, this is what they mean.

Fusing around a crystallized idea that requires time and energy of #BrandolinisLaw is a superpower.

Sampath Pāṇini ® (@[email protected])

“a theory of how #totalitarianism might naturally triumph. The basic idea is that when #information is costly, #liberal #democracy wins because it gathers more and better information than closed societies, but when information is cheap, negative-sum information tournaments sap an increasingly large portion of a liberal #society’s resources” https://open.substack.com/pub/noahpinion/p/how-liberal-democracy-might-lose?r=4hxgy&utm_medium=ios

The universal asymmetry of bunk.

"...the effort to unpack it, explain it, draw the lines connecting the dots, and distinguish between what is real and not is an exhaustive, time-consuming, resource-intensive process, which is partly why these efforts are so effective and difficult to counter."

I had a fun time yesterday playing with customized prompts in Ollama. These basically wrap the prompts you give to your local LLM with a standing prompt that conditions all responses. So, if you want your LLM to respond to queries briefly, you can specify that. Or if you want it to respond to every prompt like Foghorn Leghorn, hey. You can also adjust the “temperature” of the model: The spicier you make it, the weirder things get.
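For anyone curious what that wrapping looks like in practice, here is a minimal sketch. The post doesn't say how the customization was actually done, so this assumes Ollama's local HTTP API on its default port, with the standing prompt sent as `system` and the temperature under `options`. The helper names `build_request` and `ask` are made up for illustration.

```python
import json
import urllib.request

# Assumption: a local Ollama server listening on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model, standing_prompt, user_prompt, temperature):
    """Build the JSON payload for a single, non-streaming generation.

    standing_prompt is the instruction that conditions every reply;
    temperature controls how random the sampling is.
    """
    return {
        "model": model,
        "system": standing_prompt,
        "prompt": user_prompt,
        "stream": False,
        "options": {"temperature": temperature},
    }


def ask(payload, url=OLLAMA_URL):
    """Send the payload to the server and return the generated text.

    This only works if an Ollama server is running and the model
    named in the payload has already been pulled.
    """
    data = json.dumps(payload).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]
```

With a server running, something like `ask(build_request("llama2-uncensored", "Answer in one short sentence.", "Why is the sky blue?", 0.8))` would return the model's reply. Changing only the standing prompt or the temperature is enough to change the character of every response.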

First I tried to make a conspiracy theorist LLM, and it didn’t really work. I used llama2-uncensored and it was relentlessly boring. It kind of “both sides”ed everything and was studiously cautious about taking a position on anything. (Part of the problem may have been that I had the temperature set at 0.8 because I thought the scale was 0-1.)

So I moved on to a different uncensored model, dolphin-mixtral, and oh boy. This time, I set the temperature to 2 and we were off to the races:

OK buddy, go home, you’re drunk.

Then I cranked the temperature up to 3 and made a mean one…

… and a kind of horny/rapey one that I won’t post here. It was actually very easy, in a couple of minutes, to customize an open-source LLM to respond however you want. I could have made a racist one. I could have made one that endlessly argues about politics. I could have made one that raises concerns about Nazis infiltrating the Ukrainian armed forces, or Joe Biden’s age, or the national debt.

Basically, there’s a lot of easy ways to customize an LLM to make everyone’s day worse, but I don’t think I could have customized one to make anything better. There’s an asymmetry built into the technology. A bot that says nice things isn’t going to have the same impact as a bot that says mean things, or wrong things.

It got me thinking about Brandolini’s law, the asymmetry of bullshit, “The amount of energy needed to refute bullshit is an order of magnitude bigger than that needed to produce it,” which you can generalize even further to “it’s easier to break things than to make things.”

As the technology stands right now, uncensored open-source LLMs can be very good at breaking things: trust, self-worth, fact-based reality, sense of safety. It would be trivial to inject LLM-augmented bots into social spaces and corrupt them with hate, conflict, racism, and disinformation. It’s a much bigger lift to use an LLM to make something.

The cliche is that a technology is only as good as its user, but I’m having a hard time imagining a good LLM user who can do as much good as a bad LLM user can do bad.

With AI tools unleashed, #BrandolinisLaw is harshening. We face a new torrent of climate bunk.

So, we’re working on the holy grail of fact-checking - automated detection and debunking of climate misinformation - and we need your help!

Specifically, we’re using generative AI to automatically create debunkings in response to misinformation posts. We need the critical thinking of real humans to grade "our" output according to a rubric.

Interested in volunteering? Details:

https://skepticalscience.com/volunteer-opportunity-GLLM-evaluation.shtml?utm-source=mastodon&utm-campaign=socialnetworks&utm-term=sks

Volunteer activity: Evaluate automated climate misinformation debunkings
