If Elon Musk’s Neuralink brain-implant venture succeeds in its effort to create next-generation brain implants for artificial vision, the devices could bring about a breakthrough for those with impaired sight — but pro

https://cosmiclog.com/2024/07/29/elon-musks-views-on-artificial-vision-get-a-reality-check/

#GeekWire #ArtificialVision #Brain #ElonMusk #Health #Medicine #Neuralink #Neuroscience #Science #UniversityOfWashington

Day 20240331, Started the sowing of the transported genetic material

Day 20240407, first vegetable sprouts start appearing

Day 20240410, started transplanting the first set of plants into bigger pots.

Day 20240515, the plants seem to adapt perfectly to the local meteorological conditions and to the continued sniffing of the local fauna - 🐈 🤪

Day 20240613, the vegetable specimens start the blooming process

...

#balconygardens #artificialvision

AI’s Potential to Transform the Visual Experience for the Blind #ArtificialVision

Hashtags: #chatGPT #AIforBlindness #EnhancingSight Summary: Envision, a smartphone app and Google Glass accessory, is integrating OpenAI's GPT-4 language model to provide visually impaired users with more detailed descriptions of their surroundings. The app, which originally launched in 2018 to read text in photos, has now incorporated GPT-4 to offer conversational responses and answer…

#DCNN #ArtificialVision #ComputationalObjectVision

https://doi.org/10.1371/journal.pcbi.1011086

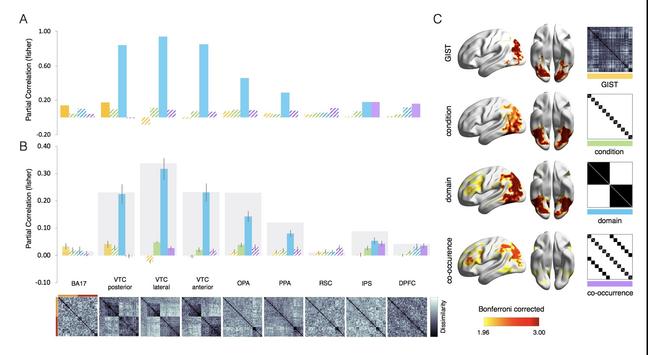

The representational hierarchy in human and artificial visual systems in the presence of object-scene regularities

Author summary: Computational object vision represents the new frontier of brain models, but do current artificial visual systems known as deep convolutional neural networks (DCNNs) represent the world as humans do? Our results reveal that DCNNs are able to capture important representational aspects of human vision both at the behavioral and neural levels. At the behavioral level, DCNNs are able to pick up contextual regularities of objects and scenes, thus mimicking human high-level semantic knowledge such as learning that a polar bear “lives” in ice landscapes. At the neural representational level, DCNNs capture the representational hierarchy observed in the visual cortex all the way up to frontoparietal areas. Despite these remarkable correspondences, the information processing strategies implemented differ. In order to aim for future DCNNs to perceive the world as humans do, we suggest the need to consider aspects of training and tasks that more closely match the wide computational role of human object vision over and above object recognition.