Observer | The Legal and Ethical Minefield of A.I.-Driven Employee Surveillance by Kayvon Touran and Ben Dattner

AI-generated summary. Read the full article for complete information.

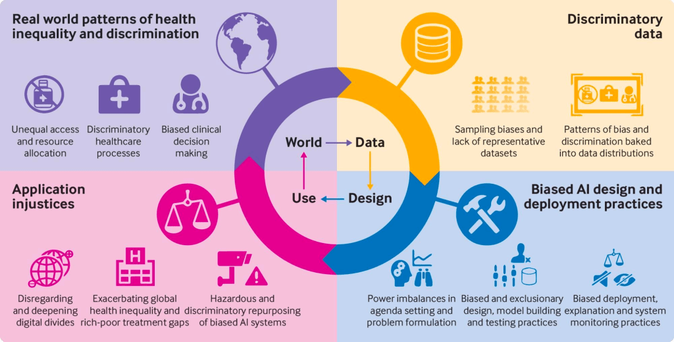

The article warns that AI systems originally marketed as objective performance tools are now being used to continuously monitor, profile, and even manipulate employees. The result is a new “surveillance wages” dynamic, in which pay and career decisions are driven by hidden algorithms that analyze keystrokes, email sentiment, location data, and even personal financial or health information. This deep-level monitoring can amplify existing biases, erode privacy, and undermine legal protections such as the Americans with Disabilities Act (ADA), Title VII of the Civil Rights Act, and the National Labor Relations Act (NLRA), because current employment laws were designed for human decision-makers, not opaque algorithmic systems. The authors detail how companies, from tech giants like Microsoft with its Viva Insights platform to warehouse operators like Amazon, are deploying tools that infer productivity, psychological states, and financial vulnerability, and then use those inferences to shape compensation, promotions, and even employee behavior without consent. To curb these risks, they advocate transparency, employee consent, independent validation of AI models, human oversight of critical decisions, and proactive engagement with emerging regulations that aim to limit algorithmic wage-setting and protect workers from covert manipulation.

Read more: https://observer.com/2026/05/legal-ethical-risks-ai-employee-profiling-workplace-monitoring/

#artificialintelligence #surveillancewages #employeesurveillance #algorithmicbias #privacyrights

Zal.ai CEO Kayvon Touran and organizational leadership expert Ben Dattner examine the rapidly expanding use of A.I. in employee monitoring, performance evaluation and compensation. They argue that existing laws and workplace norms are dangerously unprepared for a future defined by surveillance, behavioral profiling and psychological manipulation.