https://winbuzzer.com/2026/06/03/microsoft-assert-turns-ai-agent-policies-into-tests-xcxwbn/

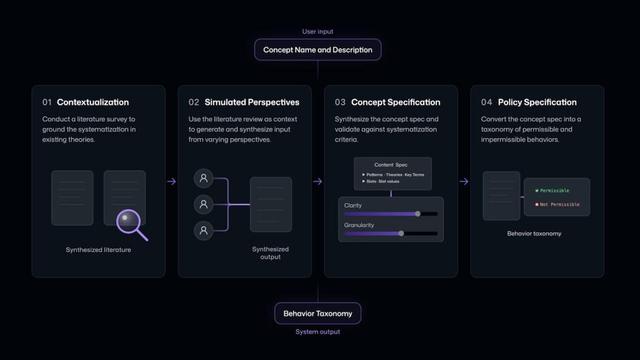

Microsoft has released ASSERT, an open-source framework that turns AI-agent policies into executable tests so developers can catch failures before deployment.

#AI #Microsoft #AIAgents #AgenticAI #AISafety #AIDevelopment #OpenSourceAI #Developers #MicrosoftAI