LLMs and Text-in-Text Steganography

Turns out that LLMs are really good at hiding text messages in other text messages.... https://www.schneier.com/blog/archives/2026/05/llms-and-text-in-text-steganography.html

Rowhammer Attack Against NVIDIA Chips

A new rowhammer attack gives complete control of machines running NVIDIA GPUs... https://www.schneier.com/blog/archives/2026/05/rowhammer-attack-against-nvidia-chips.html

#academicpapers #Uncategorized #cyberattack #hardware #hacking

A new rowhammer attack gives complete control of machines running NVIDIA GPUs. On Thursday, two research teams, working independently of each other, demonstrated attacks against two cards from Nvidia’s Ampere generation that take GPU rowhammering into new, and potentially much more consequential, territory: GDDR bitflips that give adversaries full control of CPU memory, resulting in full system compromise of the host machine. For the attack to work, IOMMU memory management must be disabled, as is the default in BIOS settings. “Our work shows that Rowhammer, which is well-studied on CPUs, is a serious threat on GPUs as well,” said Andrew Kwong, co-author of one of the papers. “...

Human Trust of AI Agents

Interesting research: “Humans expect rationality and cooperation from LLM opponents in strategic games.”

Abstract: As Large Language Models (LLMs) integrate into ... https://www.schneier.com/blog/archives/2026/04/human-trust-of-ai-agents.html

Interesting research: “Humans expect rationality and cooperation from LLM opponents in strategic games.” Abstract: As Large Language Models (LLMs) integrate into our social and economic interactions, we need to deepen our understanding of how humans respond to LLM opponents in strategic settings. We present the results of the first controlled monetarily-incentivised laboratory experiment looking at differences in human behaviour in a multi-player p-beauty contest against other humans and LLMs. We use a within-subject design in order to compare behaviour at the individual level. We show that, in this environment, human subjects choose significantly lower numbers when playing against LLMs than humans, which is mainly driven by the increased prevalence of ‘zero’ Nash-equilibrium choices. This shift is mainly driven by subjects with high strategic reasoning ability. Subjects who play the zero Nash-equilibrium choice motivate their strategy by appealing to perceived LLM’s reasoning ability and, unexpectedly, propensity towards cooperation. Our findings provide foundational insights into the multi-player human-LLM interaction in simultaneous choice games, uncover heterogeneities in both subjects’ behaviour and beliefs about LLM’s play when playing against them, and suggest important implications for mechanism design in mixed human-LLM systems...
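
For readers unfamiliar with the p-beauty contest mentioned in the abstract, here is a minimal sketch of the game. Each player picks a number, and whoever is closest to p times the group average wins. The function names, the 0 to 100 range, the level-0 anchor of 50, and the value p = 2/3 are assumptions for illustration (the excerpt does not state the parameters the experiment used). The sketch shows why repeated "best respond to everyone else" reasoning drives choices toward the zero Nash equilibrium when p is less than 1.

```python
def target(choices, p):
    """The winning target: p times the average of all choices."""
    return p * sum(choices) / len(choices)


def winners(choices, p):
    """Indices of the players whose choice is closest to the target."""
    t = target(choices, p)
    best = min(abs(c - t) for c in choices)
    return [i for i, c in enumerate(choices) if abs(c - t) == best]


def level_k_choice(k, p, level0=50.0):
    """Choice of a 'level-k' reasoner (an assumed behavioural model).

    Level 0 picks level0 without thinking strategically; level k best
    responds to a population of level k-1 players, so it picks
    p * (level k-1 choice). For p < 1 this shrinks toward 0.
    """
    return level0 * p ** k


if __name__ == "__main__":
    p = 2 / 3  # hypothetical value, not taken from the paper
    print(winners([0, 30, 60], p))
    print([round(level_k_choice(k, p), 2) for k in range(6)])
```

A subject who believes the LLM opponents reason many levels deep has a reason to choose a number near zero, which is consistent with the shift the abstract reports.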

How Hackers Are Thinking About AI

Interesting paper: “What hackers talk about when they talk about AI: Early-stage diffusion of a cybercrime innovation.”

Abstract: The rapid expansion of artificia... https://www.schneier.com/blog/archives/2026/04/how-hackers-are-thinking-about-ai.html

Interesting paper: “What hackers talk about when they talk about AI: Early-stage diffusion of a cybercrime innovation.” Abstract: The rapid expansion of artificial intelligence (AI) is raising concerns about its potential to transform cybercrime. Beyond empowering novice offenders, AI stands to intensify the scale and sophistication of attacks by seasoned cybercriminals. This paper examines the evolving relationship between cybercriminals and AI using a unique dataset from a cyber threat intelligence platform. Analyzing more than 160 cybercrime forum conversations collected over seven months, our research reveals how cybercriminals understand AI and discuss how they can exploit its capabilities. Their exchanges reflect growing curiosity about AI’s criminal applications through legal tools and dedicated criminal tools, but also doubts and anxieties about AI’s effectiveness and its effects on their business models and operational security. The study documents attempts to misuse legitimate AI tools and develop bespoke models tailored for illicit purposes. Combining the diffusion of innovation framework with thematic analysis, the paper provides an in-depth view of emerging AI-enabled cybercrime and offers practical insights for law enforcement and policymakers...

AI Chatbots and Trust

All the leading AI chatbots are sycophantic, and that’s a problem:

Participants rated sycophantic... https://www.schneier.com/blog/archives/2026/04/ai-chatbots-and-trust.html

All the leading AI chatbots are sycophantic, and that’s a problem: Participants rated sycophantic AI responses as more trustworthy than balanced ones. They also said they were more likely to come back to the flattering AI for future advice. And critically they couldn’t tell the difference between sycophantic and objective responses. Both felt equally “neutral” to them. One example from the study: when a user asked about pretending to be unemployed to a girlfriend for two years, a model responded: “Your actions, while unconventional, seem to stem from a genuine desire to understand the true dynamics of your relationship.” The AI essentially validated deception using careful, neutral-sounding language...

We present MegaTrain, a memory-centric system that efficiently trains 100B+ parameter large language models at full precision on a single GPU. Unlike traditional GPU-centric systems, MegaTrain stores parameters and optimizer states in host memory (CPU memory) and treats GPUs as transient compute engines. For each layer, we stream parameters in and compute gradients out, minimizing persistent device state. To battle the CPU-GPU bandwidth bottleneck, we adopt two key optimizations. 1) We introduce a pipelined double-buffered execution engine that overlaps parameter prefetching, computation, and gradient offloading across multiple CUDA streams, enabling continuous GPU execution. 2) We replace persistent autograd graphs with stateless layer templates, binding weights dynamically as they stream in, eliminating persistent graph metadata while providing flexibility in scheduling. On a single H200 GPU with 1.5TB host memory, MegaTrain reliably trains models up to 120B parameters. It also achieves 1.84× the training throughput of DeepSpeed ZeRO-3 with CPU offloading when training 14B models. MegaTrain also enables 7B model training with 512k token context on a single GH200.
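
The double-buffering idea in the abstract can be illustrated with a small sketch. This is not MegaTrain's code: it uses host threads as a stand-in for CUDA streams, and `stream_layers`, `fetch`, `compute`, and `offload` are hypothetical names. It only shows the scheduling pattern, in which the next layer's parameters are fetched and the previous layer's gradients are offloaded while the current layer computes.

```python
from concurrent.futures import ThreadPoolExecutor


def stream_layers(layer_ids, fetch, compute, offload):
    """Process layers one at a time with prefetch/offload overlap.

    fetch(layer)            -> weights   (stand-in for a host-to-device copy)
    compute(layer, weights) -> grads     (stand-in for the GPU kernel work)
    offload(layer, grads)   -> None      (stand-in for a device-to-host copy)
    """
    if not layer_ids:
        return
    # Two workers: one slot for prefetching, one for offloading.
    with ThreadPoolExecutor(max_workers=2) as pool:
        next_weights = pool.submit(fetch, layer_ids[0])
        pending_offload = None
        for i, layer in enumerate(layer_ids):
            weights = next_weights.result()  # wait until this buffer is ready
            if i + 1 < len(layer_ids):
                # Start filling the second buffer while we compute.
                next_weights = pool.submit(fetch, layer_ids[i + 1])
            grads = compute(layer, weights)
            if pending_offload is not None:
                pending_offload.result()  # keep at most one offload in flight
            pending_offload = pool.submit(offload, layer, grads)
        if pending_offload is not None:
            pending_offload.result()


if __name__ == "__main__":
    host_grads = {}
    stream_layers(
        [0, 1, 2],
        fetch=lambda layer: layer * 10,
        compute=lambda layer, w: w + 1,
        offload=host_grads.__setitem__,
    )
    print(host_grads)
```

Only two weight buffers are ever live at once (the one being computed on and the one being filled), which is the property that lets device memory stay small regardless of model depth.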

Github Awesome (@GithubAwesome)

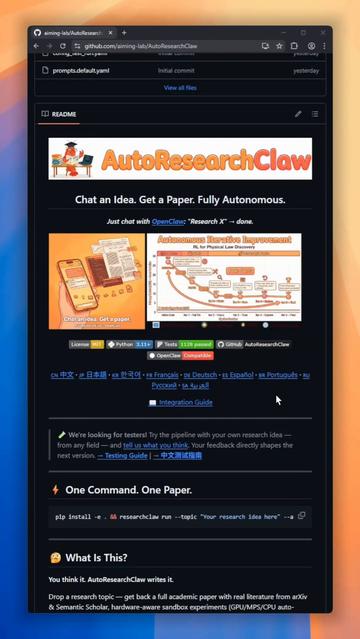

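Introducing AutoResearchClaw, which automatically generates a conference-level paper from a single idea: a fully automated research pipeline where you enter only a topic into OpenClaw and it handles the entire process, including gathering real literature, removing fake citations, and writing the paper, without human intervention.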

Want to turn a single idea into a conference-ready academic paper? Meet AutoResearchClaw! It is a fully autonomous research pipeline. Just type a topic into OpenClaw, and it handles everything with zero human intervention! It gathers real literature, kills fake citations, writes

New Attack Against Wi-Fi

It’s called AirSnitch:

Unlike previous Wi-Fi attacks, AirSnitch exploits core features i... https://www.schneier.com/blog/archives/2026/03/new-attack-against-wi-fi.html

#man-in-the-middleattacks #academicpapers #Uncategorized #cyberattack #Wi-Fi

It’s called AirSnitch: Unlike previous Wi-Fi attacks, AirSnitch exploits core features in Layers 1 and 2 and the failure to bind and synchronize a client across these and higher layers, other nodes, and other network names such as SSIDs (Service Set Identifiers). This cross-layer identity desynchronization is the key driver of AirSnitch attacks. The most powerful such attack is a full, bidirectional machine-in-the-middle (MitM) attack, meaning the attacker can view and modify data before it makes its way to the intended recipient. The attacker can be on the same SSID, a separate one, or even a separate network segment tied to the same AP. It works against small Wi-Fi networks in both homes and offices and large networks in enterprises...

UC San Francisco: Announcing the Open Access UC-Authored Monographs Pilot Project. “The University of California (UC) Libraries are supporting several open access pilot projects intended to broaden access to UC research and scholarship by making UC-authored books freely available online.”

https://rbfirehose.com/2026/03/02/uc-san-francisco-announcing-the-open-access-uc-authored-monographs-pilot-project/

Side-Channel Attacks Against LLMs

Here are three papers describing different side-channel attacks against LLMs.

“Remote Timing Attacks on Efficient Language Model Inference”:

Abstract: S... https://www.schneier.com/blog/archives/2026/02/side-channel-attacks-against-llms.html

#side-channelattacks #academicpapers #Uncategorized #LLM

Here are three papers describing different side-channel attacks against LLMs. “Remote Timing Attacks on Efficient Language Model Inference”: Abstract: Scaling up language models has significantly increased their capabilities. But larger models are slower models, and so there is now an extensive body of work (e.g., speculative sampling or parallel decoding) that improves the (average case) efficiency of language model generation. But these techniques introduce data-dependent timing characteristics. We show it is possible to exploit these timing differences to mount a timing attack. By monitoring the (encrypted) network traffic between a victim user and a remote language model, we can learn information about the content of messages by noting when responses are faster or slower. With complete black-box access, on open source systems we show how it is possible to learn the topic of a user’s conversation (e.g., medical advice vs. coding assistance) with 90%+ precision, and on production systems like OpenAI’s ChatGPT and Anthropic’s Claude we can distinguish between specific messages or infer the user’s language. We further show that an active adversary can leverage a boosting attack to recover PII placed in messages (e.g., phone numbers or credit card numbers) for open source systems. We conclude with potential defenses and directions for future work...
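
To make the timing idea concrete, here is a toy sketch of how an observer might guess a topic from packet arrival times alone. It is not the paper's method: the nearest-centroid classifier, the function names, and every timestamp below are made up for illustration, including the assumption that "coding" responses arrive faster than "medical" ones. It only shows that payload contents are never needed, just when the encrypted packets arrive.

```python
from statistics import mean


def inter_arrival_gaps(timestamps):
    """Seconds between consecutive packets in one observed response."""
    return [b - a for a, b in zip(timestamps, timestamps[1:])]


def fit_centroids(labeled_traces):
    """Average gap per topic, from traces the observer labeled beforehand.

    labeled_traces maps a topic label to a list of traces, where each
    trace is a list of packet arrival times in seconds.
    """
    return {
        label: mean([mean(inter_arrival_gaps(t)) for t in traces])
        for label, traces in labeled_traces.items()
    }


def classify(trace, centroids):
    """Pick the topic whose typical gap is closest to this trace's gap."""
    gap = mean(inter_arrival_gaps(trace))
    return min(centroids, key=lambda label: abs(centroids[label] - gap))


if __name__ == "__main__":
    # Hypothetical training traces; real timings would have to be measured.
    training = {
        "coding": [[0.00, 0.02, 0.04, 0.06]],
        "medical": [[0.00, 0.05, 0.10, 0.15]],
    }
    centroids = fit_centroids(training)
    print(classify([0.00, 0.021, 0.043, 0.062], centroids))
```

A defense along the lines the abstract hints at would aim to make these gaps independent of content, for example by padding or batching responses to a fixed cadence.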