Just Enough Chimera Linux ☯ Daniel Wayne Armstrong

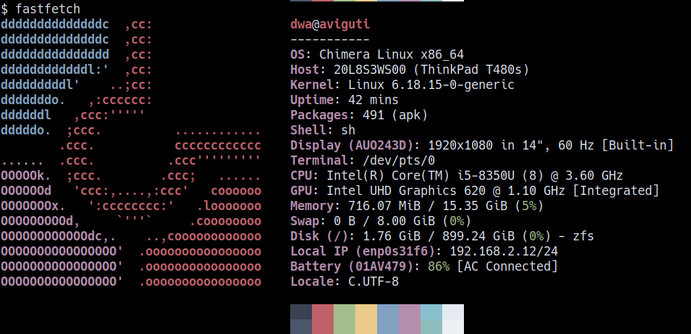

Last edited on 2026-04-03 • Tagged under #chimera #linux #zfs #encrypt #zfsbootmenu

Chimera Linux is a delightful community-driven Linux distribution built from scratch that does things differently: musl instead of the typical glibc as the C library, dinit instead of systemd for system init, and a userland derived from FreeBSD…

https://viralpique.com/just-enough-chimera-linux-%e2%98%af-daniel-wayne-armstrong/