@Jacob @pluralistic @HalvarFlake

HT for sharing, enjoyed listening:

• Comparison of current AI to #gofAI and beginning #ML algos & tools

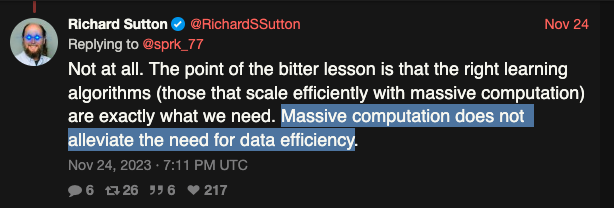

• Cool #RichSutton #TheBitterLessonInML remark that generic algos, w/ use of larger real datasets for training models, will beat expert algos & smaller datasets.

• Remark on synthetic data for training: employ with caution, only helpful in some cases.

• Cool Q&A: long answer, but by now it is too late to keep free internet datasets uncontaminated by synthetic BS.