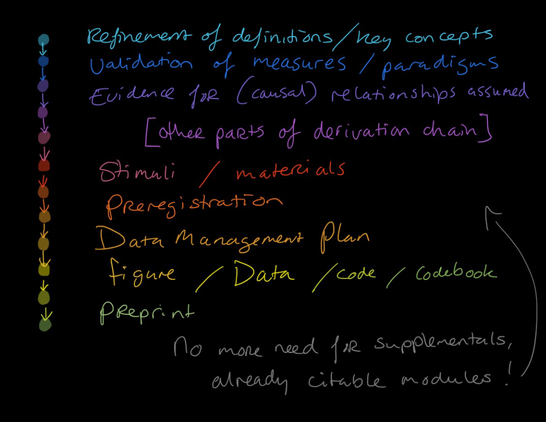

Find slides here if you have FOMO: https://www.researchequals.com/modules/kjp9-g898 Sketchnote shows my considerations for which parts of research could be a module... how about showing the derivation chain research journey on #ResearchEquals @annescheel? #openscience #modularpublishing #OpenScienceCommunity

Publishing research output continuously : The case of modular publishing (PROCess)

In this interactive workshop, I explore modular publishing as a new and alternative publishing format. Moreover, I will discuss with participants a modular approach to research, examine some benefits it can offer, and learn how to put it into practice. This are the slides for this interactive workshop designed to progress participants' knowledge and skills in academic research and publishing.