LLMs Process 100x Their Context Window: MIT's Recursive Language Models

MIT CSAIL's Recursive Language Models (RLMs) let an LLM process inputs up to 100 times larger than its context window. This inference strategy treats the prompt as a variable in an external environment and uses recursive calls to handle more than 10 million tokens efficiently. https://arxiv.org/abs/2512.24601

Recursive Language Models

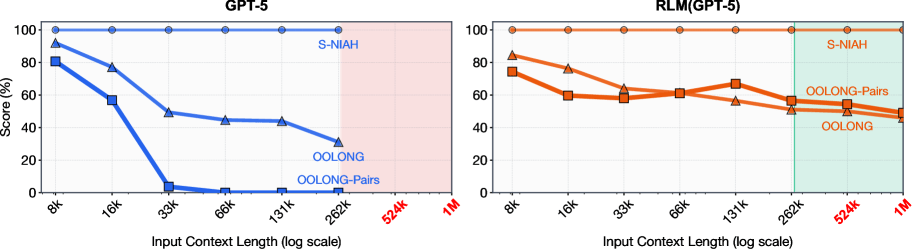

We study allowing large language models (LLMs) to process arbitrarily long prompts through the lens of inference-time scaling. We propose Recursive Language Models (RLMs), a general inference paradigm that treats long prompts as part of an external environment and allows the LLM to programmatically examine, decompose, and recursively call itself over snippets of the prompt. We find that RLMs can successfully process inputs up to two orders of magnitude beyond model context windows and, even for shorter prompts, dramatically outperform the quality of vanilla frontier LLMs and common long-context scaffolds across four diverse long-context tasks while having comparable cost. At a small scale, we post-train the first natively recursive language model. Our model, RLM-Qwen3-8B, outperforms the underlying Qwen3-8B model by $28.3\%$ on average and even approaches the quality of vanilla GPT-5 on three long-context tasks. Code is available at https://github.com/alexzhang13/rlm.
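To make the recursive idea in the abstract concrete, here is a minimal sketch, not the authors' implementation (that lives in the linked repository). It assumes a fixed divide-and-conquer split instead of letting the model write its own decomposition code. `recursive_answer`, `toy_llm`, and `max_chars` are hypothetical names introduced for illustration, and `toy_llm` is a regex stand-in for a real model call.

```python
import re
from typing import Callable


def toy_llm(prompt: str) -> str:
    """Stand-in for a model call (assumption): returns any MAGIC-<digits> codes it sees."""
    found = re.findall(r"MAGIC-\d+", prompt)
    return " ".join(dict.fromkeys(found))  # de-duplicate, keep first-seen order


def recursive_answer(
    query: str,
    context: str,
    llm: Callable[[str], str],
    max_chars: int = 2000,
) -> str:
    """Answer `query` over `context`, recursing when the context exceeds `max_chars`.

    The long context is held as an ordinary variable; only snippets of it are
    ever placed in a single model prompt.
    """
    if len(context) <= max_chars:
        # Base case: the snippet fits in one call.
        return llm(f"Question: {query}\nContext: {context}")

    # Split near the middle, preferring a newline so tokens are not cut in half.
    mid = len(context) // 2
    cut = context.rfind("\n", 0, mid + 1)
    if cut <= 0:
        cut = mid  # no usable newline: fall back to a hard split

    left = recursive_answer(query, context[:cut], llm, max_chars)
    right = recursive_answer(query, context[cut:], llm, max_chars)

    # Combine step: assumes the partial answers are short enough for one call.
    return llm(f"Question: {query}\nContext: {left}\n{right}")


# Usage: hide one "needle" line in a document far longer than max_chars.
lines = [f"filler line {i}" for i in range(5000)]
lines[3210] = "the code is MAGIC-4242"
haystack = "\n".join(lines)

answer = recursive_answer("What is the magic code?", haystack, toy_llm)
print(answer)
```

The design choice worth noting is that the full input is never passed to the model at once: each call sees at most `max_chars` characters of context plus the question. In the paper's framing the model itself decides how to examine and decompose the prompt; the fixed halving here is only the simplest possible stand-in for that.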

https://alexzhang13.github.io/blog/2025/rlm/