@timhutton Hey Tim! I built a 2026 fork of your Squirm3 that keeps the chemistry rules unchanged but modernizes the substrate: WebGPU rendering, 5000×3000 arena, water as continuum heterogeneous-Brownian regions, seedable RNG, save/load.

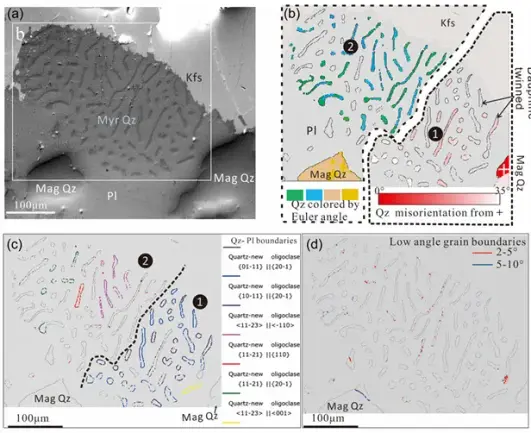

In a single seeded run I observed a closed a-a-a membrane loop spontaneously form around a cluster of intact protocells, then accumulate more cells over time:

1. iter 117,632 - outer loop, 1 inner protocell

2. iter 140,920 - 69-atom outer loop, 2 inner protocells

3. iter 157,068 - 78-atom outer loop, 4 inner protocells

Verified from the bond graph, not just visually, I also made an analysis script in the repo that walks the graph and runs point-in-polygon containment. The outer ring is ~3x the size of a typical single-cell membrane in the same run.

Your polymerization rule is scale-agnostic, so this is consistent with your chemistry, but I don't think meta-membrane formation appears in your 2002 or 2007 papers. Did you ever observe it in your own runs?

Three save files + the script are in the repo so it's reproducible. Load any save in the demo and you boot directly into that state.

Demo: https://davidortsac.github.io/primordium/

Saves + script: https://github.com/DavidOrtsac/primordium/tree/main/samples

Repo: https://github.com/DavidOrtsac/primordium

Big fan of your work!

#CellularAutomata #ArtificialLife #ALife #ReactionDiffusion #ArtificialChemistry