Apple Workshop Highlights Privacy in Artificial Intelligence

Apple recently hosted a workshop on privacy in connection with machine learning. The company is now sharing recordings and insights that

https://www.apfeltalk.de/magazin/news/apple-workshop-hebt-datenschutz-in-der-kuenstlichen-intelligenz-hervor/

Apple shares insights from a workshop on privacy and AI, once again emphasizing its own focus on privacy

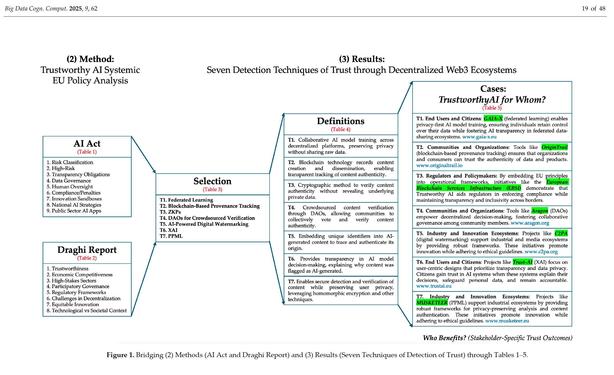

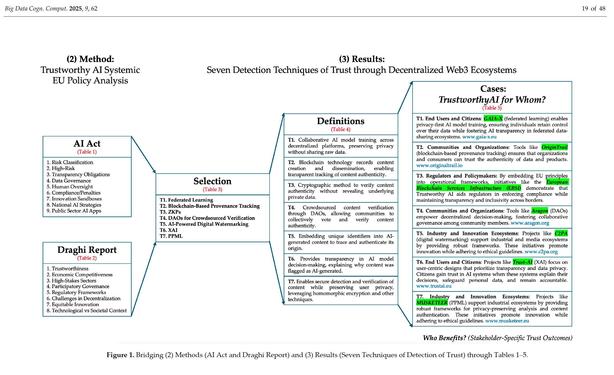

Trustworthy AI for Whom? GenAI Detection Techniques of Trust Through Decentralized Web3 Ecosystems

As generative AI (GenAI) technologies proliferate, ensuring trust and transparency in digital ecosystems becomes increasingly critical, particularly within democratic frameworks. This article examines decentralized Web3 mechanisms—blockchain, decentralized autonomous organizations (DAOs), and data cooperatives—as foundational tools for enhancing trust in GenAI. These mechanisms are analyzed within the framework of the EU’s AI Act and the Draghi Report, focusing on their potential to support content authenticity, community-driven verification, and data sovereignty. Based on a systematic policy analysis, this article proposes a multi-layered framework to mitigate the risks of AI-generated misinformation. Specifically, as a result of this analysis, it identifies and evaluates seven detection techniques of trust stemming from the action research conducted in the Horizon Europe Lighthouse project called ENFIELD: (i) federated learning for decentralized AI detection, (ii) blockchain-based provenance tracking, (iii) zero-knowledge proofs for content authentication, (iv) DAOs for crowdsourced verification, (v) AI-powered digital watermarking, (vi) explainable AI (XAI) for content detection, and (vii) privacy-preserving machine learning (PPML). By leveraging these approaches, the framework strengthens AI governance through peer-to-peer (P2P) structures while addressing the socio-political challenges of AI-driven misinformation. Ultimately, this research contributes to the development of resilient democratic systems in an era of increasing technopolitical polarization.
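To make technique (ii), blockchain-based provenance tracking, more concrete, here is a minimal sketch of its core idea: an append-only log where each entry stores a SHA-256 fingerprint of a piece of content and the hash of the previous entry, so any later alteration breaks the chain. This is not the ENFIELD implementation. The class `ProvenanceLedger` and its methods are hypothetical, and the sketch deliberately omits what makes a real blockchain decentralized (peer-to-peer replication and consensus); it shows only the tamper-evident hash chain.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class ProvenanceLedger:
    """Hypothetical append-only, hash-chained log of content fingerprints.

    Assumption: a single local list stands in for a distributed ledger;
    no networking or consensus is modeled here.
    """
    blocks: list = field(default_factory=list)

    @staticmethod
    def _hash(data: dict) -> str:
        # Canonical JSON (sorted keys) so identical records always hash identically.
        encoded = json.dumps(data, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def register(self, content: bytes, source: str) -> str:
        """Record a fingerprint of `content` and link it to the previous block."""
        prev_hash = self.blocks[-1]["hash"] if self.blocks else "0" * 64
        record = {
            "index": len(self.blocks),
            "content_sha256": hashlib.sha256(content).hexdigest(),
            "source": source,
            "prev_hash": prev_hash,
        }
        # The block hash covers every field above, including the link to its predecessor.
        record["hash"] = self._hash(record)
        self.blocks.append(record)
        return record["hash"]

    def verify_chain(self) -> bool:
        """Return False if any block was altered or a link no longer matches."""
        prev_hash = "0" * 64
        for block in self.blocks:
            body = {k: v for k, v in block.items() if k != "hash"}
            if block["prev_hash"] != prev_hash or self._hash(body) != block["hash"]:
                return False
            prev_hash = block["hash"]
        return True

    def is_registered(self, content: bytes) -> bool:
        """Return True only if this exact byte sequence was registered earlier."""
        digest = hashlib.sha256(content).hexdigest()
        return any(b["content_sha256"] == digest for b in self.blocks)
```

A usage example: register an article with `ledger.register(b"original article text", source="newsroom")`, then call `ledger.is_registered(...)` on a copy encountered later. Because SHA-256 fingerprints match only byte-identical content, even a one-character edit is reported as unregistered; tolerating benign transformations such as re-encoding an image would require a different technique, for example perceptual hashing or the digital watermarking listed as technique (v).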