Software engineering was just so perfect 3 years ago. I’m sad we lost it all.

Another use case for computer-assisted programming: generating single-use (or repeat-use) #bash scripts. I needed a script to download an SQL backup from a specific folder via scp and then import the dump locally.

Could I hand-code it? Sure. But I'm not that good at bash and its variable system, and this one features a bunch of configurable command-line options plus automatic logging.

#llm #ai #webdev #sql #cli #llms

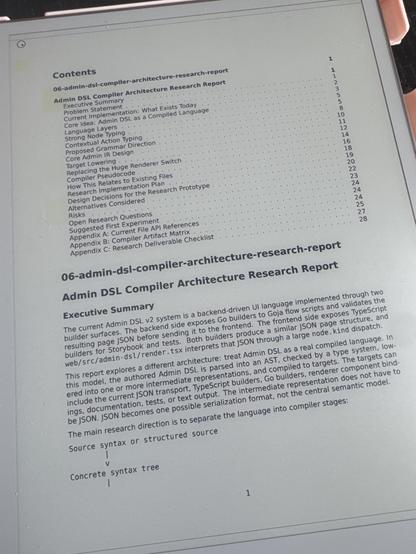

It took some walking and thinking, but we’re on exploration number 3. This is rubber ducking at a real design level: the machine helps me place my random ideas in the context of the entire codebase instead of just parroting my thoughts back.

This time I’m exploring what an intermediate representation and multiple compiler passes could add to the architecture. In “the before times” this could easily have taken a couple of days, and it would probably have been such tunnel-vision work that I would lose track of the bigger picture. I would also certainly have held on to an immature approach out of sheer sunk-cost fallacy. Today, I can easily try out and discard a dozen languages and their compilers before I settle on the proper target architecture. Ironically, it’s more token-efficient overall than going straight to the answer.

Think of the effort you’d have to put into editing and fixing even a high-level IR by hand instead of modifying a compiler pass. Plus, you’d need to redo it every time.

@pbloem That's a good question! I wrote up a longer answer at https://benjaminhan.net/posts/20260517-self-correction-after-reasoning-models/?utm_source=mastodon&utm_medium=social

The short version: yes, recent reasoning-model training *internalizes* what used to be an external, inference-time signal. The question is whether we can do it universally.

#LLMs Corrupt Your Documents When You Delegate

"Our large-scale experiment with 19 LLMs reveals that current models degrade documents during delegation: even frontier models (#Gemini 3.1 Pro, #Claude 4.6 #Opus, #GPT 5.4) corrupt an average of 25% of document content by the end of long workflows, with other models failing more severely.

...

Our analysis shows that current LLMs are unreliable delegates: they introduce sparse but severe errors that silently corrupt documents, compounding over long interaction."

Who would have expected that?

Maybe agentic AI is not the blessing everybody thinks it is.

#AI #openai

LLMs Corrupt Your Documents When You Delegate

Large Language Models (LLMs) are poised to disrupt knowledge work, with the emergence of delegated work as a new interaction paradigm (e.g., vibe coding). Delegation requires trust - the expectation that the LLM will faithfully execute the task without introducing errors into documents. We introduce DELEGATE-52 to study the readiness of AI systems in delegated workflows. DELEGATE-52 simulates long delegated workflows that require in-depth document editing across 52 professional domains, such as coding, crystallography, and music notation. Our large-scale experiment with 19 LLMs reveals that current models degrade documents during delegation: even frontier models (Gemini 3.1 Pro, Claude 4.6 Opus, GPT 5.4) corrupt an average of 25% of document content by the end of long workflows, with other models failing more severely. Additional experiments reveal that agentic tool use does not improve performance on DELEGATE-52, and that degradation severity is exacerbated by document size, length of interaction, or presence of distractor files. Our analysis shows that current LLMs are unreliable delegates: they introduce sparse but severe errors that silently corrupt documents, compounding over long interaction.

I have a hypothesis:

The reason CEOs (and the like) are so in love with ‘AI’ is that:

1) they are shallow narcissists who adore the sycophantic nature of these chatbots telling them that every stupid idea they have is amazing and world-changing.

2) they’re already using them to pretend to work, and so they think that everyone pretending to do work with chatbots is ‘efficiency’.

Mayor Sim uses '11 AI agents' to do work, but says it's strictly personal

Vancouver Mayor Ken Sim is clarifying his remarks that he uses “11 AI agents” to do a lot of his work, saying it’s in a strictly personal capacity. Sim had praised the efficiency of AI tools at the Web Summit in Vancouver on Tuesday, saying he expects AI to be 64 times better in three […]