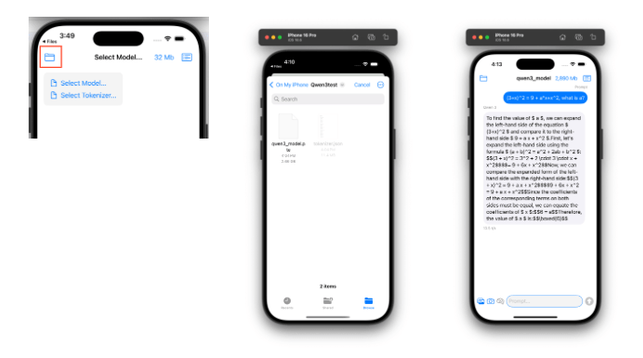

Running AI Models on iPhone: How Unsloth Made It Possible in 5 Minutes

Unsloth has released a feature for deploying LLMs to smartphones in 5 minutes. Llama 3.2 runs on an iPhone at 25 tokens per second, with complete privacy.

UPDATE: The Llama 3.2 3B fMRI project has improved how data layers are separated, allowing geometry, color mapping, the scale map, and the prompt source to be adjusted independently. Planned additions include transparency and a "ghosting" effect to distinguish structures. #Llama32 #AI #FMRI #MastodonVN #CôngNghệ

https://www.reddit.com/r/LocalLLaMA/comments/1pqzlnv/llama_32_3b_fmri_build_update/

The LLaMA 3.2 3B project has developed a new analysis tool for inspecting transformer layers and tokens in fine detail, with support for adjusting connection activations in real time. Notable features: dimension labels that light up under strong activation, and a flexible token navigation interface. A surprising learning opportunity from someone who is not a Godot developer.

#AI #MachineLearning #LLaMA32 #FMRI #PhânTíchToken #NeuralNet

https://www.reddit.com/r/LocalLLaMA/comments/1pnhi27/llama_32_3b_fmri/

Building a Fully Local AI Document Search System with Google's EmbeddingGemma

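A practical guide to building an AI document search system that runs entirely locally, with no internet connection, using Google's new EmbeddingGemma model. It provides a step-by-step tutorial for privacy-minded developers and companies.

Prompting Basics and the Math Capabilities of Llama Models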

My name is Nikita Gribanov, I am a Data Scientist in the R`n`D&ML department at Raft Digital Solutions, and today I will talk about large language models. More than 39 thousand of them already exist worldwide! From here on I will use the trendy name LLM (Large Language Model). In this article you will first learn, or refresh, the basics of communicating with language models. You will then work through a couple of real examples of tuning prompts and see the math capabilities of Llama 3.2 3B compared with Llama 3.1 8B. To get good results when solving tasks with LLMs, you need to know how to communicate with them properly, just as with people. So how do you do that?

https://habr.com/ru/companies/raft/articles/863478/

#llama32 #llama31 #llm #prompt #promptengineering #mathematics #begginers

Playing around with #ollama I got a nice gem response from #llama32

With the following prompt:

In the context of cybersecurity what are recommendation for a password expiration within a policy as part of an ISMS?

Please provide references for the password expiration intervals. What Does NIST, NERC CIP, IT Grundschutz, ISO 27001, NIS-2 say about this. Please reply with the id/name of the document, the section and the statement.

In the answer my highlight is what I got as reference for the statement in ISO 27001:

European Union. (2004). Directive 2004/36/EC on the application of the principle of equal treatment in medical treatment between men and women,

including cases of pregnancy and childbirth.

Breakthrough in Visual Language Models and Reasoning 🧠

🔍 #LLaVAo1 pioneers systematic visual reasoning capabilities:

• First #VLM to implement spontaneous step-by-step analysis like #GPT4

• New 11B model surpasses #Gemini15pro & #Llama32 performance

• Excels on 6 multimodal benchmark tests

• Breaks down complex problems into structured analysis stages

🎯 Key Features:

• Problem outline creation

• Image information interpretation

• Sequential reasoning process

• Evidence-based conclusions

• Handles science & reasoning challenges

💡 Technical Specs:

• Based on #opensource architecture

• Pretrained weights available on #HuggingFace

• 11B parameter model size

• Supports multiple reasoning domains

📚 Paper available: https://arxiv.org/abs/2411.10440

🔗 Project repository: https://github.com/PKU-YuanGroup/LLaVA-o1

LLaVA-CoT: Let Vision Language Models Reason Step-by-Step

Large language models have demonstrated substantial advancements in reasoning capabilities, particularly through inference-time scaling, as illustrated by models such as OpenAI's o1. However, current Vision-Language Models (VLMs) often struggle to perform systematic and structured reasoning, especially when handling complex visual question-answering tasks. In this work, we introduce LLaVA-CoT, a novel VLM designed to conduct autonomous multistage reasoning. Unlike chain-of-thought prompting, LLaVA-CoT independently engages in sequential stages of summarization, visual interpretation, logical reasoning, and conclusion generation. This structured approach enables LLaVA-CoT to achieve marked improvements in precision on reasoning-intensive tasks. To accomplish this, we compile the LLaVA-CoT-100k dataset, integrating samples from various visual question answering sources and providing structured reasoning annotations. Besides, we propose an inference-time stage-level beam search method, which enables effective inference-time scaling. Remarkably, with only 100k training samples and a simple yet effective inference time scaling method, LLaVA-CoT not only outperforms its base model by 7.4% on a wide range of multimodal reasoning benchmarks, but also surpasses the performance of larger and even closed-source models, such as Gemini-1.5-pro, GPT-4o-mini, and Llama-3.2-90B-Vision-Instruct.
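
The stage-by-stage selection described in the abstract can be illustrated with a minimal Python sketch. This is a simplified reading, not the paper's implementation: it assumes several candidates are sampled per stage and only the single best one is kept before moving on (effectively a beam width of one). The `generate` and `score` callables are hypothetical stand-ins for the model and its judge.

```python
from typing import Callable, List

# Stage names follow the four stages listed in the abstract.
STAGES = ["summarization", "visual interpretation", "logical reasoning", "conclusion"]

def stage_level_search(
    question: str,
    generate: Callable[[str, List[str], str], str],      # hypothetical: samples one candidate for a stage
    score: Callable[[str, List[str], str, str], float],  # hypothetical: rates a candidate for a stage
    stages: List[str] = STAGES,
    n_candidates: int = 4,
) -> List[str]:
    """Pick the best of n_candidates at each stage, then condition the next stage on it."""
    context: List[str] = []
    for stage in stages:
        # Sample several candidate outputs for this stage only.
        candidates = [generate(question, context, stage) for _ in range(n_candidates)]
        # Keep the highest-scoring candidate and discard the rest.
        best = max(candidates, key=lambda c: score(question, context, stage, c))
        context.append(best)
    return context
```

Selecting at stage boundaries, rather than per token or per full answer, means an early weak summary can be discarded before it influences the later reasoning stages.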

Does Vision Llama Understand the Impressionists?

Hi everyone, my name is Arseny, I am a Data Scientist at Raft, and today I will tell you about Visual Language Models (VLM). Large language models have already become part of our lives: we use them to simplify everyday routine and to solve business problems. A new generation of vision transformer models came out recently and has made image analysis noticeably easier, whatever domain the images come from. The September release of Llama-3.2-11b was especially notable, not only because it is the first vision model from Llama, but because a whole family of models shipped alongside it, including small ones with 1B and 3B parameters. And as you know, smaller means more usable.

https://habr.com/ru/companies/raft/articles/857118/

#Vision_Transformers #Vision_Language_Models #multimodal_llm #Llama32 #qwen2vl #llava #art #art_history

Just updated my terminal setup to get roasted by my Linux shell history. Every command I run gets judged by a local LLM, and the verdict goes straight to my window title. 😅

Including a few favorites:

"Oh, I see you use Arch, by the way..." when I update pacman

"Looking for your Brains?..." when I ran simonw's llm tool

"pwd, after ls? How lost are you?", after... well, running pwd when not recognizing the files in my cwd.

GIF shows it in action
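
For anyone curious how a setup like this might hang together, here is a rough Python sketch. It is not the author's script: `build_prompt`, `title_escape`, and `roast` are made-up names, and `ask_llm` is a hypothetical callable standing in for whatever local model is used. The one fixed piece is the xterm-style escape sequence (`ESC ] 0 ; text BEL`) that most terminal emulators accept for setting the window title.

```python
from typing import Callable

def build_prompt(command: str) -> str:
    """Hypothetical prompt asking the model for a one-line roast of a shell command."""
    return f"In one short sentence, sarcastically judge this shell command: {command}"

def title_escape(text: str, max_len: int = 80) -> str:
    """Wrap text in the xterm OSC 0 sequence that sets the window title."""
    # Drop control characters (newlines, escapes) so the model cannot break the sequence.
    clean = "".join(ch for ch in text if ch.isprintable())
    return f"\033]0;{clean[:max_len]}\007"

def roast(command: str, ask_llm: Callable[[str], str]) -> str:
    """Ask the (hypothetical) local model for a verdict and return the title escape string."""
    return title_escape(ask_llm(build_prompt(command)))

if __name__ == "__main__":
    # Stand-in model for demonstration; a real setup would query a local LLM here.
    print(roast("pwd", lambda prompt: "pwd, after ls? How lost are you?"), end="")
```

A shell hook that runs after each command could call something like `roast` with the last history entry and print the result.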

#linux #terminal #llama32 #llm #archlinux #shellshaming #ramtospare