Local LLMs as constrained data transformers: Duolingo vocab converted to Anki cards using Qwen 2.5 32B on a MacBook M2 Max (45-minute run). Key insights: larger models sometimes over-help, while Qwen 2.5 32B balances output quality and instruction adherence. Practical iteration on consumer hardware. #LocalLLaMA #DataTransformation #LLMApplications #AnkiIntegration #Qwen32B

12-factor Agents: Patterns of reliable LLM applications

https://github.com/humanlayer/12-factor-agents

#HackerNews #12factorAgents #LLMapplications #ReliablePatterns #AIdevelopment #SoftwareEngineering

GitHub - humanlayer/12-factor-agents: What are the principles we can use to build LLM-powered software that is actually good enough to put in the hands of production customers?

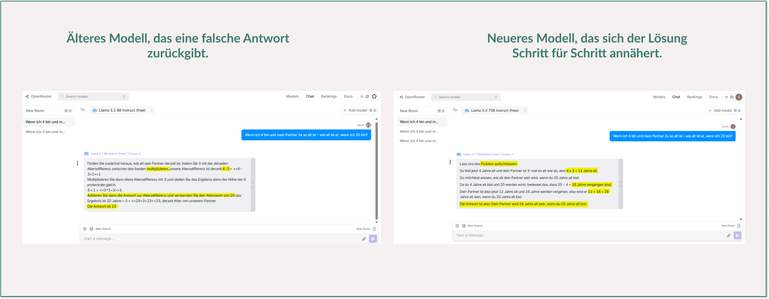

What happens when a language model solves maths problems?

"If I’m 4 years old and my partner is 3x my age – how old is my partner when I’m 20?"

Do you know the answer?

🤥 An older Llama model (by Meta) said 23.

🤓 A newer Llama model said 28 – correct.
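Why 28 is right: the age gap between two people never changes. A minimal sketch of the arithmetic (the function and parameter names are made up for illustration):

```python
def partner_age_when(my_age_now: int, ratio: int, my_age_later: int) -> int:
    """Partner's age at a later point, given the partner is `ratio` times my age now."""
    partner_age_now = my_age_now * ratio      # 4 * 3 = 12
    age_gap = partner_age_now - my_age_now    # 12 - 4 = 8, constant over time
    return my_age_later + age_gap             # 20 + 8 = 28


print(partner_age_when(4, 3, 20))  # prints 28
```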

So what made the difference?

Today I kicked off the 5-day Kaggle Generative AI Challenge.

Day 1: Fundamentals of LLMs, prompt engineering & more.

Three highlights from the session:

☕ Chain-of-Thought Prompting

→ Models that "think" step by step tend to produce more accurate answers. Sounds simple – but just look at the screenshots...
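In practice, chain-of-thought prompting can be as small as one extra instruction. A rough sketch that only builds the two prompt strings; the exact wording and the helper names are assumptions, not taken from the Kaggle course, and no model is called:

```python
QUESTION = (
    "If I'm 4 years old and my partner is 3x my age, "
    "how old is my partner when I'm 20?"
)


def direct_prompt(question: str) -> str:
    # Asks for the answer only, leaving no room for intermediate reasoning.
    return f"{question}\nAnswer with a single number."


def chain_of_thought_prompt(question: str) -> str:
    # Asks the model to write out its reasoning before committing to an answer.
    return (
        f"{question}\n"
        "Let's think step by step, then give the final answer on its own line."
    )


print(direct_prompt(QUESTION))
print("---")
print(chain_of_thought_prompt(QUESTION))
```

Send both strings to the same model and compare the answers.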

☕ Parameters like temperature and top_p

→ Try this on together.ai: Prompt a model with “Suggest 5 colors” – once with temperature 0 and once with 2.

Notice the difference?
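What temperature does under the hood, roughly: the model's scores (logits) for each candidate token are divided by the temperature before being turned into probabilities. A toy sketch in plain Python; the logits are invented numbers, and treating temperature 0 as "always pick the top token" is an assumption about how APIs commonly handle it:

```python
import math


def softmax_with_temperature(logits, temperature):
    """Turn raw scores into probabilities, sharpened or flattened by temperature."""
    if temperature == 0:
        # Assumed convention: temperature 0 means greedy decoding,
        # i.e. all probability on the highest-scoring token.
        best = max(range(len(logits)), key=lambda i: logits[i])
        return [1.0 if i == best else 0.0 for i in range(len(logits))]
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Invented scores for four candidate next tokens.
tokens = ["red", "blue", "green", "teal"]
logits = [3.0, 2.0, 1.0, 0.0]

for t in (0, 1, 2):
    probs = softmax_with_temperature(logits, t)
    print(t, dict(zip(tokens, (round(p, 3) for p in probs))))
```

At temperature 0 the top token always wins, so the output is repeatable. At 2 the probabilities sit much closer together, so less likely tokens get sampled more often. top_p is a separate filter and is not modeled here.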

☕ Zero-shot, One-shot, Few-shot prompting

→ Zero-shot gives the model no examples, one-shot gives one, few-shot gives several. Examples in the prompt usually help the model infer the format and style you want.
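The three variants differ only in how many worked examples go into the prompt. A small sketch of a prompt builder; the task, the example pairs, and the `Input:`/`Output:` layout are all made up for illustration:

```python
def build_prompt(task, examples, query):
    """Assemble a prompt from a task description, example pairs, and the real query."""
    parts = [task]
    for source, target in examples:
        parts.append(f"Input: {source}\nOutput: {target}")
    # The final block is left open for the model to complete.
    parts.append(f"Input: {query}\nOutput:")
    return "\n\n".join(parts)


TASK = "Classify the sentiment of the review as positive or negative."
EXAMPLES = [  # hypothetical examples
    ("Loved every minute of it.", "positive"),
    ("A complete waste of time.", "negative"),
    ("Would happily watch it again.", "positive"),
]
QUERY = "The plot made no sense."

zero_shot = build_prompt(TASK, [], QUERY)
one_shot = build_prompt(TASK, EXAMPLES[:1], QUERY)
few_shot = build_prompt(TASK, EXAMPLES, QUERY)

print(few_shot)
```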

#PromptEngineering #GenerativeAI #LLM #Kaggle #LLMApplications #AI #DataScience #Google #Python #Tech