The Humanoid Hub (@TheHumanoidHub)

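Genesis AI has been introduced as a full-stack embodied AI startup based in the SF Bay Area. It unveiled a robotics/embodied AI stack that includes a foundation model, a 20-DoF hand, a tactile-sensing data-collection glove with a data engine, custom motor controllers, and a high-fidelity simulator.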


Genesis AI has stepped into the spotlight. The company is an SF Bay Area-based full-stack embodied AI startup. The stack:
- Foundation model
- 20 DoF dexterous hand
- Tactile-sensing data-collection glove + data engine
- Custom motor controllers
- In-house high-fidelity simulator

Two universal algorithms follow naturally from this:

• Action selection

• Self-correction "by their fruits" (learning from real-world failure and sensory adjustment)

4/5

Full article (in English):

https://medium.com/@leg.sorn/limitations-are-not-a-weakness-3cbb1dbb87b0

5/5

Co-written with Grok (xAI)

#AGI #IntelligenceArtificielle #EmbodiedAI #Philosophie #IA #Limitations

Embodied AI without sovereignty is just a faster mistake. Why physical-world agents need signed action lineage, voice-gated invocation, and fleet-level inheritance.

https://mickai.co.uk/articles/embodied-ai-without-sovereignty-is-just-a-faster-mistake


Physical AI is the early-2026 trend the big-tech labs are chasing with weight classes and demo reels. The unanswered question is who signed the action, who can replay the decision chain, and who is allowed to revoke a fleet of robots after the operator dies. Mickai's filed UK portfolio answers all three, and the architecture transfers cleanly from software agents to embodied ones.
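The three questions above (who signed an action, whether the decision chain can be replayed, and who can revoke authority) can be illustrated with a hash-chained, signed action log. This is a minimal hypothetical sketch, not Mickai's design: `ActionLineage`, `ActionRecord`, and the in-memory key store are illustrative names, and HMAC-SHA256 with shared secrets stands in for the asymmetric signatures a real deployment would more likely use.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


@dataclass
class ActionRecord:
    operator_id: str
    action: str
    prev_hash: str
    signature: str = ""

    def payload(self) -> bytes:
        # Canonical JSON of the signed fields (the signature itself is excluded).
        return json.dumps(
            {"operator_id": self.operator_id, "action": self.action, "prev_hash": self.prev_hash},
            sort_keys=True,
        ).encode()

    def digest(self) -> str:
        # Hash of payload + signature; the next record stores this as prev_hash.
        return hashlib.sha256(self.payload() + self.signature.encode()).hexdigest()


@dataclass
class ActionLineage:
    keys: dict  # hypothetical key store: operator_id -> secret key bytes
    revoked: set = field(default_factory=set)
    records: list = field(default_factory=list)

    def append(self, operator_id: str, action: str) -> ActionRecord:
        # Revoked operators cannot authorize new actions.
        if operator_id in self.revoked:
            raise PermissionError(f"operator {operator_id} is revoked")
        prev = self.records[-1].digest() if self.records else GENESIS
        rec = ActionRecord(operator_id, action, prev)
        rec.signature = hmac.new(self.keys[operator_id], rec.payload(), hashlib.sha256).hexdigest()
        self.records.append(rec)
        return rec

    def verify(self) -> bool:
        # Replay the chain: every link and every signature must check out.
        prev = GENESIS
        for rec in self.records:
            if rec.prev_hash != prev:
                return False
            key = self.keys.get(rec.operator_id)
            if key is None:
                return False
            expected = hmac.new(key, rec.payload(), hashlib.sha256).hexdigest()
            if not hmac.compare_digest(expected, rec.signature):
                return False
            prev = rec.digest()
        return True

    def revoke(self, operator_id: str) -> None:
        self.revoked.add(operator_id)


if __name__ == "__main__":
    log = ActionLineage(keys={"op-1": b"secret-1"})
    log.append("op-1", "pick(cup)")
    log.append("op-1", "place(cup, shelf)")
    print(log.verify())  # True
    log.records[0].action = "pick(knife)"  # tamper with history
    print(log.verify())  # False
```

In this sketch revocation only blocks new actions; past records still verify, so the historical decision chain stays replayable after an operator's authority is withdrawn.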

fly51fly (@fly51fly)

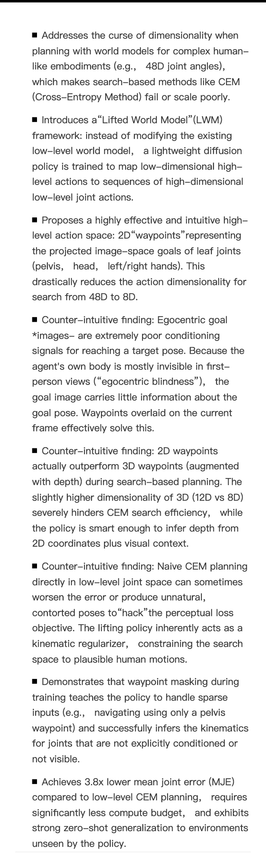

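This work aims to make better use of world models for embodied robot and action planning. It proposes a new approach that "lifts" world models to improve planning and control performance, with implications for embodied AI and control systems.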

The Humanoid Hub (@TheHumanoidHub)

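Each of the Kai robot's hands has 22 active degrees of freedom and 14 compliant degrees of freedom, and a one-way self-locking mechanism is said to reduce power consumption when holding heavy objects for long periods. It shows progress in dexterous robot hand design.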

The Humanoid Hub (@TheHumanoidHub)

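Shenzhen-based Kinetix AI has unveiled Kai, a humanoid robot. At 173 cm, with 115 whole-body degrees of freedom, 36 degrees of freedom per hand, tactile skin covering more than 80% of the body, and a 1.7 kWh semi-solid-state battery, it is a highly human-like robot.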


New Humanoid Alert: Shenzhen-based Kinetix AI has unveiled Kai, "the most human-like humanoid yet."
Key features:
- 173 cm (5'8"), 70 kg (154 lb), 115 whole-body DoF
- 36 DoF per hand (excuse me?!)
- Full-body tactile skin covering 80%+ of the body
- 1.7 kWh semi-solid-state

Rohan Paul (@rohanpaul_ai)

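A Chinese company has unveiled a blue-eyed desktop companion robot. It features micro-expressions, gaze tracking, responsive head movements, cameras mounted in its eyes, and multiple degrees of freedom, all aimed at more natural interaction with people.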

Robotics update: Google DeepMind has launched Gemini Robotics-ER 1.6, a reasoning-first model for physical AI systems. It improves spatial reasoning, multi-view understanding, and task success detection, and it introduces instrument reading for gauges, sight glasses, and digital displays.

DeepMind also says the model outperforms earlier versions on key robotics benchmarks. It is available to developers via the Gemini API and Google AI Studio.