MAYØVKA RAVE: TOTAL RAZJEB @ Waldorffa25 - 01 May feat. DNNS, FennX, Leo Bufera + more

I am really proud to announce that our latest effort to show the advantages of our analog/digital #neuromorphic spiking neural network chips for complex biomedical applications has just been published here: https://rdcu.be/ef5N0

The paper demonstrates that *small*, *highly variable* and *low accuracy* #SNNs can indeed be useful, without having to resort to #backprop in large-scale #DNNs! 😉

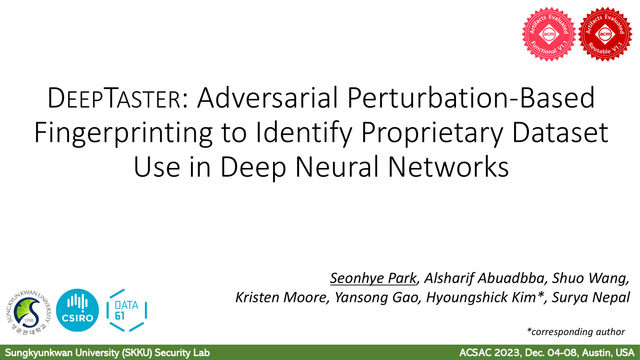

#DeepLearning #DataSecurity

'Densely Connected G-invariant Deep Neural Networks with Signed Permutation Representations', by Devanshu Agrawal, James Ostrowski.

http://jmlr.org/papers/v24/23-0294.html

#representations #dnns #dnn

We techies love solving problems with cool technology, to where we attempt to implement the e

https://blog.deepsec.net/deepsec-2023-talk-skynet-wants-your-passwords-the-role-of-ai-in-automating-social-engineering-alexander-hurbean-wolfgang-ettlinger/

#Conference #AI #AttackPrevention #Deepfakes #DeepSec2023 #DNNs #SocialEngineering #Talk #Transformers

DeepSec 2023 Talk: Skynet wants your Passwords! The Role of AI in Automating Social Engineering - Alexander Hurbean & Wolfgang Ettlinger

This presentation at DeepSec 2023 explores the use of Artificial Intelligence Large Language Models for social engineering campaigns.

Training DNNs Resilient to Adversarial and Random Bit-Flips by Learning Quantization Ranges

Beyond Distribution Shift: Spurious Features Through the Lens of Training Dynamics

Compressors such as #gzip + #kNN (k-nearest-neighbor, i.e. your grandparents' #classifier) beat the living daylights out of deep neural networks (#DNNs) in sentence classification.

H/t @lgessler

Without any training parameters, this non-parametric, easy and lightweight (no #GPU) method achieves results that are competitive with non-pretrained deep learning methods on six in-distribution datasets. It even outperforms BERT on all five OOD datasets.
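For the curious, here is a rough sketch of how a gzip + kNN classifier can work. It assumes normalized compression distance (NCD) as the distance measure and a simple majority vote. The function names (`clen`, `ncd`, `knn_predict`) and the toy training data are made up for illustration and are not taken from the linked work.

```python
import gzip
from collections import Counter


def clen(text: str) -> int:
    """Length in bytes of the gzip-compressed text."""
    return len(gzip.compress(text.encode("utf-8")))


def ncd(a: str, b: str) -> float:
    """Normalized compression distance between two strings.

    Texts that share structure compress better together, so the
    concatenation costs little more than the larger text alone.
    """
    ca, cb = clen(a), clen(b)
    cab = clen(a + " " + b)
    return (cab - min(ca, cb)) / max(ca, cb)


def knn_predict(query: str, train: list[tuple[str, str]], k: int = 3) -> str:
    """Label the query by majority vote among its k nearest training texts."""
    nearest = sorted(train, key=lambda item: ncd(query, item[0]))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


# Toy, made-up training set: (text, label) pairs.
TRAIN = [
    ("the striker scored twice in the second half of the match", "sports"),
    ("the goalkeeper saved a penalty in the final minute", "sports"),
    ("the team won the league title after a tense match", "sports"),
    ("the central bank raised interest rates to fight inflation", "finance"),
    ("stock markets fell sharply after the earnings report", "finance"),
    ("investors moved money into bonds as interest rates rose", "finance"),
]

if __name__ == "__main__":
    print(knn_predict("the match ended after the striker scored a penalty", TRAIN))
```

There are no weights to train here: all the "learning" is the stored training texts plus the compressor, which is why no GPU is needed. The cost is that every prediction compresses the query against every training example.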

🧠
