Need a lightweight solution for few-shot domain-specific sentence classification?

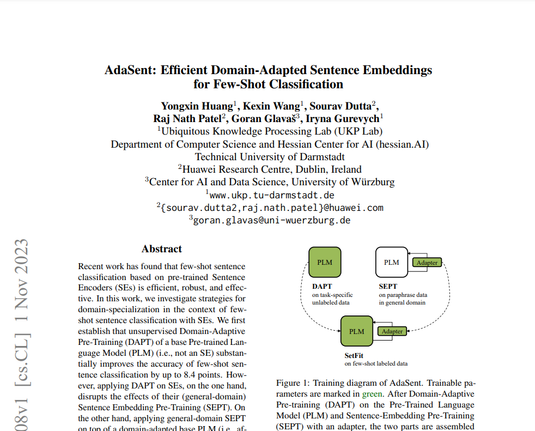

We propose #AdaSent!

🚀 Up to 7.2 acc. gain in 8-shot classification with 10K unlabeled data

🪶 Small backbone with 82M parameters

🧩 Reusable general sentence adapter across domains

(1/🧵) #EMNLP2023