https://youtu.be/UUuC6Q1SIoM?t=2190 https://twitter.com/frimelle/status/1747919662405353701

Some highlights from EMNLP 2023

https://health-nlp.com/posts/emnlp23.html

#EMNLP2023 #ml #machinelearning #NLProc #NLP #ai #artificialintelligence #conference

RT by @wikiresearch: Excited to start the new year by presenting our #EMNLP2023 paper on Transparent Stance Detection in Multilingual Wikipedia Editor Discussions w/ @rnav_arora @IAugenstein at the @Wikimedia Research Showcase!

Online, 17.01., 17:30 UTC

https://www.mediawiki.org/wiki/Wikimedia_Research/Showcase#January_2024 @wikiresearch https://twitter.com/frimelle/status/1746569501284368467

RT by @wikiresearch: Thanks @wikiresearch for sharing!

Our #emnlp2023 paper is also available on ACL Anthology now: https://aclanthology.org/2023.emnlp-main.100/ https://twitter.com/ConiaSimone/status/1740084544881963053

A paper on the topic by Max Glockner (UKP Lab), @ievaraminta Staliūnaitė (University of Cambridge), James Thorne (KAIST AI), Gisela Vallejo (University of Melbourne), Andreas Vlachos (University of Cambridge) and Iryna Gurevych was accepted to TACL and has just been presented at #EMNLP2023.

AmbiFC: Fact-Checking Ambiguous Claims with Evidence

Automated fact-checking systems verify claims against evidence to predict their veracity. In real-world scenarios, the retrieved evidence may not unambiguously support or refute the claim and yield conflicting but valid interpretations. Existing fact-checking datasets assume that the models developed with them predict a single veracity label for each claim, thus discouraging the handling of such ambiguity. To address this issue we present AmbiFC, a fact-checking dataset with 10k claims derived from real-world information needs. It contains fine-grained evidence annotations of 50k passages from 5k Wikipedia pages. We analyze the disagreements arising from ambiguity when comparing claims against evidence in AmbiFC, observing a strong correlation of annotator disagreement with linguistic phenomena such as underspecification and probabilistic reasoning. We develop models for predicting veracity handling this ambiguity via soft labels and find that a pipeline that learns the label distribution for sentence-level evidence selection and veracity prediction yields the best performance. We compare models trained on different subsets of AmbiFC and show that models trained on the ambiguous instances perform better when faced with the identified linguistic phenomena.
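The soft-label idea in the abstract can be illustrated with a minimal sketch: instead of collapsing annotator votes into a single veracity label, the votes become a target distribution, and the model is scored with cross-entropy against that distribution. This is not the authors' code. The label names, function names, and example votes below are assumptions made for illustration only.

```python
import math
from collections import Counter

# Hypothetical label set, chosen for illustration; not taken from the AmbiFC release.
LABELS = ("supports", "refutes", "neutral")


def soft_label(votes):
    """Turn a list of annotator votes into a probability distribution over LABELS."""
    counts = Counter(votes)
    total = len(votes)
    return [counts[label] / total for label in LABELS]


def softmax(logits):
    """Numerically stable softmax over a list of raw scores."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    norm = sum(exps)
    return [e / norm for e in exps]


def soft_cross_entropy(target, logits):
    """Cross-entropy between a soft target distribution and model logits."""
    probs = softmax(logits)
    return -sum(t * math.log(p) for t, p in zip(target, probs) if t > 0)


# Four annotators disagree on one claim/evidence pair (made-up votes).
target = soft_label(["supports", "supports", "neutral", "refutes"])
loss = soft_cross_entropy(target, [2.0, 0.5, 0.5])
```

With a hard label the disagreement above would be discarded; with the soft target, a model that puts some probability mass on the minority labels is penalized less than one that is fully confident in "supports".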

At #EMNLP2023, our colleague Jonathan Tonglet presented his master's thesis, conducted at KU Leuven. Find out more about »SEER: A Knapsack approach to Exemplar Selection for In-Context HybridQA« in this thread 🧵:

UKP Lab (@[email protected])

Can we combine integer linear programming with exemplar selection to improve In-Context Learning? Yes! All you need is to optimize your Knapsack 🎒 The paper by Jonathan Tonglet, Manon Reusens, Philipp Borchert and Bart Baesens on #SEER was just accepted to #EMNLP2023 – learn more in this thread (1/🧵). 📰 https://arxiv.org/abs/2310.06675v2

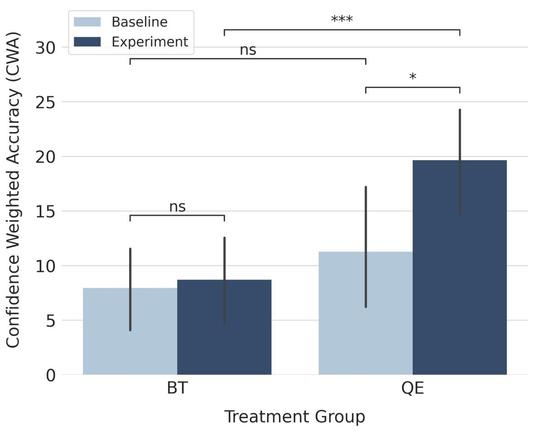
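To make the knapsack framing concrete, here is a small sketch of exemplar selection as a 0/1 knapsack: each candidate exemplar has a relevance score and a token cost, and the goal is to maximize total relevance within a prompt token budget. It uses plain dynamic programming rather than the integer linear programming formulation mentioned in the post, and it omits any additional constraints the paper may impose. The function name, scores, and token counts are hypothetical.

```python
def select_exemplars(exemplars, budget):
    """Pick exemplars maximizing total score within a token budget (0/1 knapsack).

    exemplars: list of (name, score, token_cost) tuples; token_cost is a positive int.
    budget: maximum total token cost allowed in the prompt.
    Returns (chosen_names, total_score).
    """
    # best[b] holds (best score, chosen indices) achievable with capacity b.
    best = [(0.0, [])] * (budget + 1)
    for i, (_, score, cost) in enumerate(exemplars):
        # Iterate capacities downwards so each exemplar is used at most once.
        for b in range(budget, cost - 1, -1):
            candidate = best[b - cost][0] + score
            if candidate > best[b][0]:
                best[b] = (candidate, best[b - cost][1] + [i])
    total, chosen = best[budget]
    return [exemplars[i][0] for i in chosen], total


# Made-up candidates: (name, relevance score, token cost).
candidates = [("ex_a", 0.9, 60), ("ex_b", 0.5, 30), ("ex_c", 0.45, 30)]
names, total = select_exemplars(candidates, budget=60)
```

In this toy case the two cheaper exemplars together beat the single most relevant one, which is the kind of trade-off a greedy top-k selection by relevance alone would miss.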

Many models produce outputs that are hard to verify for an end user.

🏆 Our new #emnlp2023 paper won an outstanding paper award for showing that a secondary quality estimation model can help users decide when to rely on the model output.

We ran a controlled experiment showing that a calibrated quality estimation model can make physicians twice as good at correctly deciding when to rely on a translation model's output.

You can find our paper here:

📃 https://arxiv.org/abs/2311.00408

and our code here:

💻 https://github.com/UKPLab/AdaSent

Check out the work of our authors Yongxin Huang, Kexin Wang, Sourav Dutta, Raj Nath Patel, Goran Glavaš and Iryna Gurevych! (6/🧵) #EMNLP2023 #AdaSent #NLProc

AdaSent: Efficient Domain-Adapted Sentence Embeddings for Few-Shot Classification

Recent work has found that few-shot sentence classification based on pre-trained Sentence Encoders (SEs) is efficient, robust, and effective. In this work, we investigate strategies for domain-specialization in the context of few-shot sentence classification with SEs. We first establish that unsupervised Domain-Adaptive Pre-Training (DAPT) of a base Pre-trained Language Model (PLM) (i.e., not an SE) substantially improves the accuracy of few-shot sentence classification by up to 8.4 points. However, applying DAPT on SEs, on the one hand, disrupts the effects of their (general-domain) Sentence Embedding Pre-Training (SEPT). On the other hand, applying general-domain SEPT on top of a domain-adapted base PLM (i.e., after DAPT) is effective but inefficient, since the computationally expensive SEPT needs to be executed on top of a DAPT-ed PLM of each domain. As a solution, we propose AdaSent, which decouples SEPT from DAPT by training a SEPT adapter on the base PLM. The adapter can be inserted into DAPT-ed PLMs from any domain. We demonstrate AdaSent's effectiveness in extensive experiments on 17 different few-shot sentence classification datasets. AdaSent matches or surpasses the performance of full SEPT on DAPT-ed PLM, while substantially reducing the training costs. The code for AdaSent is available.