The prEN 18228 Problem: Why Your AI Risk Assessment Will Fail the First Real Test

Most AI risk assessments look solid on paper and collapse the moment a regulator, client, or auditor asks a simple question: what exactly can go wrong, how likely is it, and what does it cost when it does?

That gap is about to matter more.

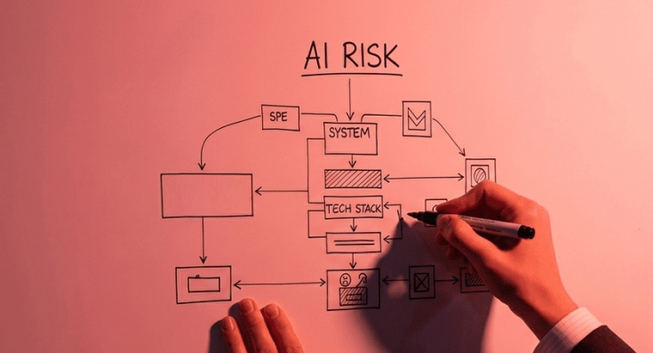

A new European standard, prEN 18228, sets out a formal process for managing risks in AI systems across their full life cycle. It is designed to support regulatory expectations by requiring organizations to identify hazards, estimate and evaluate risks, […]

https://hernanhuwyler.wordpress.com/2026/05/08/the-pren-18228-problem-why-your-ai-risk-assessment-will-fail-the-first-real-test/