wikipedia-article-transform: a CLI tool and agentic skill for working with Wikipedia articles in a token-efficient way. It transforms the HTML content into content-focused Markdown, plain text, or structured JSON, which can save about 90% of tokens.

https://thottingal.in/blog/2026/03/14/wikipedia-article-transform/

CLI for transforming Wikipedia articles to text, markdown, and JSON

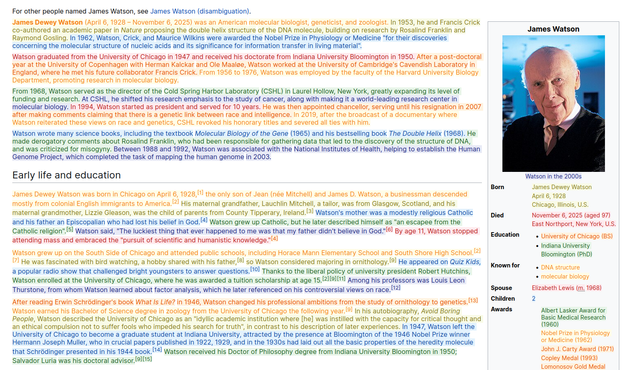

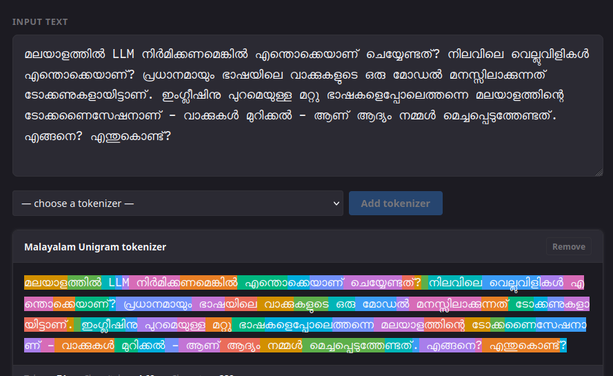

We are witnessing a resurgence and evolution of Command Line Interfaces (CLIs), accelerated by AI agents. Text-based, scriptable CLI tools work very well with LLM-based workflows. Accessing Wikipedia articles during an agent session is common. Usually, a webfetch call is used to get the HTML for a page from a URL like https://en.wikipedia.org/wiki/2026_Winter_Olympics. That works, and LLMs are smart enough to read HTML. But there is a cost: HTML is designed for rendering, so the model must skip over a lot of non-content markup to get to the useful text. That increases token usage and adds noise to the context. Can we improve this?
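To make the overhead concrete, here is a minimal sketch that strips markup from a small HTML fragment using only Python's standard library and compares sizes. It is not how wikipedia-article-transform works internally. The `TextExtractor` class, the `html_to_text` helper, and the `SAMPLE_HTML` snippet are hypothetical names and data made up for illustration, and character count is used only as a rough stand-in for token count.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects text nodes, skipping the bodies of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep text only when we are not inside a skipped element.
        if self._skip_depth == 0:
            self.parts.append(data)


def html_to_text(html: str) -> str:
    """Return the text content of `html` with whitespace collapsed."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join("".join(parser.parts).split())


# Hypothetical fragment for illustration; not real Wikipedia markup.
SAMPLE_HTML = """
<div class="mw-parser-output"><style>.infobox{float:right}</style>
<p id="intro">The <a href="/wiki/Winter_Olympic_Games" title="Winter Olympic Games">Winter Olympics</a>
is a <span class="nowrap">multi-sport event</span>.</p></div>
"""

text = html_to_text(SAMPLE_HTML)
print(text)
print(len(SAMPLE_HTML), "chars of HTML ->", len(text), "chars of text")
```

Even in this tiny fragment, most of the characters are tags, attributes, and styling rather than prose. Real article pages carry far more of this, such as navigation, infoboxes, and reference markup, so the gap tends to be larger there.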