| Other | @dancrossnyc |

| Radio | https://kz2x.radio |

| Web | https://pub.gajendra.net/ |

| Git | https://github.com/dancrossnyc/ |

Dan Cross


People with #adhd have three types of workday:

* Get absolutely nothing done

* Get 4 hours of work done at a random point in time

* Get 40 hours of work done in 4 hours

All types are sprinkled with work-unrelated side quests.

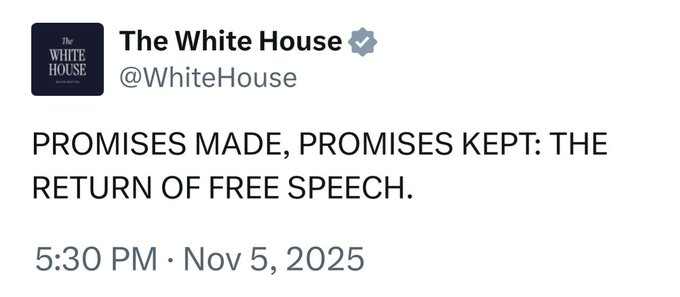

Your regular reminder that, as bad as you think things currently are living in a country run by an incompetent and corrupt group of racist grifters, it’s going to get worse.

Look out for yourselves and your community.

I want to be clear that this is pretty divorced from my own views of likely scenarios, even likely nightmare scenarios, so they're not getting this from me.

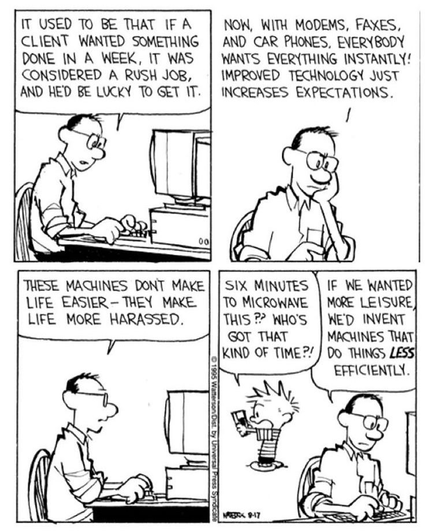

If you're reading this and you're involved in LLM hype, I'd really like you to take a moment to pause and examine the fact that this is the commonly accepted peer wisdom in a 2nd-grade classroom right now. That their parents' generation is actively involved in betraying them to a distant and inscrutable machine-god of pure greed.