hotwheels

This March, I 🔊 presented my code, hotwheels, at the Leibniz Supercomputing Centre (LRZ). I showed its modular design, its GPU-ready kernels, and how they could change the game for cosmological simulations.

Slides here: https://docs.google.com/presentation/d/14BeqAdOqfYc5rbpVECS_ghbFJorFckbAbfjhlebwJUs 🧑🏫

🔥 New hotwheels tutorial is out!

A direct consequence of hotwheels' extreme modularity is that you can easily stack multiple particle-mesh (PM) solvers like nested dolls!

In this demo, we layer PM grids over an N-body-sampled Navarro-Frenk-White (NFW) halo and match the analytic acceleration profile down to 1 kpc on a galaxy-cluster scale.

Link: https://www.ict.inaf.it/gitlab/hotwheels/gitlab-profile/-/blob/main/run_pm_dmo_NFW_fixed_timestep.md
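
For readers who want the reference curve, here is a minimal sketch of the analytic NFW acceleration that a stacked-PM result would be compared against. It is not taken from the tutorial: the function names and the halo parameters (`rho_s`, `r_s`) are hypothetical, chosen only to be roughly cluster-like. It uses the standard NFW enclosed mass, M(<r) = 4π ρ_s r_s³ [ln(1+x) − x/(1+x)] with x = r/r_s.

```python
import math

# Gravitational constant in kpc (km/s)^2 / Msun
G = 4.30091e-6

def nfw_enclosed_mass(r, rho_s, r_s):
    """Mass inside radius r (kpc) for an NFW halo, in Msun."""
    x = r / r_s
    return 4.0 * math.pi * rho_s * r_s**3 * (math.log1p(x) - x / (1.0 + x))

def nfw_acceleration(r, rho_s, r_s):
    """Magnitude of the inward radial acceleration at r, in (km/s)^2 / kpc."""
    return G * nfw_enclosed_mass(r, rho_s, r_s) / r**2

if __name__ == "__main__":
    # Hypothetical, roughly cluster-scale parameters (assumptions, not tutorial values)
    rho_s = 1.0e6   # Msun / kpc^3
    r_s = 400.0     # kpc
    for r in (1.0, 10.0, 100.0, 1000.0):
        print(f"r = {r:7.1f} kpc  a = {nfw_acceleration(r, rho_s, r_s):.4e} (km/s)^2/kpc")
```

Close to the centre the acceleration tends to the constant 2π G ρ_s r_s, which is why resolving the profile down to 1 kpc needs a fine innermost grid.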

🚀 hotwheels Tree Build: CPU vs GPU scaling test 🚀

CPU OpenMP parallel tree build scales linearly with threads up to 32 threads! GPU scales like an 8-thread CPU - great when cores are limited! More particles per leaf = faster tree & less traversal!

#HPC #GPU #OpenMP #Octree #Scaling https://www.ict.inaf.it/gitlab/hotwheels/
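
To illustrate the "more particles per leaf" point, here is a minimal serial octree sketch in pure Python. It is not the hotwheels tree builder (which is OpenMP/GPU code); the names `build_octree`, `count_nodes`, and `leaf_size` are hypothetical. It only shows that a larger leaf capacity produces far fewer nodes to build and traverse.

```python
import random

def build_octree(points, center, half, leaf_size, depth=0, max_depth=20):
    """Recursively split a cube until each leaf holds at most leaf_size points."""
    if len(points) <= leaf_size or depth == max_depth:
        return {"points": points, "children": None}
    # Bin points into the 8 octants around the cube centre
    octants = [[] for _ in range(8)]
    for p in points:
        idx = (int(p[0] >= center[0])
               | (int(p[1] >= center[1]) << 1)
               | (int(p[2] >= center[2]) << 2))
        octants[idx].append(p)
    q = half / 2.0
    children = []
    for idx, pts in enumerate(octants):
        if not pts:
            continue  # skip empty octants
        child_center = (
            center[0] + (q if idx & 1 else -q),
            center[1] + (q if idx & 2 else -q),
            center[2] + (q if idx & 4 else -q),
        )
        children.append(build_octree(pts, child_center, q, leaf_size, depth + 1, max_depth))
    return {"points": None, "children": children}

def count_nodes(node):
    """Total number of nodes (internal + leaves) in the tree."""
    if node["children"] is None:
        return 1
    return 1 + sum(count_nodes(c) for c in node["children"])

def count_leaf_points(node):
    """Number of points stored across all leaves."""
    if node["children"] is None:
        return len(node["points"])
    return sum(count_leaf_points(c) for c in node["children"])

if __name__ == "__main__":
    random.seed(1)
    pts = [(random.random(), random.random(), random.random()) for _ in range(5000)]
    for leaf_size in (1, 8, 32):
        tree = build_octree(pts, (0.5, 0.5, 0.5), 0.5, leaf_size)
        print(leaf_size, count_nodes(tree))
```

The trade-off is that larger leaves mean more direct particle-particle work inside each leaf, so the best leaf size depends on the hardware.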

Reminder: @numba is an *excellent* accelerator for numeric computations in Python. Also, issues with Python's stability are greatly exaggerated. Case in point: I just ran my 8yo n-body benchmarks without modification:

https://github.com/jni/nbody-numba

and they were just 10% slower than the fastest C code — and that's including all the Python launch time and JIT warmup!

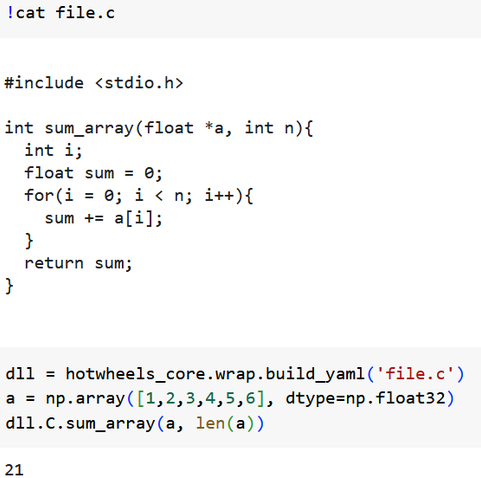

⚡️ Kernels are written in C to make the most of HPC facilities.

🛠️ Python wrappers make it easy to run them (inspired by the power of ML tools).

🔗Link: https://www.ict.inaf.it/gitlab/hotwheels

A look back at #ESAEuclid in 2024, and a look forward into what to expect from #Euclid in the coming year:

https://www.euclid-ec.org/euclid-in-2024-and-whats-to-come-in-2025/

You can see how easy it is to use the hotwheels C kernels from Python:

https://colab.research.google.com/drive/1EObmEt7XK56EpwHh7PQ-viR7PHZ5H0pl

An image of the multi-domain, Hilbert-curve-based domain decomposition in `hotwheels`, the new N-body code for hydrodynamic cosmological simulations

(repo: https://www.ict.inaf.it/gitlab/hotwheels)
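
For anyone unfamiliar with the idea, here is a minimal 2D sketch of a Hilbert-curve domain decomposition. It is not the hotwheels implementation (which is 3D and multi-domain): the functions `hilbert_key_2d` and `decompose` are hypothetical names, and the key computation is the standard iterative grid-to-index mapping. Particles are sorted by the Hilbert key of their cell and cut into contiguous, equally sized chunks, so each domain stays spatially compact.

```python
import random

def hilbert_key_2d(n, x, y):
    """Hilbert index of integer cell (x, y) on an n-by-n grid (n must be a power of two)."""
    d = 0
    s = n // 2
    while s > 0:
        rx = 1 if x & s else 0
        ry = 1 if y & s else 0
        d += s * s * ((3 * rx) ^ ry)
        # Rotate/flip the quadrant so the sub-curve has the right orientation
        if ry == 0:
            if rx == 1:
                x, y = n - 1 - x, n - 1 - y
            x, y = y, x
        s //= 2
    return d

def decompose(particles, n_cells, n_domains):
    """Split particles with coordinates in [0, 1) into n_domains contiguous Hilbert-ordered chunks."""
    def key(p):
        cx = min(int(p[0] * n_cells), n_cells - 1)
        cy = min(int(p[1] * n_cells), n_cells - 1)
        return hilbert_key_2d(n_cells, cx, cy)

    ordered = sorted(particles, key=key)
    base, extra = divmod(len(ordered), n_domains)
    domains, start = [], 0
    for i in range(n_domains):
        size = base + (1 if i < extra else 0)  # spread the remainder over the first domains
        domains.append(ordered[start:start + size])
        start += size
    return domains

if __name__ == "__main__":
    random.seed(2)
    pts = [(random.random(), random.random()) for _ in range(1000)]
    for i, dom in enumerate(decompose(pts, 64, 4)):
        print(f"domain {i}: {len(dom)} particles")
```

Because consecutive Hilbert keys always belong to neighbouring cells, cutting the sorted list anywhere yields connected regions, which keeps communication surfaces between domains small.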