New update for the slides of my talk "Run LLMs Locally": WebGPU

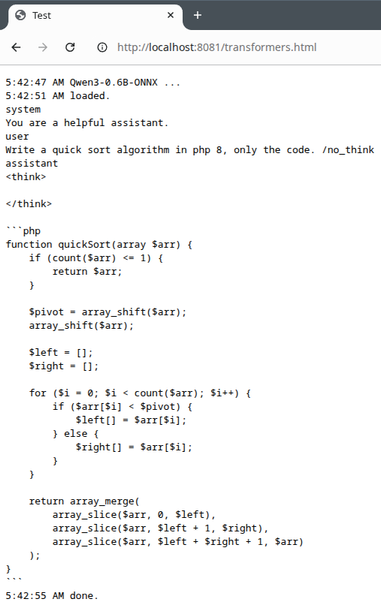

Models can now run entirely inside the browser using Transformers.js, Vulkan and WebGPU (slower than llama.cpp, but already usable).
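
A rough sketch of how a page might decide whether to use WebGPU before loading a model. The helper names `hasWebGpu` and `pickDevice` and the `NavigatorLike` type are made up for illustration and are not taken from the slides; the commented usage assumes the `device` option of the Transformers.js `pipeline` function, with the model id left as a placeholder.

```typescript
// Minimal shape of the parts of `navigator` this sketch touches (hypothetical type).
interface GpuLike {
  requestAdapter(): Promise<unknown | null>;
}
interface NavigatorLike {
  gpu?: GpuLike;
}

type Device = "webgpu" | "wasm";

// True if the browser exposes the WebGPU entry point at all.
// This alone does not guarantee that a usable adapter exists.
function hasWebGpu(nav: NavigatorLike | undefined): boolean {
  return nav !== undefined && nav.gpu !== undefined;
}

// Ask for an adapter; fall back to the slower WASM backend if none is available.
async function pickDevice(nav: NavigatorLike | undefined): Promise<Device> {
  if (nav === undefined || nav.gpu === undefined) return "wasm";
  try {
    const adapter = await nav.gpu.requestAdapter();
    return adapter ? "webgpu" : "wasm";
  } catch {
    return "wasm";
  }
}

// Possible usage in a browser (not executed here; "<model-id>" is a placeholder):
//   import { pipeline } from "@huggingface/transformers";
//   const device = await pickDevice(navigator as NavigatorLike);
//   const generator = await pipeline("text-generation", "<model-id>", { device });
```

Falling back to WASM keeps the page working on browsers without WebGPU, at the cost of speed.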

https://codeberg.org/thbley/talks/raw/branch/main/Run_LLMs_Locally_2026_ThomasBley.pdf

#ai #llm #llamacpp #stablediffusion #gptoss #qwen3 #glm #localai #webgpu

don't expect llm generated code to be correct ↓