#AIIsGoingGreat "This AI agent ran within a GitHub Actions workflow and ran with broad privileges. You might be able to guess where this is heading…"

oooh I got this… AGI? The Singularity? To the store for ice cream?

Also: The #AI workflow was added to "automate first-response to reduce maintainer burden"

One might ask the maintainers how the burden of dealing with this shitshow compares with triaging issues

"Amazon said it was a coincidence that AI tools were involved in the outages, and that there was no evidence that such technology led to more errors than human engineers. “In both instances, this was user error, not AI error,” it said" - Ah yes, the engineers probably prompted it wrong

Original FT story (via @arstechnica, sans paywall) states "In these two cases, the engineers involved did not require a second person’s approval before making changes, as would normally be the case" and quotes Amazon saying it was "a user access control issue, not an AI autonomy issue" because the engineer involved had "broader permissions than expected" 🤨

Lot of different scenarios could be read between the lines there

https://arstechnica.com/ai/2026/02/an-ai-coding-bot-took-down-amazon-web-services/

RE: https://infosec.exchange/@josephcox/116120627475419783

Today's #AIIsGoingGreat aligns perfectly with expectations https://mastodon.social/@josephcox@infosec.exchange/116120627585647890

"Anthropic said the jailbreaking technique used in the Stanford and Yale research was impractical for normal users and would require more effort to extract the text than just purchasing the content" - Ah yes, the old "it's not infringement because I stored it in an inconvenient format" defense, just like my punch-card MP3 collection

RE: https://vmst.io/@jalefkowit/116162471325793098

Turns out, you can lose your job to AI https://mastodon.social/@jalefkowit@vmst.io/116162471447876131

#AIIsGoingGreat 'An AP reporter followed prompts for Spanish-language options and was met with a voice speaking accented English that used Spanish only for numbers. “Your estimated wait time is less than ‘tres’ minutes,” the voice said' - Of course, the real problem isn't so much the AI system per se, but the fact such a monumental screwup made it to production and went unfixed for months

https://apnews.com/article/washington-dol-spanish-accent-ai-3a1b8438a5674c07242a8d48c057d5a3

Washington state hotline callers hear AI voice with Spanish accent

Callers to Washington state’s driver’s license agency who select automated service in Spanish have instead been hearing an AI voice speaking English with a strong Spanish accent. The voice slipped Spanish numbers into key phrases. A recording of the odd-sounding accent drew attention on social media. And one person described the experience as “hilarious,” “absurd” and like a scene out of “Parks and Recreation.” The Department of Licensing has apologized and says it fixed the problem.

I am less concerned over alleged violations of Anthropic's TOS or Trump's retaliatory ban and more concerned the DOD is reportedly using spicy autocomplete for "intelligence purposes, as well as to help select targets"

https://www.theguardian.com/technology/2026/mar/01/claude-anthropic-iran-strikes-us-military

Fedi nerds (including yours truly): I wouldn't let that slop anywhere near my hobby open source project

DOD: As planning for a potential strike in Iran was underway, Maven, powered by Claude, suggested hundreds of targets, issued precise location coordinates, and prioritized those targets according to importance … The AI tools also evaluate a strike after it is initiated

😬

https://wapo.st/4rcM6dC

(email walled)

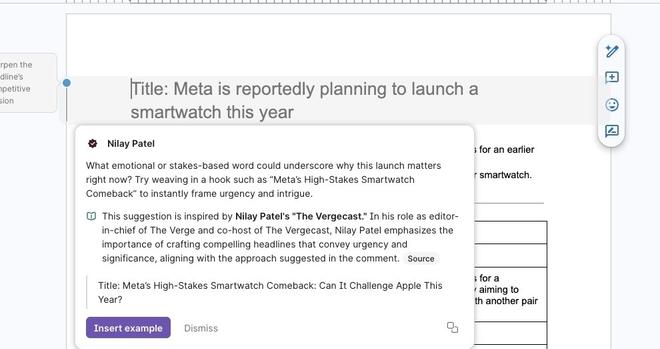

Three years into the AI hype wave, it keeps happening:

1) AI vendor tries to make BS machine look more reliable by having it link sources

2) BS machine BSes the sources

"…its “sources” linked to spammy copies of legit websites, or other archived copies that aren’t the actual source page. Some sources even went to completely unrelated links that weren’t written by the person whose work they were supposedly an example of"

https://www.theverge.com/ai-artificial-intelligence/890921/grammarly-ai-expert-reviews

With a little help from a Ouija board, even the dead ones can opt out, I suppose. I mean, it's as much their voice as the Grammarly version

"you can create a DLP policy to help protect against the use of sensitive information types (SIT), such as credit card numbers, passport identification, or social security numbers in Microsoft Copilot 365 prompts" - So hypothetically, if one were to include random but formally valid SSN or CC values hidden in your emails or documents, would it stop users of this feature from using their microslop on it? 🤔

https://learn.microsoft.com/en-us/purview/dlp-microsoft365-copilot-location-learn-about

Learn about using Microsoft Purview Data Loss Prevention to protect interactions with Microsoft 365 Copilot and Copilot Chat

You can use Microsoft Purview Data Loss Prevention (DLP) targeted at the Microsoft 365 Copilot and Copilot Chat location to help prevent the use of sensitive information types in prompts and files and emails that have sensitivity labels in Microsoft 365 Copilot and Copilot Chat prompts.

In today's #AIIsGoingGreat (ht @platypus*), Microsoft security does a nice writeup of SEO bros abusing "summarize with AI" buttons to inject "memories" into AI assistants… and then goes on to offer mitigations like "be sure to hover links before you click them" and "regularly check your AI memories" … because if the last 30 years of infosec has taught us anything, it's that user vigilance is the first and best line of defense, right?

https://www.microsoft.com/en-us/security/blog/2026/02/10/ai-recommendation-poisoning/

Manipulating AI memory for profit: The rise of AI Recommendation Poisoning | Microsoft Security Blog

That helpful “Summarize with AI” button? It might be secretly manipulating what your AI recommends. Microsoft security researchers have discovered a growing trend of AI memory poisoning attacks used for promotional purposes, a technique we call AI Recommendation Poisoning.

Bonus #AIIsGoingGreat: After assuring us recent incidents were only coincidentally connected to AI*, Amazon "summoned a large group of engineers to a meeting on Tuesday for a “deep dive” into a spate of outages, including incidents tied to the use of AI coding tools"

RE: https://mstdn.social/@rysiek/116211625230754185

Not that humans are immune to screwing up TZ/DST logic of course, but I feel like the odds the offending logic was Claude vomit are pretty high, and the fact the RFO doesn't address this is pretty telling

Also this is a good illustration of why the "buT It WRiTes WorKInG CodE" argument is fairly unpersuasive on its own

https://mastodon.social/@rysiek@mstdn.social/116211625348719630

LOL. But will there be any reflection on how it got this far? Did no one stand up and point out the many obvious reasons this was likely to be a total shit-show, or were they ignored?

I mean, one of their examples is "where is the closest public bathroom that isn’t completely disgusting" and what are the odds Google's LLM has accurate, up-to-date information about this? (and if, in fact, Google does have realtime surveillance of public restrooms, I may have a privacy-related followup)

https://www.theverge.com/tech/893262/google-maps-gemini-ai-ask-maps-immersive-navigation

RE: https://mathstodon.xyz/@mjd/116224397839379268

This will be an interesting test of the AI companies' fine-print "don't use this great amazing world transforming genius machine for anything serious, lol" disclaimer.

My totally uneducated IANAL guess is OpenAI will win, if they don't settle to make it all go away. As much as the disclaimers are obvious CYA, OpenAI hasn't (AFAIK) explicitly promoted ChatGPT for litigation

https://mastodon.social/@mjd@mathstodon.xyz/116224398083939471

Original pro se case https://www.courtlistener.com/docket/69634076/dela-torre-v-nippon-life-insurance-company-of-america/ which as far as I can tell seems to have effectively ended with the plaintiff agreeing to arbitration and not being sanctioned into oblivion

Case against OpenAI

https://www.courtlistener.com/docket/72365583/nippon-life-insurance-company-of-america-v-openai-foundation/

RE: https://infosec.exchange/@josephcox/116256386324754543

Shot: "Kantor told 404 Media that artificial intelligence is writing more than half the app’s code these days"

Chaser:

https://mastodon.social/@josephcox@infosec.exchange/116256386410352613

I do wonder though, does anyone involved actually want it or think it will work, or does it exist purely for management to have an ✨AI story?

https://www.404media.co/tinder-plans-to-let-ai-scan-your-camera-roll/

"The leak, which Meta confirmed, happened when an employee asked for guidance on an engineering problem on an internal forum. An AI agent responded with a solution, which the employee implemented – causing a large amount of sensitive user and company data to be exposed to its engineers for two hours" - I would like to see a description of what happened not filtered through generalist press…

https://www.theverge.com/ai-artificial-intelligence/897528/meta-rogue-ai-agent-security-incident

#AI takes another journalism job: https://www.theguardian.com/technology/2026/mar/20/mediahuis-suspends-senior-journalist-over-ai-generated-quotes

🤔 #AGI… Artificial Gross Incompetence? Another Grift Industry? A Giant Inferno?

Grammarly dude is very quick to say their "expert review" impersonation feature was a bad feature created by a small team that he wasn't involved with and how they already killed it before the lawsuit was filed, but very much less keen to concede that attributing slop to people without permission or compensation is just a shitty thing to do

Who decided to (mis)appropriate CQ from hams when "Slop Overflow" was right there?!

(reposting in the correct thread)

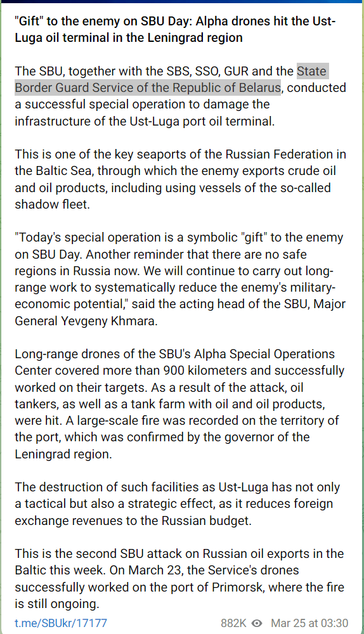

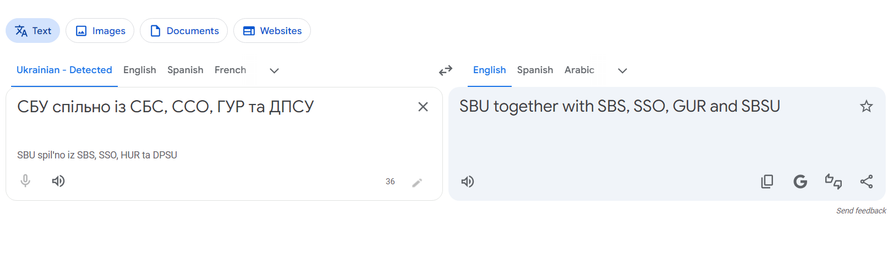

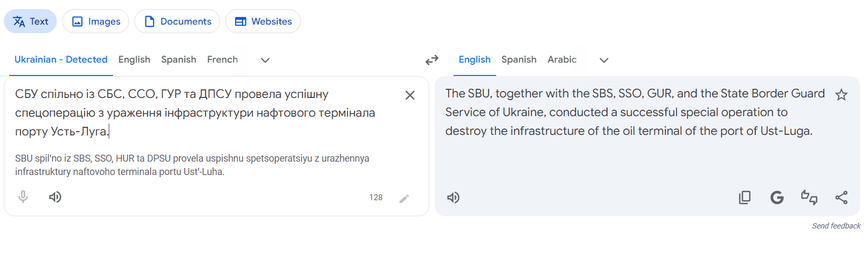

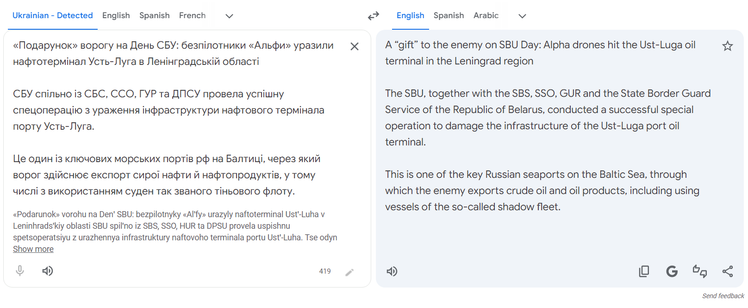

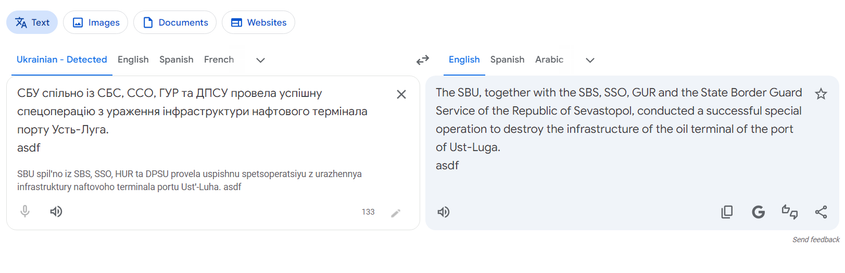

So I thought this was SBU trolling, given the very strong indications Ukrainian drones *have* gone through Belarusian airspace in the attacks mentioned, but on closer inspection, it appears Google decided to translate "ДПСУ" as "State Border Guard Service of the Republic of Belarus" 😬 . Oddly, translating just the paragraph gets it right. This seems like a very #LLM-y failure mode

https://www.404media.co/wikipedia-bans-ai-generated-content/

Also the earlier story about AI translations sure sounds familiar: "implemented new policies and restricted a number of contributors who were paid to use AI to translate existing Wikipedia articles into other languages after they discovered these AI translations added AI “hallucinations,” or errors, to the resulting article"

https://www.404media.co/ai-translations-are-adding-hallucinations-to-wikipedia-articles/

#AIIsGoingGreat… so great it's opening up a whole new branch of psychiatry: "There seem to be three common delusions in the cases Brisson has encountered. The most frequent is the belief that they have created the first conscious AI. The second is a conviction that they have stumbled upon a major breakthrough in their field of work or interest and are going to make millions. The third relates to spirituality and the belief that they are speaking directly to God"

https://www.theguardian.com/lifeandstyle/2026/mar/26/ai-chatbot-users-lives-wrecked-by-delusion

Bonus #AIIsGoingGreat (HT @davidgerard*) I was joking about collateralized GPU obligations**, but Quinn Emanuel Urquhart & Sullivan, LLP ain't: "This note surveys the major financing mechanics—direct loans, SPV structures, securitizations, and GPU-collateralized facilities—and identifies nine categories of emerging litigation risk"

https://www.quinnemanuel.com/the-firm/publications/client-alert-emerging-litigation-risks-in-financing-ai-data-centers-boom/

* https://mastodon.social/@davidgerard@circumstances.run/116320136755099444

** https://mastodon.social/@reedmideke/115080228685202839

Yet more #AIIsGoingGreat: Dude sets a slop bot loose on Wikipedia and then lectures them about being "constructive", despite unapproved bots being prohibited by longstanding policy: "They probably should have used this more as a learning experience because this type of AI agent interaction is about to become the new normal, and they will need more constructive ways of working with them"

More (HT @nora*) "The worst thing that happens is he gets banned and something gets taken down. The best thing that happens is he actually contributes some useful things to Wikipedia … I was real surprised by the reaction, because I just kind of assumed that there [were] a lot of agents that were potentially contributing to Wikipedia at this point" - Dude just incapable of understanding that other people might not want to clean up his shit

* https://mastodon.social/@[email protected]e/116319649387305289

Today's #AIIsGoingGreat is amazing, revolutionary technology, created at vast expense, which will reshape the global economy and virtually everything we do. Get on board or be left behind*

https://www.microsoft.com/en-us/microsoft-copilot/for-individuals/termsofuse

* for entertainment purposes only

(HT @mhoye https://mastodon.social/@mhoye@cosocial.ca/116325416135307490)