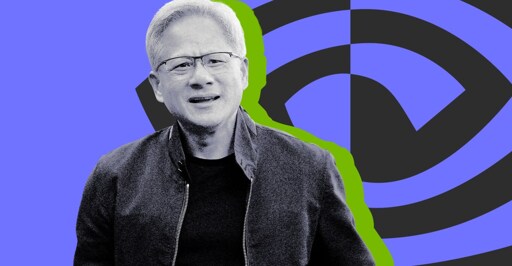

Nvidia CEO Jensen Huang says ‘I think we’ve achieved AGI’

I only have a rather high-level understanding of current AI models, but I don’t see any way for the current generation of LLMs to actually be intelligent or conscious.

They’re entirely stateless, once-through models: any activity in the model that could be remotely considered “thought” is completely lost the moment the model outputs a token. Then it starts over fresh for the next token with nothing but the previous inputs and outputs (the context window) to work with.
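To make the "once-through" point concrete, here is a toy sketch of that generation loop. It is not a real model: `toy_next_token` is a hypothetical stand-in for a forward pass, and the only assumption that matters is that it is a pure function of the context.

```python
from typing import Callable, List


def toy_next_token(context: List[str]) -> str:
    """Hypothetical stand-in for a model's forward pass.

    It is a pure function of the context: nothing is remembered between calls.
    """
    # Pretend "internal activity". This value is local and is thrown away
    # as soon as the function returns.
    hidden = sum(len(tok) for tok in context)
    vocab = ["the", "cat", "sat", "down", "."]
    return vocab[hidden % len(vocab)]


def generate(
    prompt: List[str],
    steps: int,
    next_token: Callable[[List[str]], str] = toy_next_token,
) -> List[str]:
    """Autoregressive loop: the token list is the only thing carried forward."""
    context = list(prompt)
    for _ in range(steps):
        token = next_token(context)  # starts from scratch on every step
        context.append(token)        # only the emitted token survives
    return context


print(generate(["the"], 3))
```

Real inference engines usually cache attention keys and values so they don't recompute them, but that cache is derived from the same tokens, so it doesn't change the picture in this sketch.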

That’s why it’s so stupid to ask an LLM “what were you thinking”, because even it doesn’t know! All it’s going to do is look at what it spat out last and hallucinate a reasonable-sounding answer.

That’s what an LLM is, a database of words using vectors.

You’re still limited by the context window in your example. Giving it another source of information doesn’t do anything other than give it more context.

If you prompted an LLM to review all of its database entries, generate a new response based on that data, then save that output to the database and repeat at regular intervals, I could see calling that a kind of thinking.

That’s kind of what the current agentic AI products like Claude Code do. The problem is context rot. When the context window fills up, the model loses the ability to distinguish between what information is important and what’s not, and it inevitably starts to hallucinate.

The current fixes are to prune irrelevant information from the context window, use sub-agents with their own context windows, or just occasionally start over from scratch. They’ve also developed conventional AGENTS.md and CLAUDE.md files where you can store long-term context and basically “advice” for the model, which is automatically read into the context window.
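A rough sketch of the pruning idea, assuming a made-up policy: always keep the long-term notes (the kind of text you would put in AGENTS.md or CLAUDE.md) and then keep only the most recent messages that fit a token budget. `count_tokens` and `build_context` are hypothetical helpers, and word count is just a crude proxy for a real tokenizer. This is not how any particular product does it.

```python
from typing import List


def count_tokens(text: str) -> int:
    """Crude proxy for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def build_context(notes: str, history: List[str], budget: int) -> List[str]:
    """Keep the notes, then the newest messages that still fit the budget."""
    remaining = budget - count_tokens(notes)
    kept: List[str] = []
    for msg in reversed(history):  # walk from newest to oldest
        cost = count_tokens(msg)
        if cost > remaining:
            break                  # everything older is pruned
        kept.append(msg)
        remaining -= cost
    return [notes] + list(reversed(kept))


notes = "always run tests"
history = ["old message one two three", "mid message", "new message here"]
print(build_context(notes, history, budget=9))
```

With a budget of 9 "tokens", the oldest message gets dropped while the notes and the two newest messages stay.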

However, I think an AGI inherently would need to be able to store that state internally, to have memory circuits, and “consciousness” circuits that are connected in a loop so it can work on its own internally encoded context. And ideally it would be able to modify its own weights and connections to “learn” in real time.

The problem is that would not scale to current usage because you’d need to store all that internal state, including potentially a unique copy of the model, for every user. And the companies wouldn’t want that because they’d be giving up control over the model’s outputs since they’d have no feasible way to supervise the learning process.

The size of the context window is fixed in the structure of the model. LLMs are still at their core artificial neural networks, so an analogy to biology might be helpful.

Think of the input layer of the model like the retinas in your eyes. Each token in the context window, after embedding (i.e. conversion to a series of numbers, because ofc it’s just all math under the hood), is fed to a certain set of input neurons, just like the rods and cones in your retina capture light and convert it to electrical signals, which are passed to neurons in your optic nerve, which connect to neurons in your visual cortex, each layer along the way processing and analyzing the signal.

The number of tokens in the context window is directly proportional to the number of neurons in the input layer of the model. To make the context window bigger, you have to add more neurons to the input layer, but that quickly results in diminishing returns without adding more neurons to the inner layers to be able to process the extra information. Ultimately, you have to make the whole model larger, which means more parameters, which means more data to store and more processing power per prompt.

Training data isn’t stored in the model. The model processes that data and uses it to adjust the weights and biases that make up its parameters (usually several billion to a hundred billion or more for commercial models), which are spread across many layers and attention heads. These weights and the architecture are what get fixed in the model once training ends.

Inferencing is what happens when the model generates text from an input, and at this point the weights are frozen, so it doesn’t actually retain all that information it was trained on. The context window refers to how many tokens (words or pieces of words) it can hold in its memory at a time.

For every token that it processes, it runs a series of calculations on embedded vectors and passes them through several layers in which they’re considered against the context of all the other tokens in the context window. This involves matrix multiplication and is very compute-heavy. Think like 1-4GB of RAM for every billion parameters, depending on the numeric precision, plus several more GB of RAM for the context window. There’s just no way it would be able to hold its entire training dataset in RAM at a time.
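The 1-4GB figure is just bytes per parameter: 4 bytes at 32-bit floats, 2 at 16-bit, 1 at 8-bit. The sketch below does that arithmetic, plus a back-of-the-envelope estimate for the attention key/value cache that holds the context window. The model shape in the example (32 layers, 32 KV heads, head size 128, 4096 tokens) is an illustrative assumption, not any specific product.

```python
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}


def weight_memory_gb(n_params: float, dtype: str) -> float:
    """Memory for the weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * BYTES_PER_PARAM[dtype] / 1e9


def kv_cache_gb(n_layers: int, n_kv_heads: int, head_dim: int,
                seq_len: int, dtype: str) -> float:
    """Memory for cached keys and values over seq_len tokens, in GB.

    The factor of 2 is one tensor for keys and one for values per layer.
    """
    n_bytes = 2 * n_layers * n_kv_heads * head_dim * seq_len * BYTES_PER_PARAM[dtype]
    return n_bytes / 1e9


for dtype in ("fp32", "fp16", "int8"):
    print(dtype, weight_memory_gb(1e9, dtype), "GB per billion parameters")

# Hypothetical model shape, 4096-token context, 16-bit cache.
print(round(kv_cache_gb(32, 32, 128, 4096, "fp16"), 2), "GB for the context")
```

For that hypothetical shape the cache comes out to roughly 2GB, which is in line with "several more GB" once the context gets longer.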

You would need to integrate retrieval-augmented generation to fetch the relevant data into the context window before generating a response, but that’s not at all the same as containing all that knowledge in a stateful manner.
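For anyone unfamiliar, here is a minimal sketch of what retrieval-augmented generation boils down to: score stored documents against the query, then paste the best match into the prompt. Real systems use learned embeddings and a vector index; this toy version swaps in bag-of-words cosine similarity, and `retrieve` and `build_prompt` are hypothetical names.

```python
import math
from collections import Counter
from typing import List


def bow(text: str) -> Counter:
    """Bag-of-words vector: word -> count."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Return the k documents most similar to the query."""
    q = bow(query)
    return sorted(docs, key=lambda d: cosine(q, bow(d)), reverse=True)[:k]


def build_prompt(query: str, docs: List[str], k: int = 1) -> str:
    """Put the retrieved text into the context ahead of the question."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion: {query}"


docs = [
    "the eiffel tower is in paris",
    "python is a programming language",
]
print(build_prompt("where is the eiffel tower", docs))
```

Note that the retrieved text only ever lands in the prompt. Nothing about the model itself changes, which is the point being made above.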

The conversion of the output to tokens inherently loses a lot of the information extracted by the model and any intermediate state it has synthesized (what it “thinks” of the input).
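A small illustration of that loss, assuming greedy decoding (picking the single most likely token; real systems often sample instead). The model's last step produces a whole probability distribution, but only one token comes out, so a near coin-flip and a near certainty can look identical afterwards. The logits and vocabulary here are made up.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Turn raw scores into probabilities (shifted by the max for stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def pick_token(logits: List[float], vocab: List[str]) -> str:
    """Greedy decoding: keep only the most likely token, discard the rest."""
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return vocab[best]


vocab = ["yes", "no", "maybe"]
unsure = [2.0, 1.9, 0.1]     # "yes" barely beats "no"
confident = [9.0, 0.1, 0.1]  # "yes" is almost certain

print(softmax(unsure), pick_token(unsure, vocab))
print(softmax(confident), pick_token(confident, vocab))
```

Both cases emit the same token, and the difference in confidence is gone from the text that gets fed back in.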

Until the model is able to retain its own internal state and able to integrate new information into that state as it receives it, all it will ever be able to do is try to fill in the blanks.

Not sure what this internal state you are referring to is. Are you talking about all the values that come out of each step of the computations?

As for your second half… integration. That is a tricky one. Because the inputs it is getting aren’t necessarily correct. So that can do more harm than good. The current loop for integrating new data is too long though. They need to reduce that down to like an hour so it can absorb current events at least. And ideally they would be able to take a conversation and identify what worked and what didn’t. Then integrate what did. This is what was mentioned about CLAUDE.md files and such that essentially keep track of what was learned. There is room for improvement there, as I seem to have to tell the model to go read those or it doesn’t.

Not sure what this internal state you are referring to is. Are you talking about all the values that come out of each step of the computations?

It would need to be able to form memories like real brains do, by creating new connections between neurons and adjusting their weights in real time in response to stimuli, and having those connections persist. I think that’s a prerequisite to models that are capable of higher-level reasoning and understanding. But then you would need to store those changes to the model for each user, which would be tens or hundreds of gigabytes.

These current once-through LLMs don’t have time to properly digest what they’re looking at, because they essentially forget everything once they output a token. I don’t think you can make up for that by spitting some tokens out to a file and reading them back in, because it still has to be human-readable and coherent. That transformation is inherently lossy.

This is basically what I’m talking about:

But for every single token the LLM outputs. The fact that it’s allowed to take notes is a mitigation for this context loss, not a silver bullet.