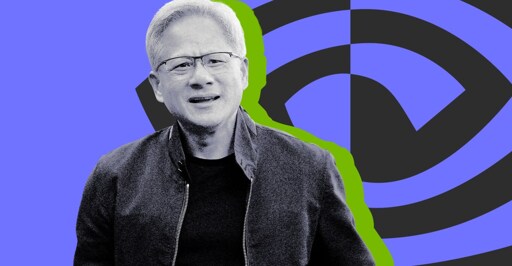

Nvidia CEO Jensen Huang says ‘I think we’ve achieved AGI’

I only have a rather high-level understanding of current AI models, but I don’t see any way for the current generation of LLMs to actually be intelligent or conscious.

They’re entirely stateless, once-through models: any activity in the model that could be remotely considered “thought” is completely lost the moment the model outputs a token. Then it starts over fresh for the next token with nothing but the previous inputs and outputs (the context window) to work with.
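To make the loop described above concrete, here is a minimal toy sketch in Python. It is not a real transformer: `toy_model`, `VOCAB`, and the fake `hidden_state` are all hypothetical stand-ins invented for illustration. The only point is the shape of the loop: each step sees nothing but the token list, and whatever was computed along the way is dropped once a token is chosen.

```python
import random

# Hypothetical tiny vocabulary, for illustration only.
VOCAB = ["the", "cat", "sat", "on", "mat", "."]

def toy_model(context):
    """Stand-in for one forward pass over the whole context.

    Returns (hidden_state, next_token). The hidden state is derived
    purely from the tokens in `context`, nothing else.
    """
    rng = random.Random(" ".join(context))  # deterministic per context
    hidden_state = [rng.random() for _ in range(4)]  # fake "activations"
    next_token = VOCAB[int(hidden_state[0] * len(VOCAB))]
    return hidden_state, next_token

def generate(prompt, steps):
    """Autoregressive loop: only the token list survives between steps."""
    context = list(prompt)
    for _ in range(steps):
        hidden_state, token = toy_model(context)
        context.append(token)  # the single token is all that is kept
        del hidden_state       # the intermediate state is thrown away
    return context

print(generate(["the"], 5))
```

Because the fake state is a pure function of the context, running `generate` twice on the same prompt gives the same output, which mirrors the "nothing but the context window" description.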

That’s why it’s so stupid to ask an LLM “what were you thinking”, because even it doesn’t know! All it’s going to do is look at what it spat out last and hallucinate a reasonable-sounding answer.

The conversion of the output to tokens inherently loses a lot of the information extracted by the model and any intermediate state it has synthesized (what it “thinks” of the input).

Until the model can retain its own internal state and integrate new information into that state as it receives it, all it will ever be able to do is try to fill in the blanks.

Not sure what this internal state you are referring to is. Are you talking about all the values that come out of each step of the computations?

As for your second half… integration. That is a tricky one, because the inputs it is getting aren’t necessarily correct, so integrating them can do more harm than good. The current loop for integrating new data is too long though. They need to reduce that down to like an hour so it can absorb current events at least. And ideally they would be able to take a conversation, identify what worked and what didn’t, then integrate what did. This is what was mentioned about CLAUDE.md files and such, which essentially keep track of what was learned. There is room for improvement there, as I seem to have to tell the model to go read those or it doesn’t.

> Not sure what this internal state you are referring to is. Are you talking about all the values that come out of each step of the computations?

It would need to be able to form memories like real brains do, by creating new connections between neurons and adjusting their weights in real time in response to stimuli, and having those connections persist. I think that’s a prerequisite to models that are capable of higher-level reasoning and understanding. But then you would need to store those changes to the model for each user, which would be tens or hundreds of gigabytes.
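As a rough back-of-the-envelope check on the "tens or hundreds of gigabytes" figure, a full per-user copy of the weights is roughly parameter count times bytes per parameter. The model sizes below (7 and 70 billion parameters) and the 16-bit precision are assumptions picked for illustration, not numbers from any particular product.

```python
def weights_size_gb(num_params, bytes_per_param=2):
    """Approximate size of a full weight copy in gigabytes (1 GB = 1e9 bytes).

    bytes_per_param=2 assumes 16-bit weights; this is an assumption.
    """
    return num_params * bytes_per_param / 1e9

# Hypothetical model sizes, chosen only to show the order of magnitude.
for params in (7_000_000_000, 70_000_000_000):
    print(f"{params:,} params -> {weights_size_gb(params):.0f} GB per user")
```

Storing only the changes rather than a full copy could be smaller, but how much smaller depends on how many weights the updates touch.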

These current once-through LLMs don’t have time to properly digest what they’re looking at, because they essentially forget everything once they output a token. I don’t think you can make up for that by spitting some tokens out to a file and reading them back in, because it still has to be human-readable and coherent. That transformation is inherently lossy.

This is basically what I’m talking about:

But for every single token the LLM outputs. The fact that it’s allowed to take notes is a mitigation for this context loss, not a silver bullet.

They also have vector DBs. My understanding is that they are closer to what you are talking about as far as internal state. But they still don’t allow the AI to update the vector DB in real time. Mainly they worry that live updates could be abused, similar to how people are easily manipulated into believing BS. So they are more careful about what they feed it to update. I do wonder how they generate those vector DBs, and if that is something users could utilize locally.
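On the question of how a vector DB gets built and whether it could run locally, here is a minimal sketch of the general idea: turn each piece of text into a vector, store it, and answer a query by returning the stored text whose vector is most similar. Everything here is a simplified assumption. The `embed` function just counts words, whereas real systems use a learned embedding model, and `ToyVectorStore` is a made-up class, not any library's API.

```python
import math
from collections import Counter

def embed(text):
    """Toy embedding: a sparse word-count vector (stand-in for a learned model)."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

class ToyVectorStore:
    """Hypothetical in-memory store: a list of (text, vector) pairs."""

    def __init__(self):
        self.items = []

    def add(self, text):
        self.items.append((text, embed(text)))

    def search(self, query, k=1):
        """Return the k stored texts most similar to the query."""
        q = embed(query)
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [text for text, _ in ranked[:k]]

store = ToyVectorStore()
store.add("the cat sat on the mat")
store.add("gpu prices are rising")
print(store.search("cat on a mat"))
```

Note that `add` can be called at any time here, so "real-time updates" are trivial in a toy like this; the caution described above is about what gets written into the store, not whether writing is technically possible.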