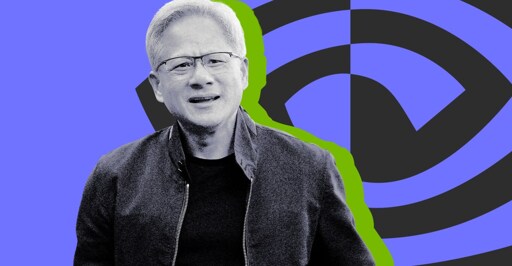

Nvidia CEO Jensen Huang says ‘I think we’ve achieved AGI’

I only have a fairly high-level understanding of current AI models, but I don’t see any way for the current generation of LLMs to actually be intelligent or conscious.

They’re entirely stateless, once-through models: any activity in the model that could be remotely considered “thought” is completely lost the moment the model outputs a token. Then it starts over fresh for the next token with nothing but the previous inputs and outputs (the context window) to work with.
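To make the "once-through" point concrete, here is a rough sketch of a generation loop. This is a toy, not how any real model is implemented: `toy_next_token` and its five-word vocabulary are made up for illustration and stand in for a full forward pass. The only thing it is meant to show is that each step is a function of the context alone, and that whatever was computed inside the call is gone once the token is returned.

```python
from typing import List


def toy_next_token(context: List[str]) -> str:
    """Hypothetical stand-in for one forward pass.

    It is a pure function of the context: same context in, same token out.
    """
    # Intermediate "activity" inside the call. It is a local variable,
    # so it is discarded as soon as the function returns.
    scratch = [len(tok) for tok in context]
    vocab = ["the", "cat", "sat", "down", "."]  # made-up vocabulary
    return vocab[sum(scratch) % len(vocab)]


def generate(prompt: List[str], steps: int) -> List[str]:
    """Append `steps` tokens, one at a time, to a copy of the prompt."""
    context = list(prompt)  # copy so the caller's prompt is not mutated
    for _ in range(steps):
        # Only the emitted token survives each call; it is the sole
        # thing carried forward into the next step.
        token = toy_next_token(context)
        context.append(token)
    return context


if __name__ == "__main__":
    print(generate(["hello"], 3))
```

Nothing is carried between iterations except `context`, which is the analogue of the context window in the description above.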

That’s why it’s so stupid to ask an LLM “what were you thinking”, because even it doesn’t know! All it’s going to do is look at what it spat out last and hallucinate a reasonable-sounding answer.

That’s what an LLM is: a database of words using vectors.

You’re still limited by the context window in your example; giving it another source of information doesn’t do anything other than give it more context.
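A rough sketch of what I mean, with made-up names (`visible_context`, `window`) and a deliberately naive "keep the most recent tokens" policy, which is an assumption for illustration and not how any particular product handles it: extra sources just get concatenated into the same token list, and the same cap applies to the result.

```python
from typing import List


def visible_context(history: List[str], retrieved: List[str], window: int) -> List[str]:
    """Hypothetical helper: merge retrieved text with the conversation,
    then keep only the most recent `window` tokens."""
    if window <= 0:
        return []
    combined = retrieved + history  # extra source is just more tokens
    return combined[-window:]       # the same limit applies to all of it


if __name__ == "__main__":
    print(visible_context(["a", "b", "c"], ["x", "y"], 4))
```

With a window of 4, one of the two retrieved tokens already falls off the front. The extra source changes what is in the window, not how much fits.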