Once again I am heartbroken to remind you that the Dunning-Kruger effect is probably not real:

https://www.mcgill.ca/oss/article/critical-thinking/dunning-kruger-effect-probably-not-real

Like Freudian psychology, Hardin's tragedy of the commons, and any number of other popular pseudoscientific narratives, it caters to our preconceptions and makes for entertaining, easy-to-retell stories, but it's also... not true.

And - again, I am entirely saddened by this - that means that if we keep using these metaphors we're legitimizing the false ideas behind them.

The Dunning-Kruger Effect Is Probably Not Real

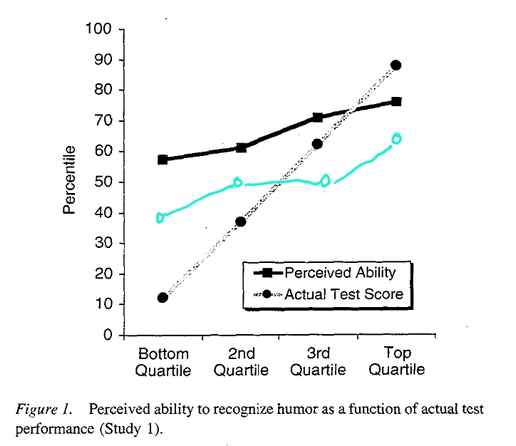

I want the Dunning-Kruger effect to be real. First described in a seminal 1999 paper by David Dunning and Justin Kruger, this effect has been the darling of journalists who want to explain why dumb people don’t know they’re dumb. There’s even video of a fantastic pastiche of Turandot’s famous aria, Nessun dorma, explaining the Dunning-Kruger effect. “They don’t know,” the opera singer belts out at the climax, “that they don’t know.”

I was planning on writing a very short article about the Dunning-Kruger effect and it felt like shooting fish in a barrel. Here’s the effect, how it was discovered, what it means. End of story.

But as I double-checked the academic literature, doubt started to creep in. While trying to understand the criticism that had been leveled at the original study, I fell down a rabbit hole, spoke to a few statistics-minded people, corresponded with Dr. Dunning himself, and tried to understand if our brain really was biased to overstate our competence in activities at which we suck... or if the celebrated effect was just a mirage brought about by the peculiar way in which we can play with numbers.

Have we been overstating our confidence in the Dunning-Kruger effect?

A misunderstood effect

The most important mistake people make about the Dunning-Kruger effect, according to Dr. Dunning, has to do with who falls victim to it. “The effect is about us, not them,” he wrote to me. “The lesson of the effect was always about how we should be humble and cautious about ourselves.”

The Dunning-Kruger effect is not about dumb people. It’s mostly about all of us when it comes to things we are not very competent at.

In a nutshell, the Dunning-Kruger effect was originally defined as a bias in our thinking. If I am terrible at English grammar and am told to answer a quiz testing my knowledge of English grammar, this bias in my thinking would lead me, according to the theory, to believe I would get a higher score than I actually would.
And if I excel at English grammar, the effect dictates I would be likely to slightly underestimate how well I would do. I might predict I would get a 70% score while my actual score would be 90%. But if my actual score was 15% (because I’m terrible at grammar), I might think more highly of myself and predict a score of 60%. This discrepancy is the effect, and it is thought to be due to a specific problem with our brain’s ability to assess its skills.

This is what student participants went through for Dunning and Kruger’s research project in the late 1990s. There were assessments of grammar, of humour, and of logical reasoning. Everyone was asked how well they thought they did and everyone was also graded objectively, and the two were compared. Since then, many studies have been done that have reported this effect in other domains of knowledge.

Dr. Dunning tells me he believes the effect “has more to do with being misinformed rather than uninformed.” If I am asked the boiling point of mercury, it is clear my brain does not hold the answer. But if I am asked what is the capital of Scotland, I may think I know enough to say Glasgow, but it turns out it’s Edinburgh. That’s misinformation and it’s pushing down on that confidence button in my brain.

So case closed, right? On the contrary. In 2016 and 2017, two papers were published in a mathematics journal called Numeracy. In them, the authors argued that the Dunning-Kruger effect was a mirage. And I tend to agree.

The effect is in the noise

The two papers, by Dr. Ed Nuhfer and colleagues, argued that the Dunning-Kruger effect could be replicated by using random data. “We all then believed the [1999] paper was valid,” Dr. Nuhfer told me via email. “The reasoning and argument just made so much sense. We never set out to disprove it; we were even fans of that paper.”

In Dr.
Nuhfer’s own papers, which used both computer-generated data and results from actual people undergoing a science literacy test, his team disproved the claim that most people that are unskilled are unaware of it (“a small number are: we saw about 5-6% that fit that in our data”) and instead showed that both experts and novices underestimate and overestimate their skills with the same frequency. “It’s just that experts do that over a narrower range,” he wrote to me.

Wrapping my brain around all this took weeks. I recruited a husband-and-wife team, Dr. Patrick E. McKnight (from the Department of Psychology at George Mason University, also on the advisory board of Sense About Science and STATS.org) and Dr. Simone C. McKnight (from Global Systems Technologies, Inc.), to help me understand what was going on. Patrick McKnight not only believed in the existence of the Dunning-Kruger effect: he was teaching it to warn his students to be mindful of what they actually knew versus what they thought they knew. But after replicating Dr. Nuhfer’s findings using a different platform (the statistical computing language R instead of Nuhfer’s Microsoft Excel), he became convinced the effect was just an artefact of how the thing that was being measured was indeed measured.

We had long conversations over this as I kept pushing back. As a skeptic, I am easily enticed by stories of the sort “everything you know about this is wrong.” That’s my bias. To overcome it, I kept playing devil’s advocate with the McKnights to make sure we were not forgetting something. Every time I felt my understanding crystallize, doubt would creep in the next day and my discussion with the McKnights would resume. I finally reached a point where I was fairly certain the Dunning-Kruger effect had not been shown to be a bias in our thinking but was just an artefact. Here then is the simplest explanation I have for why the effect appears to be real.
For an effect of human psychology to be real, it cannot be rigorously replicated using random noise. If the human brain was predisposed to choose heads when a coin is flipped, you could compare this to random predictions (heads or tails) made by a computer and see the bias. A human would call more heads than the computer would because the computer is making random bets whereas the human is biased toward heads. With the Dunning-Kruger effect, this is not the case. Random data actually mimics the effect really well.

The effect as originally described in 1999 makes use of a very peculiar type of graph. “This graph, to my knowledge, is quite unusual for most areas of science,” Patrick McKnight told me. In the original experiment, students took a test and were asked to guess their score. Therefore, each student had two data points: the score they thought they got (self-assessment) and the score they actually got (performance). In order to visualize these results, Dunning and Kruger separated everybody into quartiles: those who performed in the bottom 25%, those who scored in the top 25%, and the two quartiles in the middle. For each quartile, the average performance score and the average self-assessed score were plotted. This resulted in the famous Dunning-Kruger graph.

Plotted this way, it looks like those in the bottom 25% thought they did much better than they did, and those in the top 25% underestimated their performance. This observation was thought to be due to the human brain: the unskilled are unaware of it.

But if we remove the human brain from the equation, we get this:

The above Dunning-Kruger graph was created by Patrick McKnight using computer-generated results for both self-assessment and performance. The numbers were random. There was no bias in the coding that would lead these fictitious students to guess they had done really well when their actual score was very low.
And yet we can see that the two lines look eerily similar to those of Dunning and Kruger’s seminal experiment. A similar simulation was done by Dr. Phillip Ackerman and colleagues three years after the original Dunning-Kruger paper, and the results were similar.

Measuring someone’s perception of anything, including their own skills, is fraught with difficulties. How well I think I did on my test today could change if the whole thing was done tomorrow, when my mood might differ and my self-confidence may waver. This measurement of self-assessment is thus, to a degree, unreliable. This unreliability, sometimes massive, sometimes not, means that any true psychological effect that does exist will be measured as smaller in the context of an experiment. This is called attenuation due to unreliability. “Scores of books, articles, and chapters highlight the problem with measurement error and attenuated effects,” Patrick McKnight wrote to me. In his simulation with random measurements, the so-called Dunning-Kruger effect actually becomes more visible as the measurement error increases. “We have no instance in the history of scientific discovery,” he continued, “where a finding improves by increasing measurement error. None.”

Breaking the spell

When I plug “Dunning-Kruger effect” into Google News, I get over 8,500 hits from media outlets like The New York Times, New Scientist, and the CBC. So many simply endorse the effect as a real bias of the brain, so it’s no wonder that people are not aware of the academic criticism that has existed since the effect was first published.

It’s not just Dr. Nuhfer and his Numeracy papers. Other academic critics have pointed the finger, for example, at regression to the mean. But as Patrick McKnight points out, regression to the mean occurs when the same measure is taken over time and we track its evolution.
If I take my temperature every morning and one day spike a fever, that same measure will (hopefully) go down the next day and return to its mean value as my fever abates. That’s regression to the mean. But in the context of the Dunning-Kruger effect, nothing is measured over time, and self-assessment and performance are different measures entirely, so regression to the mean should not apply. The unreliability of the self-assessment measurement itself, however, is a strong contender to explain a good chunk of what Dunning, Kruger, and other scientists who have since reported this effect in other contexts were actually describing.

This story is not over. There will undoubtedly be more ink spilled in academic journals over this issue, which is a healthy part of scientific research after all. Studying protons and electrons is relatively easy as these particles don’t have a mind of their own; studying human psychology, by comparison, is much harder because the number of variables being juggled is incredibly high. It is thus really easy for findings in psychology to appear real when they are not.

Are there dumb people who do not realize they are dumb? Sure, but that was never what the Dunning-Kruger effect was about. Are there people who are very confident and arrogant in their ignorance? Absolutely, but here too, Dunning and Kruger did not measure confidence or arrogance back in 1999. There are other effects known to psychologists, like the overconfidence bias and the better-than-average bias (where most car drivers believe themselves to be well above average, which makes no mathematical sense), so if the Dunning-Kruger effect is convincingly shown to be nothing but a mirage, it does not mean the human brain is spotless.

And if researchers continue to believe in the effect in the face of weighty criticism, this is not a paradoxical example of the Dunning-Kruger effect. In the original classic experiments, students received no feedback when making their self-assessment.
It is fair to say researchers are in a different position now.

The words “Dunning-Kruger effect” have been wielded as an incantation by journalists and skeptics alike for years to explain away stupidity and incompetence. It may be time to break that spell.

Take-home message:

- The Dunning-Kruger effect was originally described in 1999 as the observation that people who are terrible at a particular task think they are much better than they are, while people who are very good at it tend to underestimate their competence
- The Dunning-Kruger effect was never about “dumb people not knowing they are dumb” or about “ignorant people being very arrogant and confident in their lack of knowledge.”
- Because the effect can be seen in random, computer-generated data, it may not be a real flaw in our thinking and thus may not really exist

@CrackedScience
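
The random-data argument in the article can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Patrick McKnight's R code or Dr. Nuhfer's Excel work: the function name `simulate_quartiles`, the uniform 0-100 score range, the 10,000 fictitious students and the fixed seed are all made up for the example. Each student gets two independent random numbers, one for performance and one for self-assessment, and the students are then grouped into performance quartiles the way the article describes.

```python
import random
from statistics import mean


def simulate_quartiles(n_students=10_000, seed=0):
    """Return [(mean performance, mean self-assessment), ...] for the four
    performance quartiles, lowest quartile first.

    Assumption for this sketch: both scores are independent draws from a
    uniform distribution on [0, 100], so no bias is built in.
    """
    rng = random.Random(seed)

    # Each fictitious student is a (performance, self_assessment) pair.
    students = [(rng.uniform(0, 100), rng.uniform(0, 100))
                for _ in range(n_students)]

    # Rank students by actual performance, then cut into four equal groups.
    # Any remainder students (if n_students is not divisible by 4) are dropped.
    students.sort(key=lambda pair: pair[0])
    size = n_students // 4

    rows = []
    for q in range(4):
        group = students[q * size:(q + 1) * size]
        rows.append((mean(p for p, _ in group),
                     mean(s for _, s in group)))
    return rows


if __name__ == "__main__":
    for q, (perf, self_est) in enumerate(simulate_quartiles(), start=1):
        print(f"Quartile {q}: performance {perf:5.1f}, "
              f"self-assessment {self_est:5.1f}")
```

Because the two scores are unrelated in this sketch, average self-assessment sits near 50 in every quartile, while average performance rises from about 12.5 in the bottom quartile to about 87.5 in the top one. Plotted as two lines, that gives the familiar picture of the bottom group "overestimating" and the top group "underestimating", even though nothing in the code models a psychological bias.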