(Previous posts on this)

Another reveal: NSToolbar's .flexibleSpace doesn't provide progressive blur by default, but .space does 🤦‍♂️

All in a good day's work.

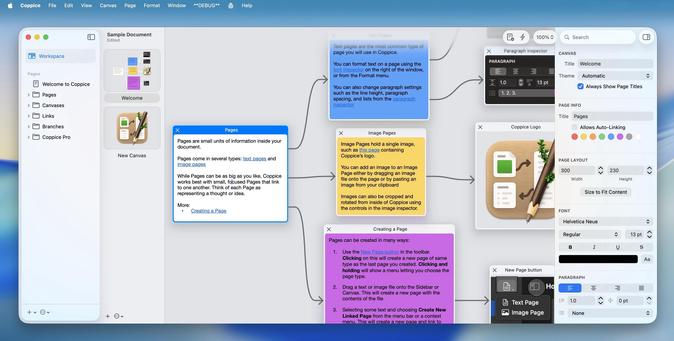

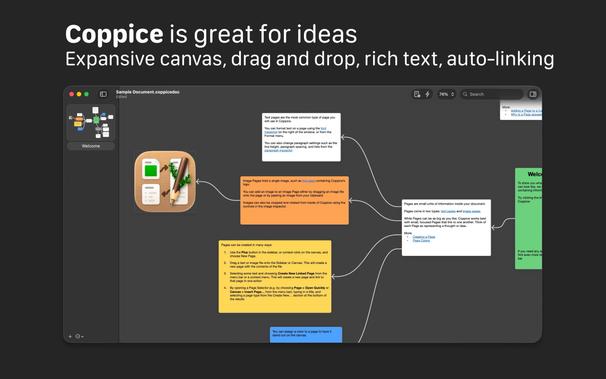

If you want to see how Coppice was built, pilky's dev livestreams are still up on YouTube, with hundreds of hours of AppKit and related discussion. As we approach a year since his passing, and as I prepare a significant update to Coppice, it's kinda nice to revisit them

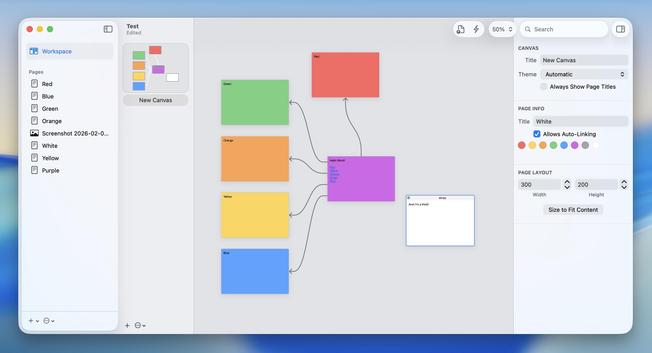

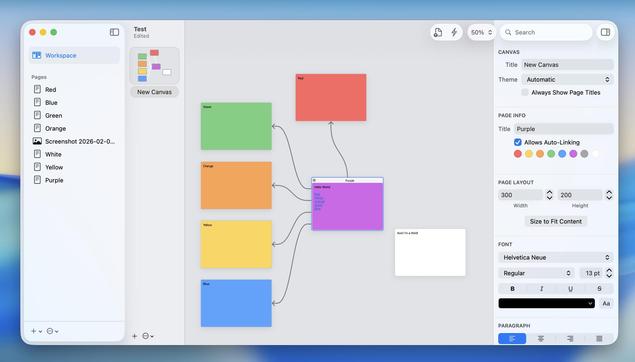

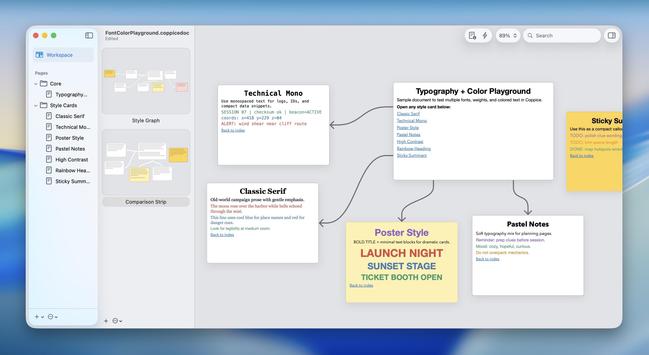

I had often asked for color pages in Coppice, but it was always on the back burner behind a lot of other pressing todo list items. So I figured I may as well dig in now and see if I could do it myself

~several hours later~

Related: IB autolayout can go IN THE BIN 🚮

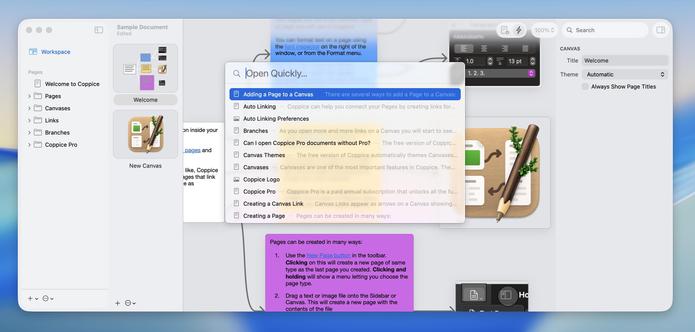

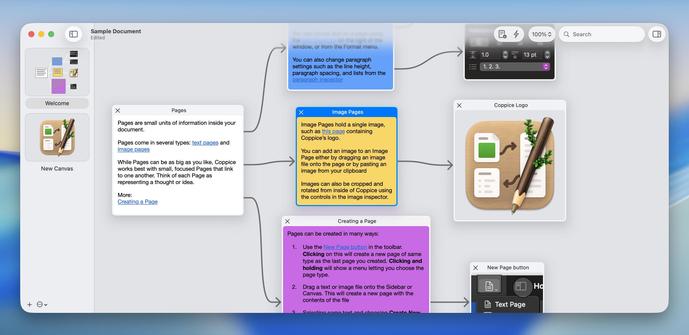

👨‍✈️ What I'm currently working on in Coppice is now available on TestFlight if you're running macOS 26 or newer. I would love some external feedback, as it's a big app with a lot of corners to test and I don't have a pre-existing bug list

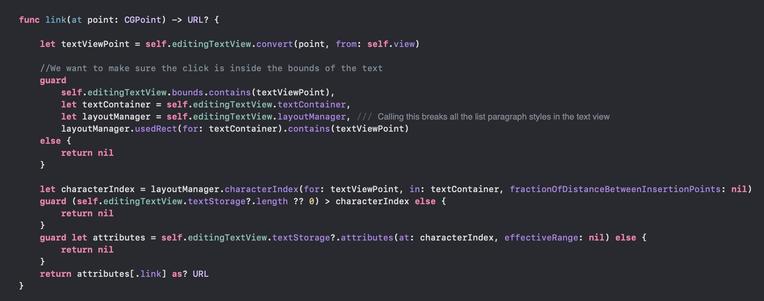

I think I found my critical issue in Coppice, and it's due to NSTextView downgrading from TextKit 2 to TextKit 1 if you access .layoutManager on macOS 26 😅 It isn't triggered by apps built with an older SDK.

It seems like setting (the private) NSTextViewAllowsDowngradeToLayoutManager=NO completely fixes the issue?

This might be a problem I need Apple input on…
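For anyone chasing the same thing, here is a minimal sketch of how the downgrade described above can be observed using public API only. It assumes a plain `NSTextView`; the helper names `isUsingTextKit2` and `observeTextKitDowngrade` are made up for illustration and are not from Coppice. It does not touch the private `NSTextViewAllowsDowngradeToLayoutManager` key.

```swift
import AppKit

// Hypothetical helper: reports whether the text view is still on TextKit 2.
// Reads .textLayoutManager rather than .layoutManager, because touching
// .layoutManager is what triggers the fallback to TextKit 1.
@MainActor
func isUsingTextKit2(_ textView: NSTextView) -> Bool {
    textView.textLayoutManager != nil
}

// Hypothetical helper: logs when the text view is about to fall back to
// NSLayoutManager. Keep the returned token alive for as long as you want
// to observe, then pass it to NotificationCenter.removeObserver(_:).
@MainActor
func observeTextKitDowngrade(of textView: NSTextView) -> NSObjectProtocol {
    NotificationCenter.default.addObserver(
        forName: NSTextView.willSwitchToNSLayoutManagerNotification,
        object: textView,
        queue: .main
    ) { _ in
        // A breakpoint here shows which code path asked for .layoutManager.
        print("NSTextView is about to switch to TextKit 1")
    }
}
```

Setting a breakpoint inside the observer block is usually the quickest way to find the call site that asked for `.layoutManager`.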

Props to Codex for pointing me to the problem location (in this 70Kloc codebase), but actually debugging the issue required digging through AppKit and UIFoundation in Hopper. Codex looped me round in circles with multiple superficial fixes that worked but left the underlying problem in place, so I threw it all out.

AI can do many things, but it can't replace a developer with deep platform knowledge

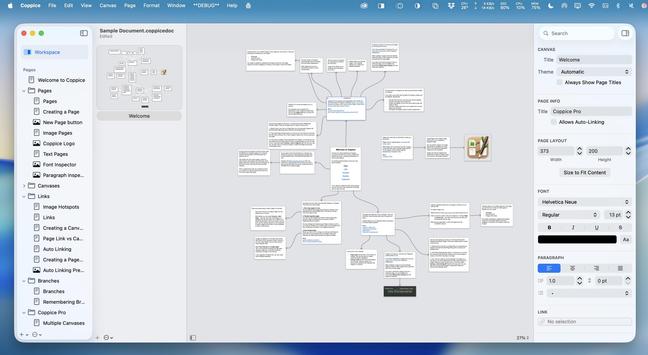

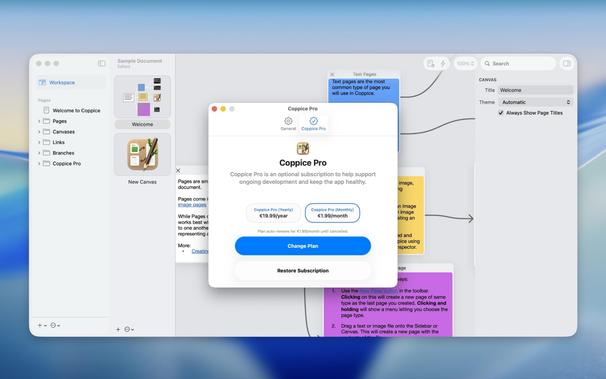

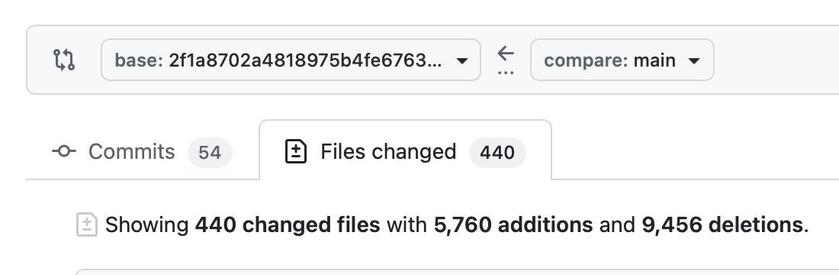

💜 A year ago today we lost one of the kindest, most generous developers in the Mac community, Martin Pilkington (pilky). He was known for many projects over the years, but Coppice was his labor of love. I have been working the past couple of months on rebuilding Coppice for macOS 26, Liquid Glass, and the App Store, and I'm thrilled that today is the day I get to release it into the world, with all of its previously-'Pro' features available for free

pilky had two white whales: one was bringing Coppice to the App Store.

The other was Coppice for iPad.

Hopefully, that's a story for another time

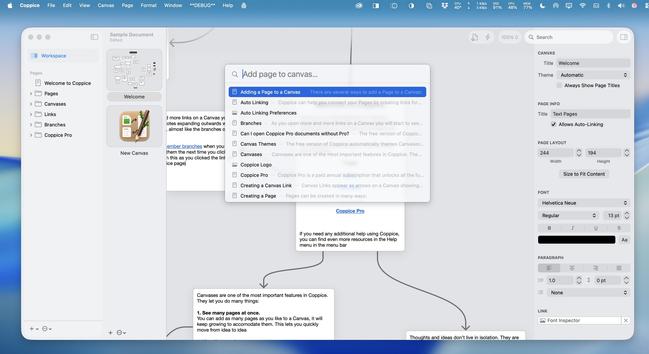

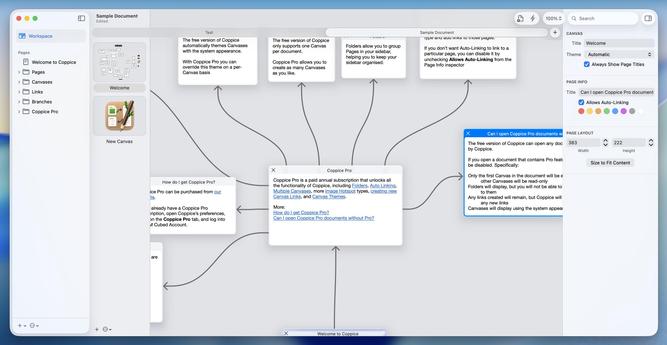

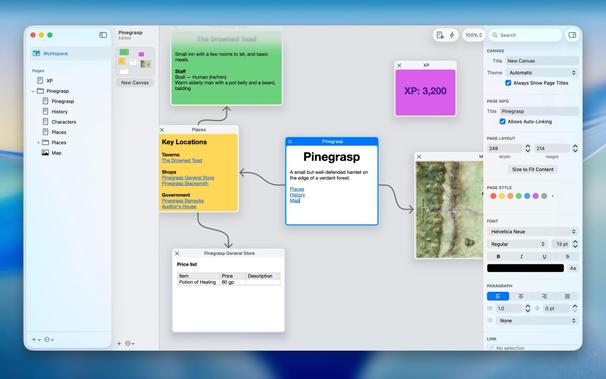

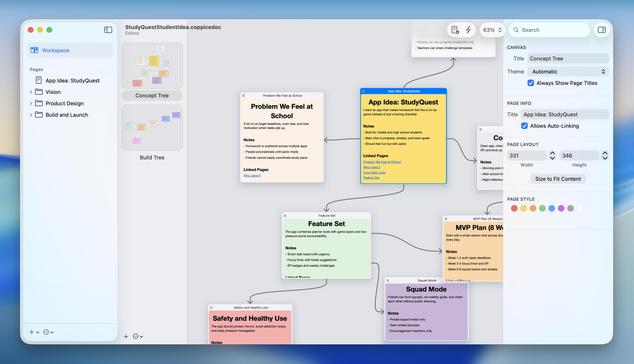

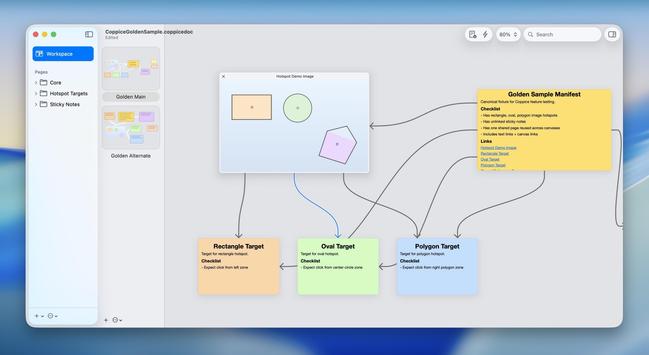

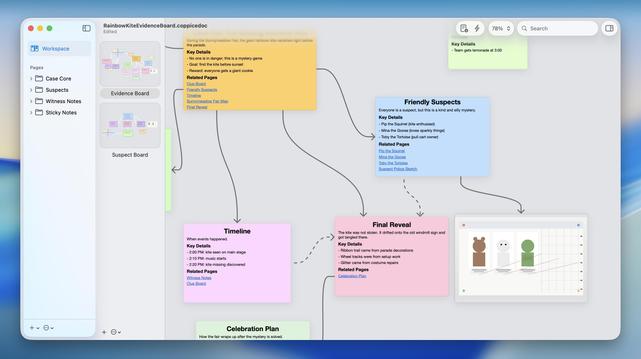

I handed Codex a Coppice document and told it to just figure out the data model, then create a complex document of its own, and it effectively gave me the evidence board meme 😂

Jokes aside, this gives me an incredibly powerful tool for creating sample Coppice documents to test all kinds of things, and for free

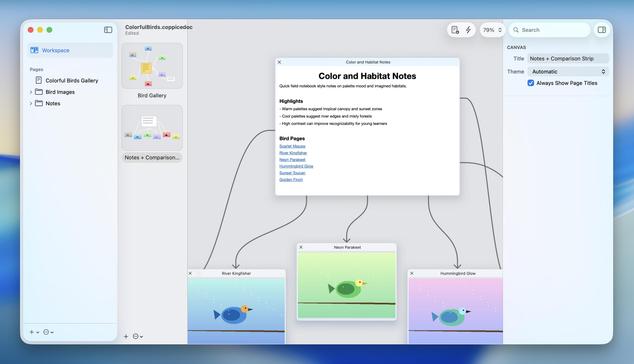

'Codex, insert some pictures of birds for me'

I mean. An attempt was made! 🤣

If Apple Intelligence could perform like the cloud-based models, this whole document-generation idea could be a real feature I could put in the app.

Alas.

(Also this is a ton better than Keynote's $20/mo Creator Studio slide generator, already. Just saying. I'll happily take your money instead 🥲)

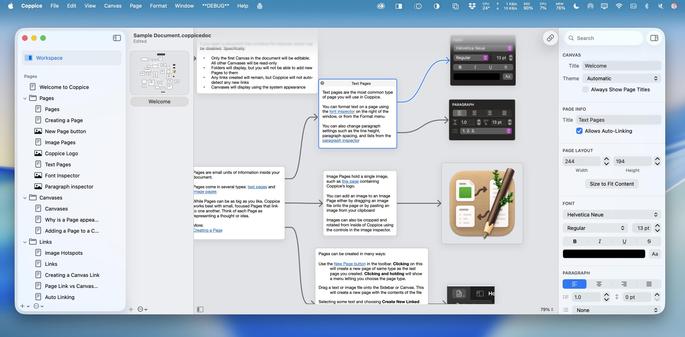

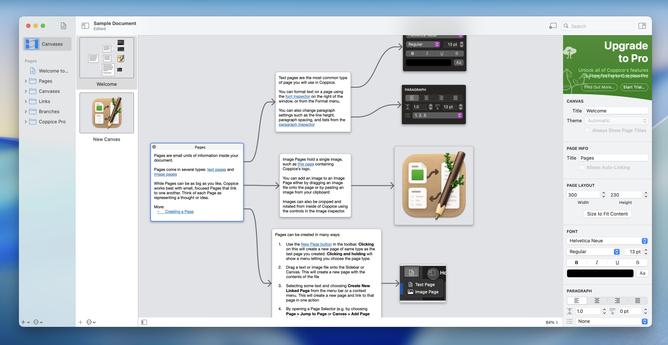

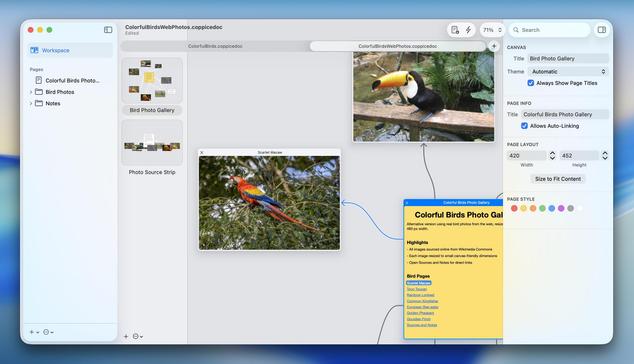

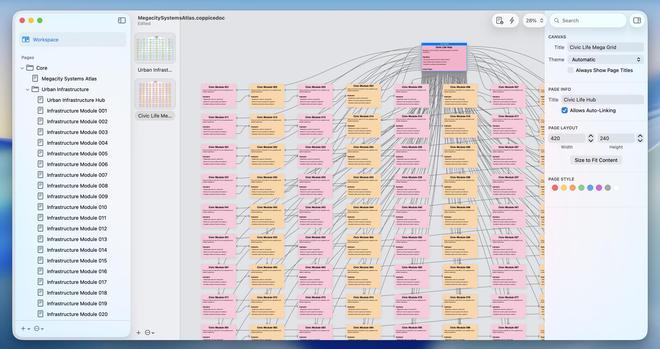

"Make a stress-test document for me"?

No problem.

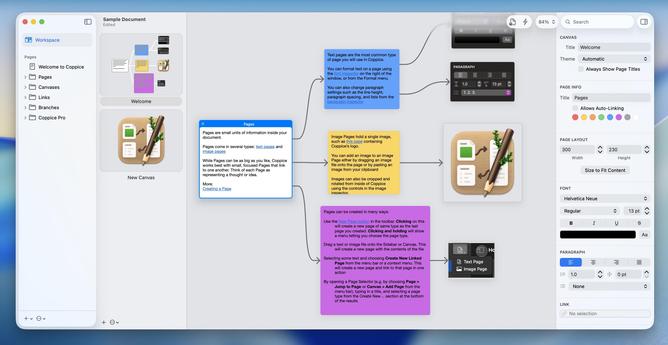

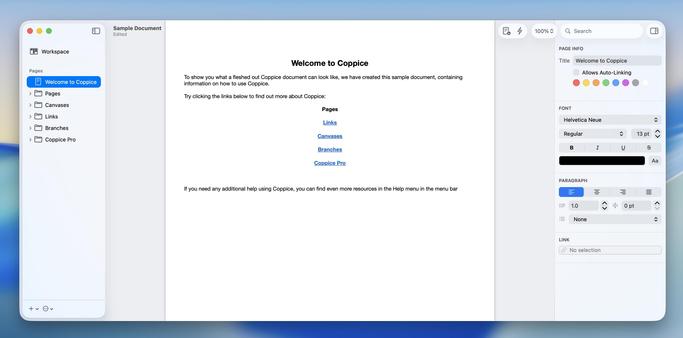

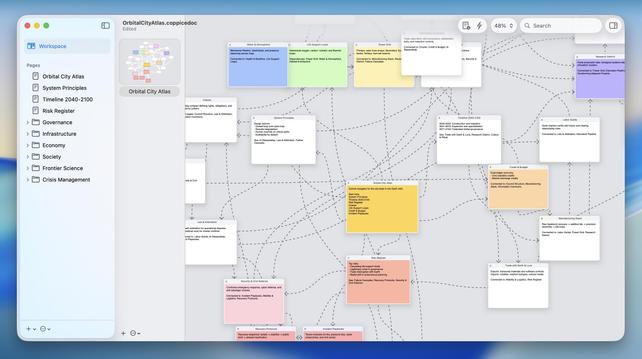

Codex is now so familiar with Coppice documents that I can hand it a *screenshot* of an existing Coppice document and it will build it.

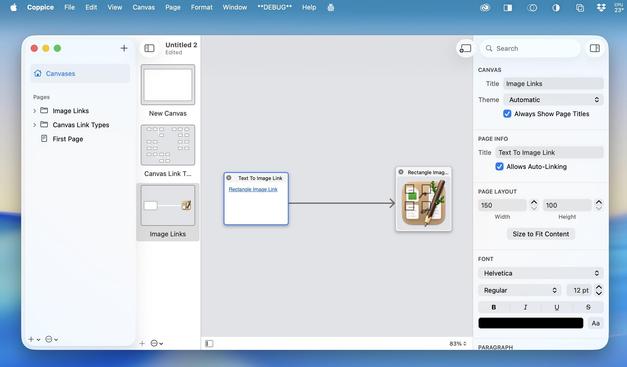

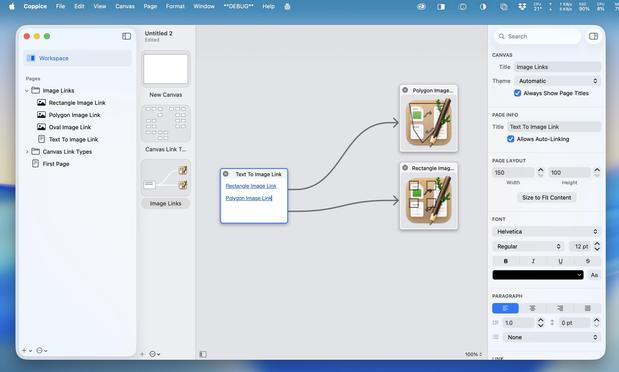

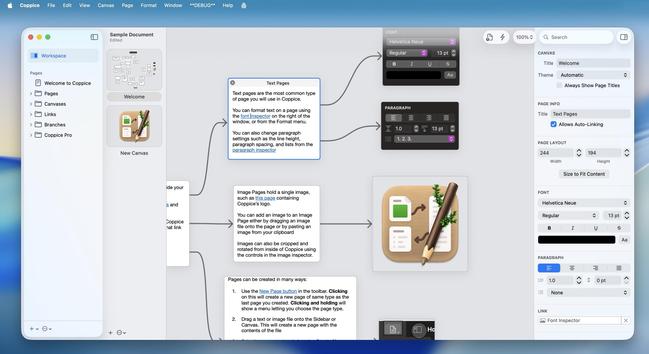

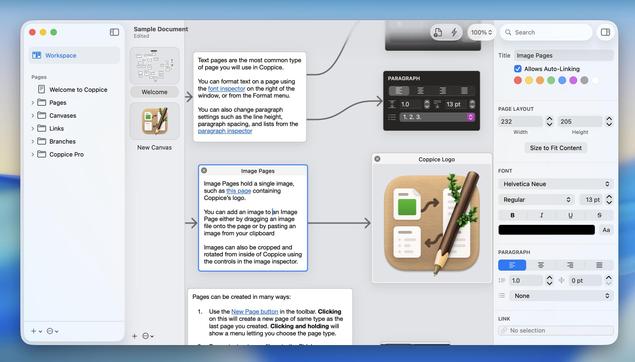

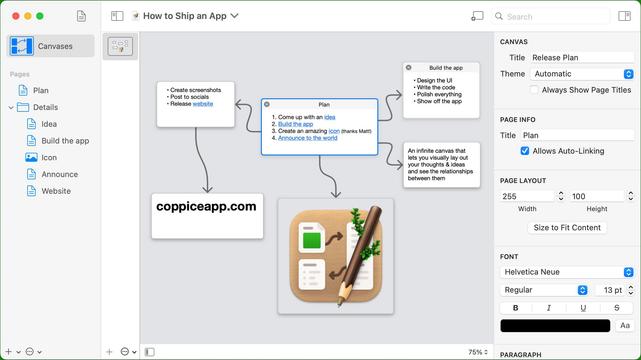

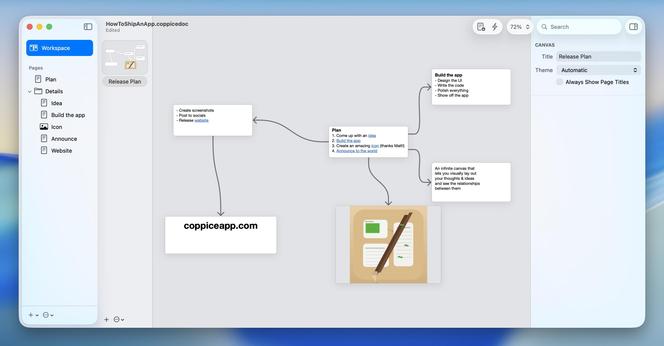

First image here is an image from the old Coppice website. I gave it to Codex and asked it to recreate the document.

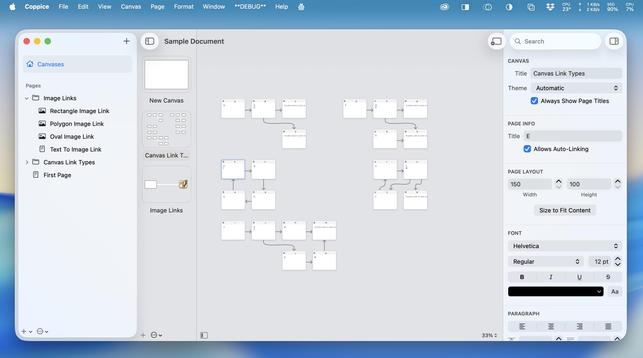

Second image is the recreation. Layout very similar, formatting, links intact, structure in the sidebar. And it did a cheap vector pass at recreating the image too.

As a reminder: it *had never seen this document format until an hour ago*

@ePirat given enough time and experimentation, you could have figured it out, yeah. I had it create a 'spec' to bring future chats up to speed, so you can understand the level of complexity involved:

https://gist.github.com/steventroughtonsmith/7c6eae6b1894c02d4824e35d857f4311

@stroughtonsmith I’ve followed your experiments with LLM-assisted coding for Apple platforms and wanted to ask, if possible: what’s the most efficient way for you to work right now, Codex in Xcode or using more “standard” tools like Claude Code or VS Code plugins?

Background: I’m a software developer with zero experience in app development for mobile, but I’ve always wanted to make a small video editing app (just applying existing CoreML models really) and I feel like my time has finally come

@stroughtonsmith awesome, thanks a lot! I’ve started using Codex quite a bit in non-Apple contexts, so I think/hope I’ll be fine.

Incredibly exciting! If I have some time on the weekend I’ll report back how it goes :)

@joe @stroughtonsmith Let's assume the price of models from Anthropic/OpenAI/etc shoots up and no one wants to use 'em.

Open weights models are a few months behind the SOTA closed models, are getting better quicker, and can be served profitably. (Inference is already profitable for model providers, it's training that is a giant expense).

I can't predict the market but we've crossed a threshold where today's models are good enough to write code, so people will continue using them to write code.

@mergesort @stroughtonsmith it’s hard to beat the end-user experience of a tool that can attempt an ok job at almost anything without specialization. but even on device, LLMs are probably overall more compute intensive and less predictable than the right tool for the job

seems like a natural evolution of the “agent” model that the undifferentiated LLM eventually becomes a frontend to more specialized models or traditional tools. that would also make it more practical to run those models locally

@joe @stroughtonsmith I think this flattens a few arguments into one that each deserve expansion.

1. Many people say LLMs when they mean agents and vice versa, so it's a bit hard to pull 'em apart.

2. But even LLMs that all "work the same way" aren't really undifferentiated; post-training a base model changes its characteristics a ton.

3. Using an LLM out of the box is mostly fine, but the progress in developing harnesses is what's making AI very capable at coding, and that's only getting better.

@mergesort @stroughtonsmith > the progress in developing harnesses is what's making AI very capable at coding, and that's only getting better.

maybe i'm getting the terms mixed up but that's what i'm thinking of. AIUI the effective LLM coding tools aren't just making the LLM generate a tree of source files; they're also generating directed commands that use traditional tools to manipulate the code base. it seems to me like that could generalize beyond codegen and existing UNIX tools

@joe @stroughtonsmith Even if they do it would probably be a dot com type blip, where ultimately the market demand comes back.

I do think we'll get *this* level of performance locally, but by that point many people will want a smarter model that makes fewer mistakes, knows you more intimately, etc. There are eventually diminishing returns to what people need (or are willing to pay for), but even with a fast takeoff I don't think we're getting to that at least until the 2030s.

@stroughtonsmith ah, got it! Thanks!

(It’s so annoying that the AS doesn’t go into detail about this).