@wingo @whitequark 😌Ritchie probably spins in his grave.

These standardization foobars commonly happen when implementors with commercial interests seek to „compromise“ their way out of being buggy.

Update: @david_chisnall points to this probably just being a misbehaviour of earlier tool chains. See below.

This has nothing to do with commercial vendors. The original Lex / YACC could not handle token streams that did not terminate with a separator. The very first C compiler would not correctly handle files that did not end with a newline (or, I think, other whitespace) and so the UNIX convention was to always end a file with a newline.

This limitation was picked up by most early C compilers, because they used the same tooling.

Modern compilers tend not to use this kind of tooling (and newer versions can handle this corner case), but the standard didn't want to exclude them.

As a QoI issue, most compilers will simply diagnose this and interpret the file as if it had a newline at the end. Early compilers would, as I recall, simply stop in the middle of parsing (I think; a parser generator I used 20+ years ago had this issue, but I don't remember the details).
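For illustration only, the check behind that kind of diagnostic can be sketched in a few lines of C. The function name `missing_final_newline` is made up for this example and is not taken from any real compiler; the sketch also ignores the related rule about a trailing backslash.

```c
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical helper: returns true when a non-empty source buffer does not
   end with a newline. An empty file is treated as fine. */
static bool missing_final_newline(const char *src, size_t len)
{
    return len > 0 && src[len - 1] != '\n';
}
```

A front end built this way could warn when the function returns true and then carry on as if a `'\n'` had been appended.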

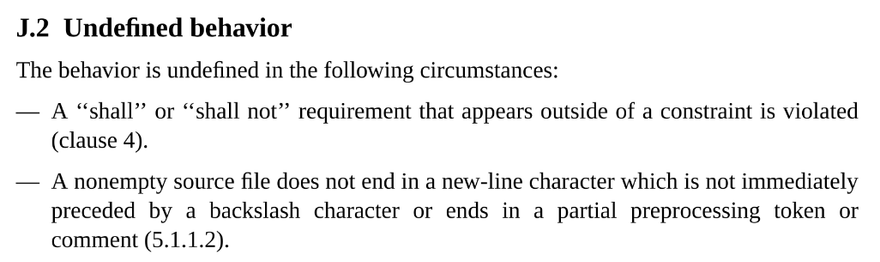

@david_chisnall @icing @wingo @whitequark Yes, but this doesn't justify UB at all. UB means "the compiler can do whatever it wants".

The missing newline could be specified as "mandatory, else the compilation will fail" or as "if missing, the compilation might fail".

I guess they defined it as UB to treat multiple files at once, concatenated without separation, thus meaning the last line of a file could be completed with the first of another...

@tdelmas @icing @wingo @whitequark

Not ending a header with a newline means some C compilers will join the last token of the header with the first of the next file, which leads to completely unpredictable outcomes. Not terminating the compilation unit with a newline causes those same compilers to fail to terminate during compilation.
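A toy model of the token-joining problem, assuming a preprocessor that pastes the header text directly in front of the including file without adding a separator. `naive_include` is a hypothetical name for this sketch, not how any particular compiler is implemented.

```c
#include <stdio.h>

/* Hypothetical model of a naive "include": copy the header text, then the
   source text, into out with nothing inserted between them. */
static void naive_include(const char *header, const char *source,
                          char *out, size_t out_size)
{
    snprintf(out, out_size, "%s%s", header, source);
}
```

Calling `naive_include("#define LIMIT 10", "int x;\n", buf, sizeof buf)` leaves `#define LIMIT 10int x;` in `buf`: the declaration has been swallowed into the macro definition, and everything after that point means something different from what the author wrote.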

Both of those were existing behaviours when ANSI C was standardised in 1989.

EDIT: UB wasn't meant to mean 'the compiler can do whatever it wants', it was meant to mean 'compilers cannot (for technical reasons) diagnose this and may do unexpected things'. Division by zero traps on a lot of architectures; adding a branch-if-zero before every divide would fix it but cause a lot of performance problems, so compilers may assume it doesn't happen. Use after free can't be statically detected in C, so compilers may assume it doesn't happen. A file not ending with a newline was probably detectable.
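To make the division trade-off concrete, here is a rough sketch of the two options. Both function names are invented for the example, and the checked version also guards the `INT_MIN / -1` overflow case, which goes beyond the zero check mentioned above.

```c
#include <limits.h>
#include <stdbool.h>

/* Unchecked divide: no branch emitted, but the behaviour is undefined when
   b == 0 (or when a == INT_MIN and b == -1). */
static int div_unchecked(int a, int b)
{
    return a / b;
}

/* Checked divide: pays for a branch on every call, but never reaches the
   undefined cases. Returns false and leaves *out untouched on bad input. */
static bool div_checked(int a, int b, int *out)
{
    if (b == 0 || (a == INT_MIN && b == -1)) {
        return false;
    }
    *out = a / b;
    return true;
}
```

The standard in effect lets compilers emit `div_unchecked` everywhere and leaves the decision to pay for `div_checked` to the programmer.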

@david_chisnall @icing @wingo @whitequark I hit this around 2008 in the PIC microcontroller toolchain (I assume MPLAB). Symptom was the last function in the file couldn't be linked to - as it wasn't compiled.

On the plus side, leveraging loosely remembered minutiae to suggest "try adding a newline at the end of the file" makes you look like a magician (when it was just a lucky guess).

I hope that's been fixed in that toolchain now, but haven't used PIC since then.

@Scatterdemic @icing @wingo @whitequark

The PIC C toolchain supported a very exciting version of C. As I recall, there were no variadics in the language and printf was handled specially by the compiler.

@whitequark @nafnlaus.bsky.social @wingo

I mean, that's a problem with undefined behaviour in general, isn't it

allowing the compiler to do anything whatsoever when it encounters a particular class of error doesn't make anything safer, whether that's a truncated file or a null pointer dereference or an infinite loop that makes no progress

rm -rf /