@stroughtonsmith For me, it's much less about slop and much more about the risk of societal, cognitive, and environmental damage (not to mention the basis of theft) that prevents me from getting excited about any of it 😔

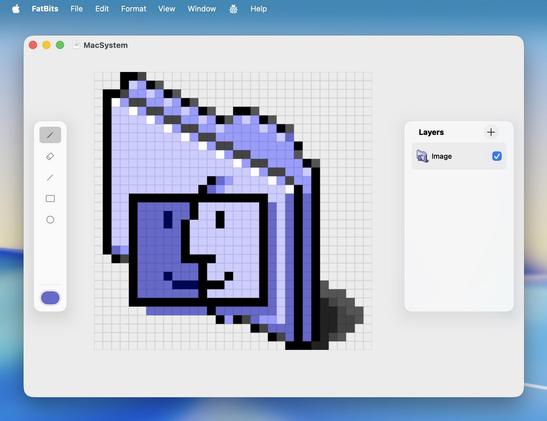

I'd much rather see more tools invented that make it easier to get started on new ideas and that _don't_ use LLMs… surely we as a whole could build towards that kind of future instead 😅

@dimitribouniol @stroughtonsmith Hmmm... You could look into GSD; just don't let it write code, only specs and examples, and have it split up the work.

GitHub - glittercowboy/get-shit-done: A light-weight and powerful meta-prompting, context engineering and spec-driven development system for Claude Code and OpenCode.

@stroughtonsmith

I think it’s also interesting that the well-structured Xcode and general corpus that was likely used in post-training can be exploited by current model search/exploration as well as LLM generation.

My guess is that the corpus is largely code that works and makes good use of idioms that you might use yourself, along with many others. The probability of generating slop is lower. This may make code generation a sweet spot for current LLM tech.